Revisiting Sharpness-Aware Minimization: A More Faithful and Effective Implementation

arXiv cs.AI / 3/12/2026

Opinion | Ideas & Deep Analysis | Models & Research

Key Points

- The paper analyzes why the standard practical implementation of Sharpness-Aware Minimization (SAM) works and introduces eXplicit Sharpness-Aware Minimization (XSAM) to address its limitations for single-step and multi-step ascent.

- It shows that the gradient at the ascent point, when applied to the current parameters, better approximates the direction toward the local maximum within the neighborhood than the local gradient alone.

- XSAM explicitly estimates the ascent direction to improve the approximation and designs a search space that effectively leverages gradient information from multi-step ascent, with negligible additional computational cost.

- The approach provides a unified formulation that applies to both single-step and multi-step ascent.

- Extensive experiments indicate that XSAM generalizes consistently better than existing SAM variants, with only modest computational overhead.
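
The second key point can be checked on a toy problem. The sketch below is not XSAM and is not taken from the paper: it applies the standard single-step SAM perturbation (a step of length `RHO` along the normalized gradient) to a hand-picked 2D quadratic loss, finds the highest-loss point in the neighborhood by brute force, and compares how well the local gradient and the ascent-point gradient align with the direction toward that point. The loss, the values of `A`, `RHO`, and `w`, and all function names are assumptions chosen for illustration.

```python
import math

# Illustrative assumptions: a 2D quadratic loss with unequal curvature.
A = (1.0, 10.0)   # curvature along each axis
RHO = 0.5         # neighborhood radius


def loss(w):
    return 0.5 * (A[0] * w[0] ** 2 + A[1] * w[1] ** 2)


def grad(w):
    return (A[0] * w[0], A[1] * w[1])


def cosine(u, v):
    dot = u[0] * v[0] + u[1] * v[1]
    return dot / (math.hypot(*u) * math.hypot(*v))


def ascent_point(w, rho=RHO):
    # Single-step SAM perturbation: move rho along the normalized gradient.
    g = grad(w)
    norm = math.hypot(*g)
    return (w[0] + rho * g[0] / norm, w[1] + rho * g[1] / norm)


def direction_to_local_max(w, rho=RHO, steps=3600):
    # The loss is convex, so its maximum over the ball lies on the boundary;
    # scan the boundary circle by brute force.
    angles = (2.0 * math.pi * k / steps for k in range(steps))
    best = max(
        angles,
        key=lambda t: loss((w[0] + rho * math.cos(t), w[1] + rho * math.sin(t))),
    )
    return (rho * math.cos(best), rho * math.sin(best))


w = (1.0, 0.1)
d_star = direction_to_local_max(w)

cos_local = cosine(grad(w), d_star)                 # local gradient
cos_ascent = cosine(grad(ascent_point(w)), d_star)  # gradient at ascent point

print(f"cosine(local gradient, direction to max)        = {cos_local:.3f}")
print(f"cosine(ascent-point gradient, direction to max) = {cos_ascent:.3f}")
```

In this example the ascent-point gradient aligns more closely with the direction toward the neighborhood maximum than the local gradient does. A single quadratic is only a sanity check of the intuition; it says nothing about the paper's analysis or about how XSAM estimates the ascent direction.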

Related Articles

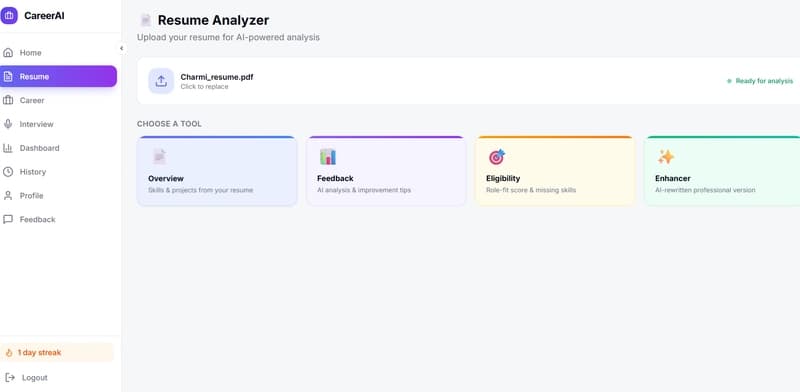

🚀 Resume Feedback Is Easy — Until You Try Making It Context-Aware

Dev.to

The Open-Source Voice AI Stack Every Developer Should Know in 2026

Dev.to

15 Best Lightweight Language Models Worth Running in 2026

Dev.to

![[M] LILA-E8, LILA-Leech: The Geometric Intelligence Manifesto. Why Sam Altman’s "Parameter Golf" is already over.](/_next/image?url=https%3A%2F%2Fmedia2.dev.to%2Fdynamic%2Fimage%2Fwidth%3D800%252Cheight%3D%252Cfit%3Dscale-down%252Cgravity%3Dauto%252Cformat%3Dauto%2Fhttps%253A%252F%252Fdev-to-uploads.s3.amazonaws.com%252Fuploads%252Farticles%252Fwt599bhy2we4ctk91wgm.png&w=3840&q=75)

[M] LILA-E8, LILA-Leech: The Geometric Intelligence Manifesto. Why Sam Altman’s "Parameter Golf" is already over.

Dev.to

Agent Diagnostics Mode — A Structured Technique for Iterative Prompt Tuning

Dev.to