BEAVER: A Training-Free Hierarchical Prompt Compression Method via Structure-Aware Page Selection

arXiv cs.CL / 3/23/2026


Key Points

- BEAVER is a training-free hierarchical prompt compression method for long-context LLMs. It shifts from token pruning to structure-aware page-level selection, reducing inference latency while preserving information fidelity.

- The approach maps variable-length contexts into dense page-level tensors using dual-path pooling to maximize hardware parallelism during inference.

- A hybrid planner combines semantic and lexical dual-branch selection with sentence smoothing to maintain discourse integrity across long documents.

- Empirical evaluations on four long-context benchmarks show that BEAVER performs comparably to state-of-the-art methods (e.g., LongLLMLingua) while reducing latency by 26.4x at 128k context length, and that it retains strong fidelity in multi-needle retrieval on the RULER benchmark.

- The authors provide their code at the stated URL, enabling practical adoption of the method.
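
To make the page-level idea in the points above concrete, here is a minimal sketch in plain Python. It is not the authors' implementation. Everything in it is an assumption made for illustration: the names `paginate`, `pool`, `lexical_overlap`, and `select_pages`, the toy `embed` function, the page size, the choice of mean plus max as the two pooling paths, and the weighted sum with `alpha` as the way the semantic and lexical branches are combined. The summary does not specify any of these details.

```python
import math

PAGE_SIZE = 4   # tokens per page (hypothetical value for the sketch)
DIM = 8         # toy embedding width (hypothetical value for the sketch)

def embed(token):
    """Toy deterministic one-hot embedding; stands in for real model embeddings."""
    vec = [0.0] * DIM
    vec[sum(ord(c) for c in token.lower()) % DIM] = 1.0
    return vec

def paginate(tokens, page_size=PAGE_SIZE):
    """Split a variable-length token list into fixed-size pages."""
    return [tokens[i:i + page_size] for i in range(0, len(tokens), page_size)]

def pool(tokens):
    """Assumed dual-path pooling: mean path concatenated with max path."""
    vecs = [embed(t) for t in tokens]
    mean = [sum(col) / len(vecs) for col in zip(*vecs)]
    peak = [max(col) for col in zip(*vecs)]
    return mean + peak

def cosine(a, b):
    """Cosine similarity, returning 0.0 when either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def lexical_overlap(page, query):
    """Fraction of distinct query terms that appear in the page."""
    q = {t.lower() for t in query}
    p = {t.lower() for t in page}
    return len(q & p) / len(q) if q else 0.0

def select_pages(tokens, query, k, alpha=0.5):
    """Score each page with both branches, keep the top k in document order."""
    pages = paginate(tokens)
    q_vec = pool(query)
    scores = [
        alpha * cosine(pool(p), q_vec) + (1 - alpha) * lexical_overlap(p, query)
        for p in pages
    ]
    top = sorted(range(len(pages)), key=lambda i: scores[i], reverse=True)[:k]
    return [pages[i] for i in sorted(top)]

context = "the cat sat on a mat the beaver built a dam near the river bank today".split()
kept = select_pages(context, ["beaver", "dam"], k=2)
compressed = [tok for page in kept for tok in page]
```

The sketch selects whole pages rather than individual tokens and returns them in their original order. It omits the sentence smoothing step, and it scores pages in a Python loop rather than as dense tensors, so it illustrates the selection logic only and says nothing about the reported latency gains.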

💡 Insights using this article

This article is featured in our daily AI news digest — key takeaways and action items at a glance.

Related Articles

Interactive Web Visualization of GPT-2

Reddit r/artificial

From infrastructure to AI: how Alibaba Cloud powers the global ambitions of Chinese companies

SCMP Tech

[R] Causal self-attention as a probabilistic model over embeddings

Reddit r/MachineLearning

The 5 software development trends that actually matter in 2026 (and what they mean for your startup)

Dev.to

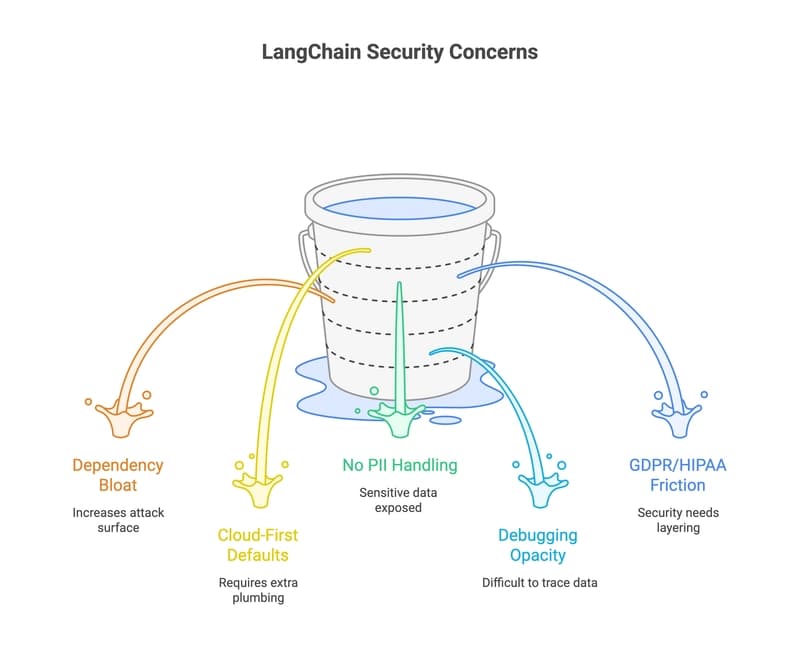

33 LangChain Alternatives That Won't Leak Your Data (2026 Guide)

Dev.to