OmniCompliance-100K: A Multi-Domain, Rule-Grounded, Real-World Safety Compliance Dataset

arXiv cs.CL / 3/17/2026


Key Points

- OmniCompliance-100K is a large, rule-grounded safety dataset for LLMs, containing 12,985 rules and 106,009 associated real-world compliance cases.

- The dataset spans 74 regulations and policies across domains including security, privacy, content safety, financial security, medical device risk management, educational integrity, and human rights protections.

- It was collected with a web-searching agent to ensure real-world relevance, and it addresses gaps left by the ad hoc taxonomies of prior safety datasets.

- Benchmarking experiments across different model scales reveal insights that can guide future LLM safety research and development.
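To make "rule-grounded" concrete, here is a minimal Python sketch of how rules and their linked cases might be represented and grouped. The summary does not describe the dataset's actual schema, so every name here (`Rule`, `ComplianceCase`, `group_cases_by_rule`, and all field names) is a hypothetical assumption for illustration, not the authors' format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """One rule extracted from a regulation or policy (hypothetical schema)."""
    rule_id: str
    regulation: str  # name of the source regulation or policy
    domain: str      # e.g. "privacy" or "content safety"
    text: str


@dataclass(frozen=True)
class ComplianceCase:
    """One real-world case tied to exactly one rule (hypothetical schema)."""
    case_id: str
    rule_id: str
    description: str


def group_cases_by_rule(rules, cases):
    """Return {rule_id: [cases]}; raise if a case cites an unknown rule."""
    grouped = {rule.rule_id: [] for rule in rules}
    for case in cases:
        if case.rule_id not in grouped:
            raise ValueError(f"case {case.case_id} cites unknown rule {case.rule_id}")
        grouped[case.rule_id].append(case)
    return grouped


def mean_cases_per_rule(grouped):
    """Average number of cases attached to each rule."""
    if not grouped:
        return 0.0
    return sum(len(cases) for cases in grouped.values()) / len(grouped)
```

With the figures reported above (106,009 cases over 12,985 rules), the same average works out to roughly eight cases per rule.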

Related Articles

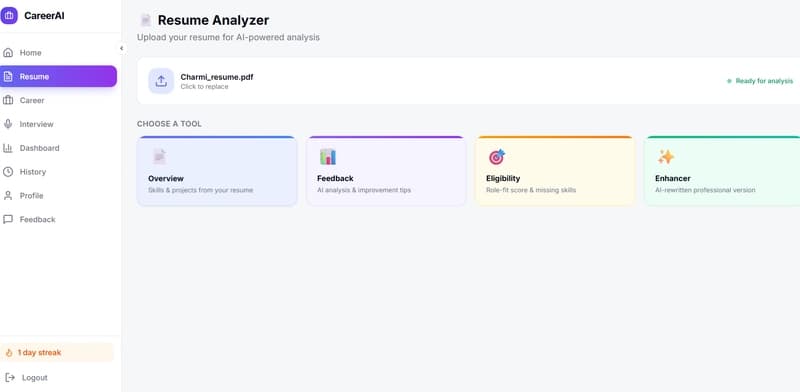

🚀 Resume Feedback Is Easy — Until You Try Making It Context-Aware

Dev.to

The Open-Source Voice AI Stack Every Developer Should Know in 2026

Dev.to

15 Best Lightweight Language Models Worth Running in 2026

Dev.to

![[M] LILA-E8, LILA-Leech: The Geometric Intelligence Manifesto. Why Sam Altman’s "Parameter Golf" is already over.](/_next/image?url=https%3A%2F%2Fmedia2.dev.to%2Fdynamic%2Fimage%2Fwidth%3D800%252Cheight%3D%252Cfit%3Dscale-down%252Cgravity%3Dauto%252Cformat%3Dauto%2Fhttps%253A%252F%252Fdev-to-uploads.s3.amazonaws.com%252Fuploads%252Farticles%252Fwt599bhy2we4ctk91wgm.png&w=3840&q=75)

[M] LILA-E8, LILA-Leech: The Geometric Intelligence Manifesto. Why Sam Altman’s "Parameter Golf" is already over.

Dev.to

Agent Diagnostics Mode — A Structured Technique for Iterative Prompt Tuning

Dev.to