PREF-XAI: Preference-Based Personalized Rule Explanations of Black-Box Machine Learning Models

arXiv cs.LG / 4/22/2026


Key Points

- The paper argues that XAI explanations should be tailored to individual users’ goals, preferences, and cognitive constraints, rather than using one-size-fits-all, model-centric approximations.

- It introduces PREF-XAI, reframing explanation generation as a preference-driven selection problem where multiple candidate explanations are evaluated against user-specific criteria.

- The proposed method generates rule-based explanation candidates, elicits user preferences through rankings, and models those preferences with an additive utility function inferred through robust ordinal regression.

- Experiments on real-world datasets indicate the approach can reconstruct user preferences from limited feedback, surface the most relevant explanations, and even discover explanation rules users did not initially consider.

- By connecting XAI with preference learning, the work motivates more interactive and adaptive explanation systems that improve over time with user input.
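
To make the preference-driven selection idea concrete, here is a minimal sketch of learning a utility over rule candidates from a partial ranking. It is not the paper's method: instead of robust ordinal regression, which reasons over the whole set of utility functions compatible with the user's ranking, it fits a single linear weighted-sum utility (a simple special case of an additive utility) with perceptron-style pairwise updates. The candidate names, the three criteria (coverage, confidence, simplicity), their values, and the `fit_weights` helper are all hypothetical and chosen only for illustration.

```python
from itertools import combinations

# Hypothetical rule candidates scored on three illustrative criteria,
# each scaled to [0, 1] with higher meaning better:
# (coverage, confidence, simplicity). These values are made up.
CANDIDATES = {
    "r1": (0.9, 0.6, 0.2),
    "r2": (0.5, 0.9, 0.8),
    "r3": (0.3, 0.7, 0.9),
    "r4": (0.8, 0.8, 0.5),
    "r5": (0.2, 0.4, 0.3),
}


def utility(weights, features):
    """Weighted-sum utility: a linear special case of an additive utility."""
    return sum(w * x for w, x in zip(weights, features))


def fit_weights(ranking, candidates, epochs=200, lr=0.1, margin=0.05):
    """Fit non-negative weights summing to 1 that reproduce a best-first ranking.

    Simplification: perceptron-style updates on violated pairs, yielding one
    compatible utility rather than the full compatible set.
    """
    n = len(next(iter(candidates.values())))
    w = [1.0 / n] * n
    # The ranking is best-first, so every pair comes out as (better, worse).
    pairs = list(combinations(ranking, 2))
    for _ in range(epochs):
        violated = False
        for better, worse in pairs:
            fb, fw = candidates[better], candidates[worse]
            if utility(w, fb) - utility(w, fw) < margin:
                violated = True
                # Move the weights toward the criteria where `better` wins,
                # clip at zero, then renormalize to sum to 1.
                w = [max(0.0, wi + lr * (b - c)) for wi, b, c in zip(w, fb, fw)]
                total = sum(w) or 1.0
                w = [wi / total for wi in w]
        if not violated:
            break
    return w


# Limited feedback: the user ranks only three of the five candidates.
user_ranking = ["r1", "r4", "r2"]
weights = fit_weights(user_ranking, CANDIDATES)

# Apply the learned utility to every candidate, including unranked ones.
ordered = sorted(CANDIDATES, key=lambda r: utility(weights, CANDIDATES[r]), reverse=True)
print("weights:", [round(w, 3) for w in weights])
print("ordering:", ordered)
```

Because the learned utility scores every candidate, rules the user never ranked (here `r3` and `r5`) receive a position too, which loosely mirrors the idea of surfacing explanations the user did not initially consider.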

Related Articles

No Free Lunch Theorem — Deep Dive + Problem: Reverse Bits

Dev.to

Salesforce Headless 360: Run Your CRM Without a Browser

Dev.to

RAG Systems in Production: Building Enterprise Knowledge Search

Dev.to

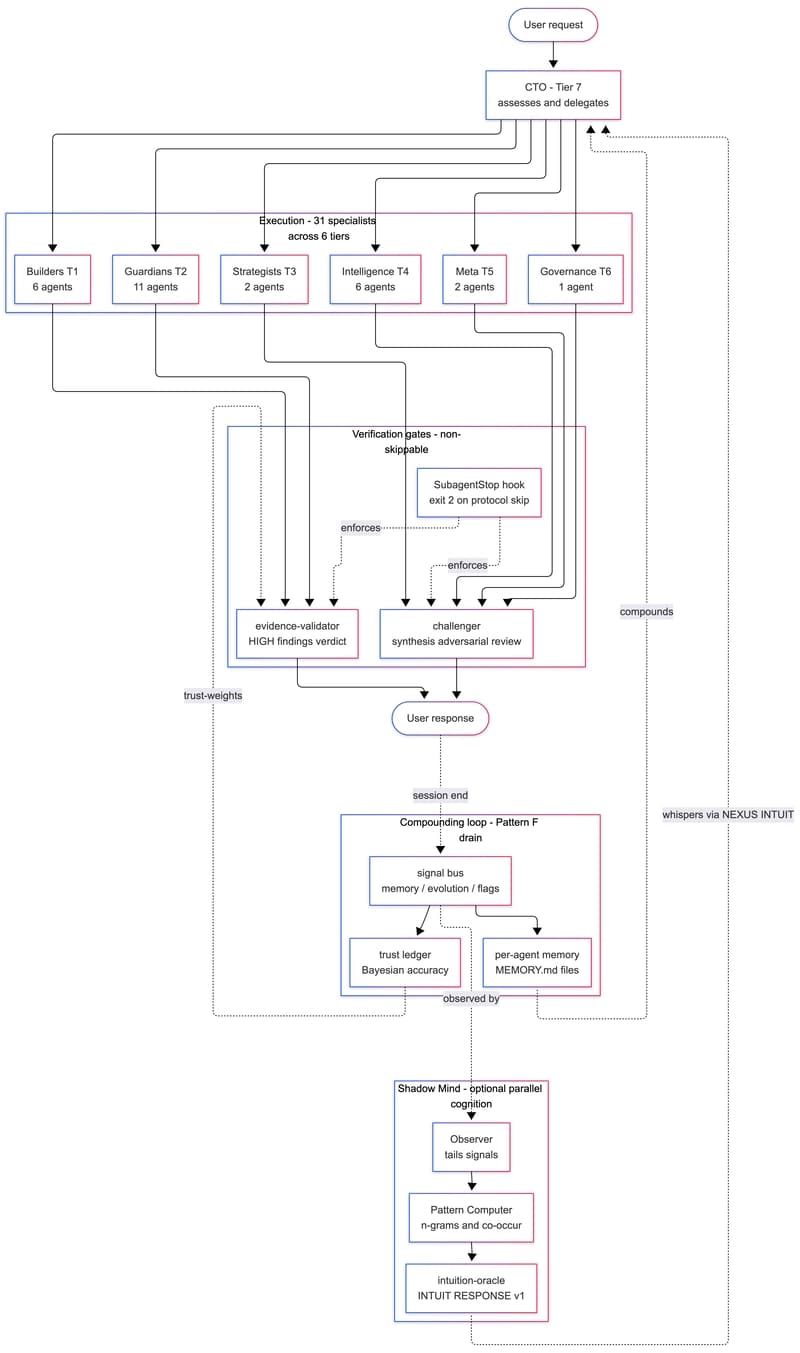

We Built a 31-Agent AI Team That Hires Itself, Critiques Itself, and Dreams

Dev.to

gpt-image-2 API: ship 2K AI images in Next.js for $0.21 (2026)

Dev.to