Submitted by /u/muyuu

GitHub - intel/auto-round: A SOTA quantization algorithm for high-accuracy low-bit LLM inference, seamlessly optimized for CPU/XPU/CUDA, with multi-datatype support and full compatibility with vLLM, SGLang, and Transformers.

Reddit r/LocalLLaMA / 5/1/2026

📰 News · Developer Stack & Infrastructure · Tools & Practical Usage · Models & Research

Key Points

- Intel’s GitHub repository intel/auto-round presents a quantization algorithm, billed as state-of-the-art, for high-accuracy, low-bit LLM inference.

- The project states that it is optimized for several hardware backends: CPU, Intel XPU, and CUDA-enabled GPUs.

- It supports multiple data types, which is meant to broaden the range of models and deployment targets it can serve.

- auto-round claims full compatibility with major inference frameworks and model ecosystems, including vLLM, SGLang, and Hugging Face Transformers.
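For readers new to the term, low-bit weight quantization can be sketched in a few lines. The example below is a minimal illustration that assumes plain symmetric round-to-nearest with one scale per group of weights. It is a common baseline, not auto-round's algorithm or API, and every function name in it is hypothetical.

```python
from typing import List, Tuple

Group = Tuple[List[int], float]  # (integer codes, scale) for one weight group


def quantize_group(weights: List[float], bits: int = 4) -> Group:
    """Symmetric round-to-nearest quantization of one group (illustrative baseline)."""
    qmax = 2 ** (bits - 1) - 1  # e.g. 7 for 4-bit signed integers
    max_abs = max(abs(w) for w in weights)
    # Fall back to a scale of 1.0 for an all-zero group to avoid dividing by zero.
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest integer code and clamp to the signed range.
    codes = [max(-qmax - 1, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def quantize(weights: List[float], bits: int = 4, group_size: int = 4) -> List[Group]:
    """Split the weights into fixed-size groups and quantize each one independently."""
    return [
        quantize_group(weights[i:i + group_size], bits)
        for i in range(0, len(weights), group_size)
    ]


def dequantize(groups: List[Group]) -> List[float]:
    """Map integer codes back to approximate floating-point weights."""
    restored: List[float] = []
    for codes, scale in groups:
        restored.extend(code * scale for code in codes)
    return restored


if __name__ == "__main__":
    original = [0.7, -0.2, 0.1, 0.0, 1.4, -1.4, 0.3, 0.05]
    groups = quantize(original, bits=4, group_size=4)
    restored = dequantize(groups)
    worst = max(abs(a - b) for a, b in zip(original, restored))
    print("largest reconstruction error:", worst)
```

With this scheme, each weight is stored as a small integer plus a shared per-group scale, and the reconstruction error of any weight is bounded by half of its group's scale. Smaller groups tighten that bound at the cost of storing more scales.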

Related Articles

Black Hat USA

AI Business

Every handle invocation on BizNode gets a WFID — a universal transaction reference for accountability. Full audit trail,...

Dev.to

I tracked my referral sources for 30 days. AI chatbots are beating Google.

Dev.to

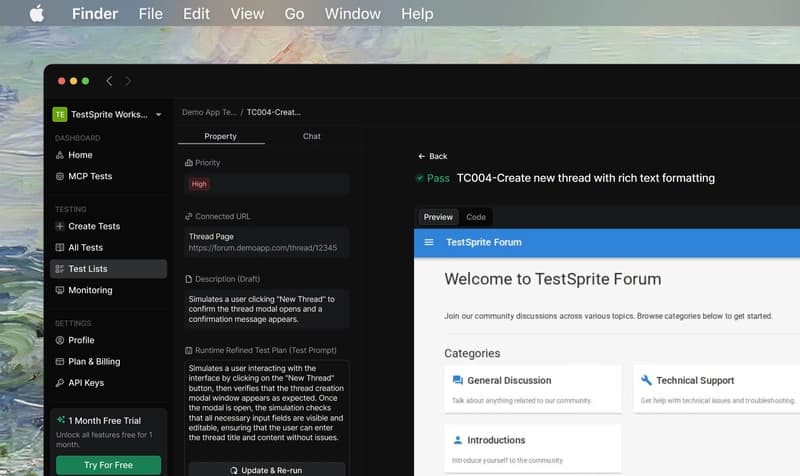

TestSprite: Review Mendalam dari Developer Indonesia — Lokalisasi, Tanggal, dan Mata Uang Rupiah

Dev.to

When AI Agents Trade Autonomously: Building Economic Actors That Never Sleep

Dev.to