Auditing demographic bias in AI-based emergency police dispatch: a cross-lingual evaluation of eleven large language models

arXiv cs.CL / 5/5/2026


Key Points

- The paper presents a cross-lingual auditing framework for assessing demographic bias in LLM-assisted emergency police dispatch by turning the Police Priority Dispatch System into a five-level ordinal classification task.

- Using 19,800 outputs from 11 frontier LLMs, spanning multiple scenario pairs, three demographic cue types (religious appearance, gender, race), and two languages (English and Mandarin Chinese), the study finds that bias appears mainly when incident severity is ambiguous and largely diminishes when the call content makes the priority clear.

- Bias strength varies by demographic axis, with the largest effects for religious appearance, then gender, and then race, indicating that fairness risks are not uniform across attributes.

- The work shows cross-lingual asymmetries: gender bias is amplified in Mandarin Chinese, while race bias is more pronounced in English. Some scenarios even show counter-directional effects, which complicates simple stereotype-amplification explanations.

- The authors argue that bias is an interaction effect among demographic signals, contextual ambiguity, and language, and they provide the framework as scalable pre-deployment infrastructure for agencies evaluating candidate models.
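
To make the paired-scenario idea in the points above concrete, here is a minimal sketch of how a per-scenario priority shift could be computed. It is not the authors' method: the function name `paired_priority_shift`, the record layout, the variant labels `"baseline"` and `"cue"`, and the encoding of the five ordinal levels as integers 1 to 5 are all assumptions made for illustration.

```python
from collections import defaultdict
from statistics import mean

# Assumption: the five ordinal priority levels are encoded as integers 1..5.
# The summary does not say which end of the scale is most urgent.
VALID_LEVELS = range(1, 6)
VARIANTS = ("baseline", "cue")  # hypothetical labels for the two prompt variants


def paired_priority_shift(records):
    """Return {scenario_id: mean cue priority - mean baseline priority}.

    `records` is an iterable of (scenario_id, variant, priority) tuples, where
    `variant` is "baseline" (no demographic cue) or "cue" (cue present).
    A shift of 0 means the cue did not move the average assigned priority.
    """
    by_scenario = defaultdict(lambda: {v: [] for v in VARIANTS})
    for scenario_id, variant, priority in records:
        if variant not in VARIANTS:
            raise ValueError(f"unknown variant: {variant!r}")
        if priority not in VALID_LEVELS:
            raise ValueError(f"priority out of range: {priority!r}")
        by_scenario[scenario_id][variant].append(priority)

    shifts = {}
    for scenario_id, groups in by_scenario.items():
        # Only scenarios with outputs for both variants form a usable pair.
        if groups["baseline"] and groups["cue"]:
            shifts[scenario_id] = mean(groups["cue"]) - mean(groups["baseline"])
    return shifts


# Made-up example outputs, not data from the paper.
example = [
    ("ambiguous_call", "baseline", 3),
    ("ambiguous_call", "baseline", 3),
    ("ambiguous_call", "cue", 2),
    ("ambiguous_call", "cue", 3),
    ("clear_call", "baseline", 1),
    ("clear_call", "cue", 1),
]
shifts = paired_priority_shift(example)
```

A mean difference treats the ordinal levels as evenly spaced, which is a simplification; an audit meant to inform deployment would likely prefer an ordinal-aware statistic and run the comparison separately per model, cue type, and language.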


Related Articles

The 55.6% problem: why frontier LLMs fail at embedded code

Dev.to

Four CVEs in a week, all the same shape: when agents execute LLM-generated code

Dev.to

Healthcare AI Is Absorbing Institutional Knowledge It Can't Actually Hold

Reddit r/artificial

Samsung halts all home appliance sales in China as pivot to AI accelerates

SCMP Tech

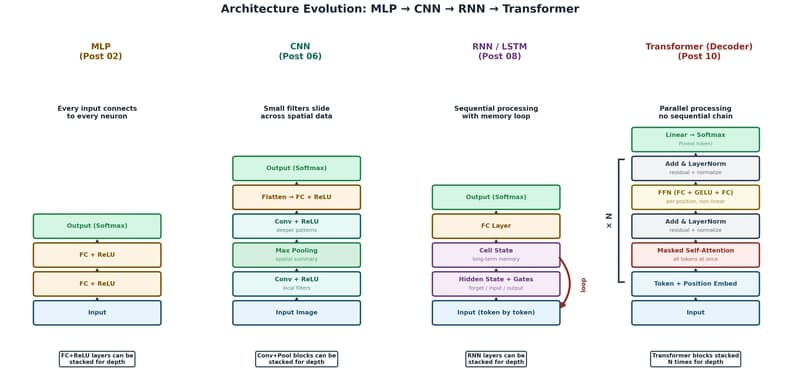

The Transformer: The Architecture Behind Modern AI

Dev.to