| A bit of context. I was coding up a little html tower defense game where you can alter the path by placing additional waypoints. My setup: 32gb ram with 16gb vram 5070 ti. Using AesSedai/Qwen3.6-35B-A3B-GGUF IQ4_XS on LM Studio with OpenCode. I've graduated from one-shot vibe-coding prompts. The spec for this game was complicated enough that it couldn't have been done in LM Studio so I tried OpenCode. The project was chugging along, Qwen3.6 35b-a3b was getting things done when 27b dropped. Naturally I had to try it. Only problem is that I couldn't use any of the Q4 models due to vram issues, so I dropped to an IQ3_M model from mradermacher/Qwen3.6-27B-i1-GGUF. I had worries that IQ3_M would have been too much compression but it did fine and was even able to find a difficult bug that IQ4_XS version of Qwen3.6 35b-a3b couldn't. They say dense models handle compression better than MoE models. Is that the reason for this? What are other people's experience with 35b-a3b vs 27b versions of Qwen3.6? Using LM Studio, I got 50-60 tokens per second with Qwen3.6 35b-a3b (AesSedai/Qwen3.6-35B-A3B-GGUF IQ4_XS) but the prompt processing gets real slow sometimes. I got 40ish tokens per second with mradermacher/Qwen3.6-27B-i1-GGUF IQ3_M but it was decent speed throughout. How are people's experiences with these two models at 16gb vram? Anyone doing actual work with IQ3 models of 27b? Oh, the Waypoint Tower Defense game is done and can be played on htmlbin. The save/load doesn't seem to work on their site, but if you download the file and open it in browser, it'll work fine. It's a self-contained single html game. Mean to be like minesweeper but for tower defense. [link] [comments] |

Switched from Qwen3.6 35b-a3b to Qwen3.6 27b mid coding and it's noticeably better!

Reddit r/LocalLLaMA / 4/27/2026

💬 OpinionSignals & Early TrendsTools & Practical UsageModels & Research

Key Points

- A developer running local coding models in LM Studio switched mid-project from Qwen3.6 35B-A3B (GGUF IQ4_XS) to Qwen3.6 27B (GGUF IQ3_M) due to VRAM constraints.

- They reported that the 27B IQ3_M model produced noticeably better results for their complex HTML tower defense coding task, including finding a hard bug that the 35B IQ4_XS model couldn’t.

- Performance differed: the 35B IQ4_XS model reached about 50–60 tokens/sec but had occasional slowdowns in prompt processing, while the 27B IQ3_M model ran around ~40 tokens/sec more consistently.

- The post raises the question of whether dense 27B models compress better than MoE 35B variants, and asks others for their experiences comparing Qwen3.6 35B vs 27B on 16GB VRAM systems.

- The author also shares a playable self-contained HTML version of the game, noting that save/load may require downloading the file and opening it locally in a browser.

💡 Insights using this article

This article is featured in our daily AI news digest — key takeaways and action items at a glance.

Related Articles

Black Hat USA

AI Business

Can Geometric Deep Learning lead eliminate the need of "Brute Force" pre-training [D]

Reddit r/MachineLearning

Product Photo Editing with AI: A Complete Guide for Small Businesses

Dev.to

I Spent Weeks Reverse-Engineering OpenClaw. Here's What Nobody Tells You.

Dev.to

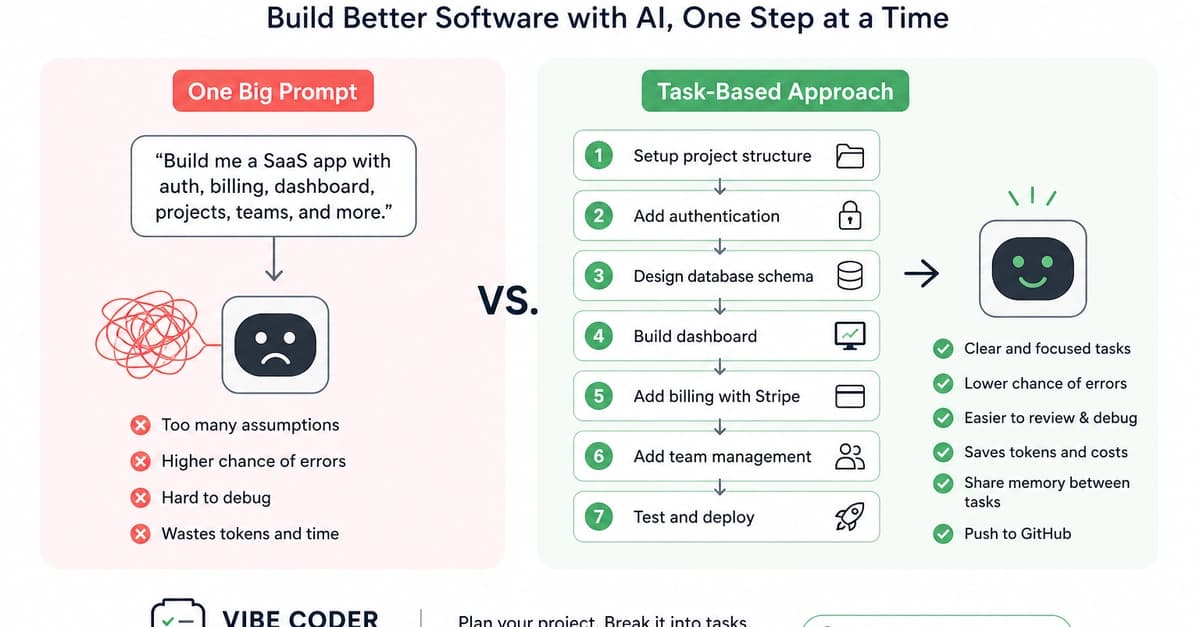

Why Task-Based Vibe Coding Is Better for Building Real Software Products

Dev.to