GenAssets: Generating in-the-wild 3D Assets in Latent Space

arXiv cs.CV / 4/28/2026


Key Points

- GenAssets introduces a 3D latent diffusion approach to generate high-quality 3D traffic-participant assets from in-the-wild LiDAR and camera data, aiming to improve realism and diversity for multi-sensor autonomy simulation.

- The paper argues that prior neural-rendering reconstruction methods are slow and often render well only near the original viewpoints, while diffusion methods struggle with sparse, occluded driving scenes.

- A core contribution is a “reconstruct-then-generate” pipeline: occlusion-aware neural rendering builds a high-quality latent space, and then a diffusion model generates assets within that latent space.

- The authors report that their method outperforms existing reconstruction and generation baselines, enabling more diverse and scalable content creation for simulation workflows.

- The work is positioned as an enabler for safer end-to-end development of autonomous systems by generating complete geometry and appearance for simulated actors.
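
The "reconstruct-then-generate" idea in the key points can be illustrated with a deliberately small toy in NumPy. This is a sketch under stated assumptions, not the authors' implementation: a fixed linear `decoder` stands in for the neural renderer, a masked least-squares fit stands in for occlusion-aware reconstruction, and a closed-form Gaussian noise predictor stands in for a trained diffusion network. All function names, dimensions, and the noise schedule are hypothetical choices made for illustration.

```python
import numpy as np

def fit_latent(decoder, observation, visible):
    """Stage 1 (toy): recover a latent code from a partially occluded observation.

    Only entries flagged as visible enter the least-squares fit, which is the
    sense in which this stand-in step is occlusion-aware.
    """
    z, *_ = np.linalg.lstsq(decoder[visible], observation[visible], rcond=None)
    return z

def noise_schedule(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Linear DDPM-style schedule: per-step betas and cumulative alpha products."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    return betas, np.cumprod(1.0 - betas)

def gaussian_eps_predictor(z_t, alpha_bar, mean, var):
    """Closed-form E[eps | z_t] if clean latents were N(mean, diag(var)).

    Assumption: a real system would use a trained network here.
    """
    centered = z_t - np.sqrt(alpha_bar) * mean
    return np.sqrt(1.0 - alpha_bar) * centered / (alpha_bar * var + 1.0 - alpha_bar)

def sample_latents(num_samples, mean, var, betas, alpha_bars, rng):
    """Stage 2 (toy): DDPM ancestral sampling carried out in the latent space."""
    z = rng.standard_normal((num_samples, mean.shape[0]))
    for t in range(len(betas) - 1, -1, -1):
        eps_hat = gaussian_eps_predictor(z, alpha_bars[t], mean, var)
        z = (z - betas[t] / np.sqrt(1.0 - alpha_bars[t]) * eps_hat) / np.sqrt(1.0 - betas[t])
        if t > 0:  # no noise is added on the final step
            z = z + np.sqrt(betas[t]) * rng.standard_normal(z.shape)
    return z

rng = np.random.default_rng(0)
obs_dim, latent_dim, num_assets = 32, 4, 200
decoder = rng.standard_normal((obs_dim, latent_dim))  # hypothetical linear "renderer"
true_latents = 2.0 * rng.standard_normal((num_assets, latent_dim)) + 1.0

# Reconstruct: fit one latent per asset from observations with about half the entries hidden.
latents = []
for z_true in true_latents:
    observation = decoder @ z_true
    visible = rng.random(obs_dim) > 0.5
    latents.append(fit_latent(decoder, observation, visible))
latents = np.stack(latents)

# Generate: sample new latents from the diffusion process, then decode them.
mean, var = latents.mean(axis=0), latents.var(axis=0)
betas, alpha_bars = noise_schedule()
generated = sample_latents(500, mean, var, betas, alpha_bars, rng)
assets = generated @ decoder.T  # complete "assets", including entries never observed
```

The point of the two-stage split is that generation happens over compact latent codes rather than raw sensor data, so every sampled latent decodes to a complete asset even though each training observation was only partially visible.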

Related Articles

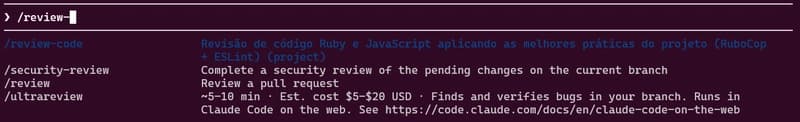

how to use skills from Claude Code A.K.A Claudinho.

Dev.to

Behind the Scenes of a Self-Evolving AI: The Architecture of Tian AI

Dev.to

Meet Tian AI: Your Completely Offline AI Assistant for Android

Dev.to

UK to develop AI hardware plan

Tech.eu

Copilot Cowork | The Control Plane for Long-Running AI Work | A Rahsi Framework™

Dev.to