ParaRNN: Large-Scale Nonlinear RNNs, Trainable in Parallel

Apple Machine Learning Journal / 4/23/2026

📰 NewsDeveloper Stack & InfrastructureSignals & Early TrendsModels & Research

Key Points

- ParaRNNは、再帰型ニューラルネットワーク(RNN)の推論効率の高さを活かしつつ、これまでボトルネックだった“逐次計算”の性質を突破して、大規模RNNの学習を可能にする新しいアプローチです。

- Appleの研究者による進展により、RNNの学習を大幅に効率化でき、数十億パラメータ級のスケールでの学習が初めて現実的になります。

- 学習可能性が広がることで、LLM設計においてRNN系のアーキテクチャ選択肢が増え、特に計算・メモリ制約のある環境での展開に適した選択肢が増える見込みです。

- 注意(Transformer)ベースに比べてRNNはメモリと計算コスト面で有利になりやすい一方、スケーリング課題の解消が実用上の価値を押し上げる内容です。

Recurrent Neural Networks (RNNs) are naturally suited to efficient inference, requiring far less memory and compute than attention-based architectures, but the sequential nature of their computation has historically made it impractical to scale up RNNs to billions of parameters. A new advancement from Apple researchers makes RNN training dramatically more efficient — enabling large-scale training for the first time and widening the set of architecture choices available to practitioners in designing LLMs, particularly for resource-constrained deployment.

In ParaRNN: Unlocking Parallel Training…

Continue reading this article on the original site.

Read original →Related Articles

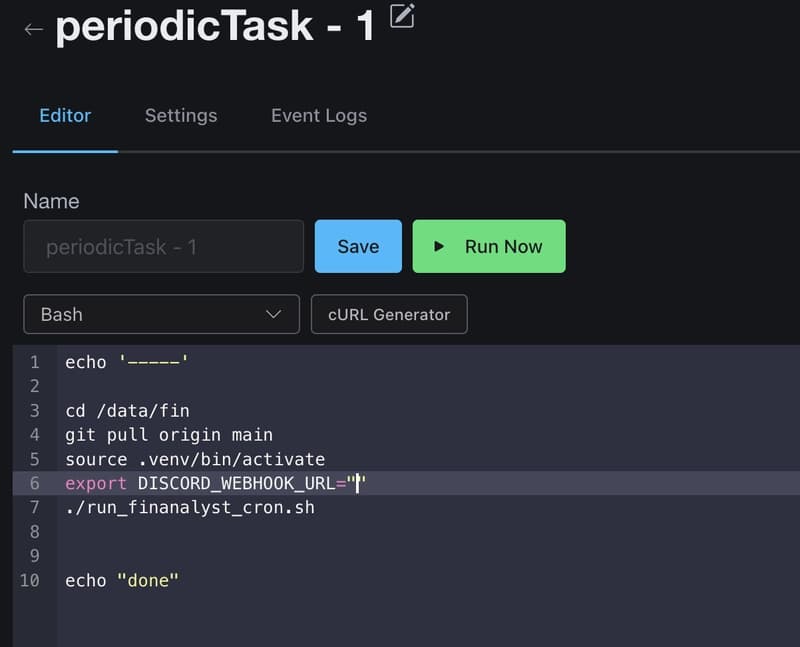

How I Use GitHub Copilot + RapidForge to Generate Daily Stock Ideas

Dev.to

Big Tech firms are accelerating AI investments and integration, while regulators and companies focus on safety and responsible adoption.

Dev.to

Anthropic CVP Run 3 — Does Claude's Safety Stack Scale Down to Haiku 4.5?

Dev.to

Mend.io Releases AI Security Governance Framework Covering Asset Inventory, Risk Tiering, AI Supply Chain Security, and Maturity Model

MarkTechPost

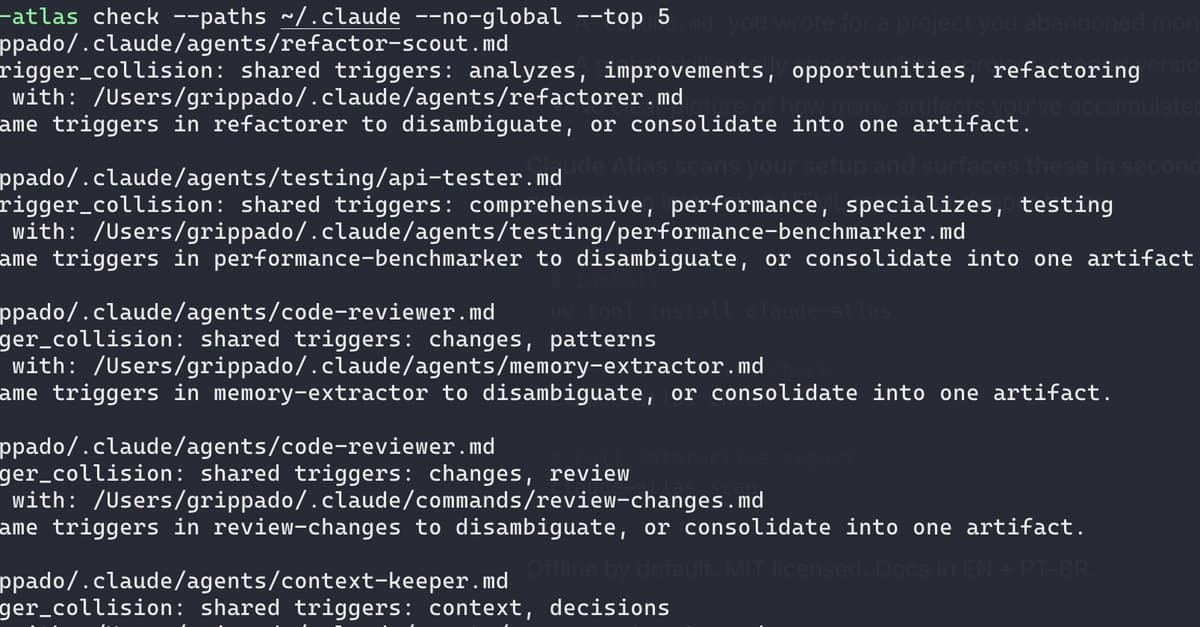

I audited my own Claude Code setup and found 21 issues in 72 artifacts

Dev.to