Heuristic Classification of Thoughts Prompting (HCoT): Integrating Expert System Heuristics for Structured Reasoning into Large Language Models

arXiv cs.AI / 4/15/2026


Key Points

- The paper identifies two key weaknesses of LLM-based reasoning on complex problems: the inherently stochastic, non-deterministic nature of token sampling, and a static separation between the reasoning process and dynamically retrieved knowledge.

- It proposes HCoT (Heuristic-Classification-of-Thoughts), a prompting/control schema that integrates a heuristic classification model into the LLM generation loop to guide and stabilize reasoning trajectories.

- HCoT uses a structured problem space with reusable abstract solution components so that the model can dynamically choose reasoning strategies rather than relying on fixed, decoupled decision-making.

- Experiments on two inductive reasoning tasks with ill-defined search spaces show HCoT outperforming prior prompting approaches such as Tree-of-Thoughts and Chain-of-Thought.

- On the well-structured 24 Game task, HCoT improves token efficiency versus Tree-of-Thoughts with breadth-first search and provides a favorable accuracy–cost trade-off on a Pareto frontier.
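To make "a heuristic classification model in the generation loop" more concrete, here is a minimal sketch loosely following the classic expert-system formulation of heuristic classification (data abstraction, heuristic match, refinement). It re-classifies the current reasoning state before every model call, so the chosen strategy can change mid-trajectory. This is not the paper's implementation. The strategy library (`STRATEGIES`), the feature extractor (`abstract_data`), the matching rules (`heuristic_match`), the prompt template (`refine`), the driver (`hcot_loop`), and the `llm` callable are all hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical library of reusable, abstract solution components (strategies).
STRATEGIES = {
    "enumerate": "Systematically list candidate answers and test each one.",
    "decompose": "Split the problem into sub-goals and solve them in order.",
    "analogize": "Recall a similar solved problem and adapt its solution.",
}


@dataclass
class Features:
    has_numbers: bool  # does the current state mention any digits?
    num_clauses: int   # crude proxy for the number of constraints (comma count)


def abstract_data(state: str) -> Features:
    """Data abstraction: map the raw reasoning state to coarse features."""
    return Features(
        has_numbers=any(ch.isdigit() for ch in state),
        num_clauses=state.count(","),
    )


def heuristic_match(f: Features) -> str:
    """Heuristic match: choose an abstract solution class (illustrative rules only)."""
    if f.has_numbers:
        return "enumerate"
    if f.num_clauses >= 2:
        return "decompose"
    return "analogize"


def refine(strategy: str, state: str) -> str:
    """Refinement: instantiate the chosen strategy as a concrete prompt."""
    return f"Strategy: {STRATEGIES[strategy]}\nState: {state}\nNext step:"


def hcot_loop(
    problem: str, llm: Callable[[str], str], max_steps: int = 3
) -> list[tuple[str, str]]:
    """Re-classify the state before every LLM call and record (strategy, step) pairs."""
    state, trace = problem, []
    for _ in range(max_steps):
        strategy = heuristic_match(abstract_data(state))
        step = llm(refine(strategy, state))
        trace.append((strategy, step))
        state = f"{state}\n{step}"  # the new step becomes part of the state
    return trace


if __name__ == "__main__":
    # Stub model for demonstration only; swap in a real LLM call.
    for strategy, step in hcot_loop("Use 4, 7, 8, 8 to make 24", lambda p: "ok"):
        print(strategy, "|", step)
```

The design choice worth noting is that strategy selection happens outside the sampler and is deterministic given the state. That is one plausible way to "stabilize" trajectories, but it is an assumption here, not a claim about how HCoT does it.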

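The "accuracy–cost trade-off on a Pareto frontier" can be made precise. A configuration is Pareto-optimal when no other configuration uses no more tokens and is at least as accurate, while being strictly better on one of the two axes. The short sketch below filters a set of runs down to that frontier. The `Run` type and helper names are hypothetical, and the numbers in the demo are made-up placeholders, not results from the paper.

```python
from typing import NamedTuple


class Run(NamedTuple):
    name: str
    tokens: float    # cost per problem (lower is better)
    accuracy: float  # fraction of problems solved (higher is better)


def dominates(a: Run, b: Run) -> bool:
    """True if `a` is no worse than `b` on both axes and strictly better on at least one."""
    no_worse = a.tokens <= b.tokens and a.accuracy >= b.accuracy
    strictly_better = a.tokens < b.tokens or a.accuracy > b.accuracy
    return no_worse and strictly_better


def pareto_frontier(runs: list[Run]) -> list[Run]:
    """Keep only non-dominated runs, sorted by increasing cost."""
    frontier = [r for r in runs if not any(dominates(o, r) for o in runs)]
    return sorted(frontier, key=lambda r: r.tokens)


if __name__ == "__main__":
    # Made-up placeholder numbers, NOT results from the paper.
    runs = [Run("A", 100, 0.50), Run("B", 300, 0.70), Run("C", 400, 0.60), Run("D", 1000, 0.90)]
    print([r.name for r in pareto_frontier(runs)])  # ['A', 'B', 'D']
```

Here run C drops out because B is both cheaper and more accurate. A method "improves token efficiency" in this sense when it reaches a given accuracy with fewer tokens than a competitor on the same plot.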
Related Articles

The myth of Claude Mythos crumbles as small open models hunt the same cybersecurity bugs Anthropic showcased

THE DECODER

Claude Opus 4.7 vs 4.6: What Actually Changed and What Breaks on Migration

Dev.to

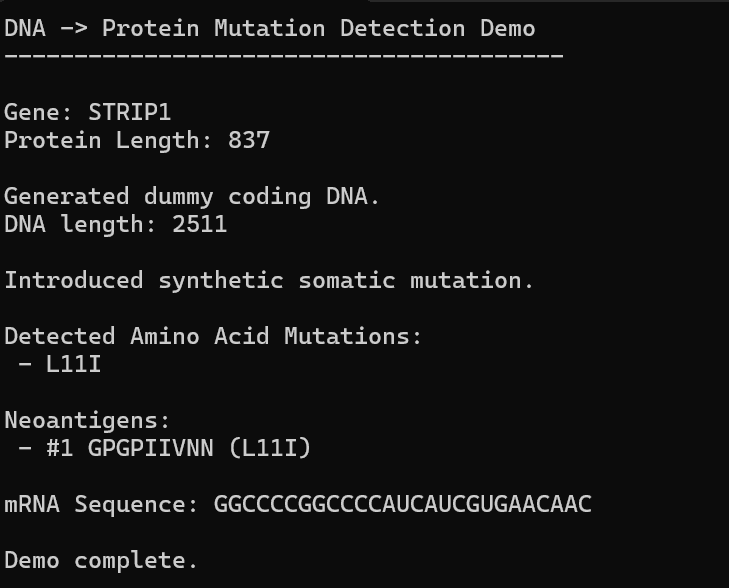

AI, Hope, and Healing: Can We Build Our Own Personalized mRNA Cancer Vaccine Pipeline?

Dev.to

The Hotel AI Visibility Crisis: Why AI Cites Review Sites More Than Your Own Website

Dev.to

Automate Your Literature Review: Build a Custom AI Pipeline

Dev.to