What do your logits know? (The answer may surprise you!)

arXiv cs.AI / 4/14/2026


Key Points

- The paper studies how much information can be probed from transformer internals beyond what the model's outputs directly show. It focuses on two bottlenecks: low-dimensional projections of intermediate representations obtained with a "tuned lens", and the final top-k logits.

- Using vision-language models, it presents a systematic comparison of how much task-relevant and task-irrelevant information remains at different representational “bottlenecks” during compression from the residual stream to the logits.

- The authors find that even easily accessible bottlenecks, such as the model's top logit values, can leak task-irrelevant information about image queries.

- In some cases, the leakage from top logit–based signals matches the amount of information obtainable from direct projections of the full residual stream.

- The work highlights an information-leakage risk for model owners, where probing by users could reveal data intended to be inaccessible, motivating stronger controls and evaluation of internal-exposure attack surfaces.
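
To make the idea of probing top-k logits concrete, here is a minimal sketch on synthetic data. It is not the paper's method or data. The logit generator, the token indices, the helper names (`top_k_features`, `make_synthetic_logits`, `fit_logistic_probe`, `run_probe_demo`), and the choice of a plain logistic-regression probe are all assumptions made for illustration. A task-irrelevant binary attribute slightly boosts one of two tokens, and a linear probe is trained to recover that attribute from the top-k logits alone.

```python
import numpy as np


def top_k_features(logits, k):
    """Keep the k largest logits in each row and zero out all others."""
    idx = np.argpartition(logits, -k, axis=1)[:, -k:]
    rows = np.arange(logits.shape[0])[:, None]
    out = np.zeros_like(logits)
    out[rows, idx] = logits[rows, idx]
    return out


def make_synthetic_logits(n, vocab, rng):
    """Toy stand-in for model logits (hypothetical, not real model output)."""
    answer = rng.integers(0, 10, size=n)   # task-relevant label
    hidden = rng.integers(0, 2, size=n)    # task-irrelevant attribute
    logits = rng.normal(size=(n, vocab))
    rows = np.arange(n)
    logits[rows, answer] += 5.0            # the answer token dominates
    # hidden == 1 boosts token 20, hidden == 0 boosts token 21 (arbitrary choice)
    logits[rows, 20 + (1 - hidden)] += 3.0
    return logits, hidden


def fit_logistic_probe(x, y, lr=0.1, steps=500):
    """Fit a logistic-regression probe with full-batch gradient descent."""
    x = np.hstack([x, np.ones((x.shape[0], 1))])  # append bias column
    w = np.zeros(x.shape[1])
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-np.clip(x @ w, -30, 30)))
        w -= lr * (x.T @ (p - y)) / len(y)
    return w


def probe_accuracy(w, x, y):
    """Fraction of examples where the probe predicts the hidden attribute."""
    x = np.hstack([x, np.ones((x.shape[0], 1))])
    return float(np.mean((x @ w > 0) == (y == 1)))


def run_probe_demo(seed=0, k=5):
    """Train on 1500 synthetic examples, evaluate on 500 held-out ones."""
    rng = np.random.default_rng(seed)
    logits, hidden = make_synthetic_logits(2000, 50, rng)
    feats = top_k_features(logits, k)
    w = fit_logistic_probe(feats[:1500], hidden[:1500])
    return probe_accuracy(w, feats[1500:], hidden[1500:])
```

Calling `run_probe_demo()` returns the probe's held-out accuracy. In this toy setup the probe should score well above the 50% chance level, because the boosted token usually lands in the top k even though it is never the model's answer. Real models would need real logits and a held-out evaluation, and the result says nothing about how much leakage the paper measured.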

Related Articles

Small NSFW model for chatbot

Reddit r/LocalLLaMA

ChatGPT for Nurses: Prompts That Help You Document, Communicate, and Study

Dev.to

I Added a Stopwatch to My AI in 1 LOC Using the Livingrimoire While Corporations Need a Year

Dev.to

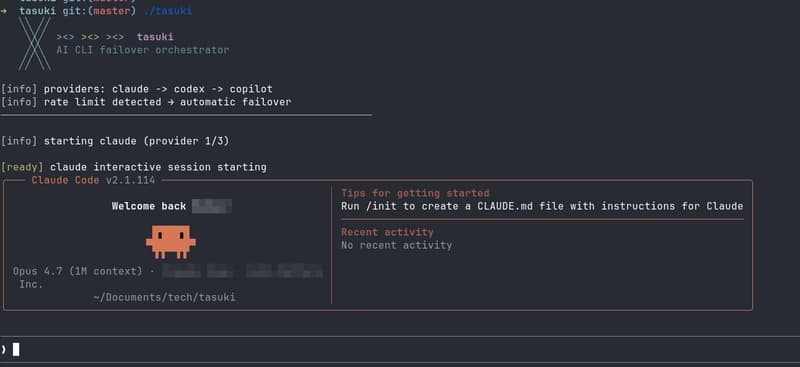

Built tasuki — an AI CLI Orchestrator that Seamlessly Hands Off Between Tools

Dev.to

I built a GNOME extension for Codex with local/remote history, live filters, Markdown export, and a read-only MCP server

Reddit r/artificial