Reducing Peak Memory Usage for Modern Multimodal Large Language Model Pipelines

arXiv cs.CV / 4/21/2026


Key Points

- Multimodal large language models store many vision tokens in the key-value (KV) cache during inference, which makes memory consumption a major bottleneck as models scale.

- Prior KV-cache compression approaches often run only after all inputs are processed, leaving the prefill stage with very high peak memory usage.

- The paper argues that MLLMs have structural regularities and representational redundancy that can be leveraged to limit memory growth throughout inference.

- It proposes a sequential, structure-aware input-compression mechanism that compresses the KV cache during the prefill stage to enforce a fixed memory budget.

- Experiments indicate substantial peak memory reduction with only minimal degradation in generative performance, improving the practicality of multimodal inference.
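
To make the contrast between post-hoc compression and compression during prefill concrete, here is a minimal toy sketch in pure Python. It is not the paper's algorithm: the chunked processing, the per-token importance `scores`, the top-k eviction rule, and the function names (`kv_cache_bytes`, `prefill_with_budget`, `prefill_then_compress`) are all hypothetical stand-ins chosen for illustration. The sketch only tracks how many tokens sit in the cache at once, which is what drives peak memory.

```python
from typing import List, Tuple


def kv_cache_bytes(num_tokens: int, num_layers: int, num_kv_heads: int,
                   head_dim: int, bytes_per_elem: int = 2) -> int:
    """Rough KV-cache size: one key and one value vector per token, head, and layer."""
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_elem * num_tokens


def _top_k(cache: List[int], scores: List[float], k: int) -> List[int]:
    """Keep the k highest-scoring token indices, restored to sequence order."""
    best = sorted(cache, key=lambda i: scores[i], reverse=True)[:k]
    return sorted(best)


def prefill_with_budget(scores: List[float], chunk_size: int,
                        budget: int) -> Tuple[List[int], int]:
    """Toy sequential prefill: compress after every chunk (hypothetical rule).

    Returns (kept token indices, peak number of tokens held in the cache).
    """
    cache: List[int] = []
    peak = 0
    for start in range(0, len(scores), chunk_size):
        end = min(start + chunk_size, len(scores))
        cache.extend(range(start, end))        # cache the new chunk's tokens
        peak = max(peak, len(cache))           # peak is measured before eviction
        if len(cache) > budget:
            cache = _top_k(cache, scores, budget)
    return cache, peak


def prefill_then_compress(scores: List[float],
                          budget: int) -> Tuple[List[int], int]:
    """Baseline: cache every token first, compress once at the end."""
    cache = list(range(len(scores)))
    peak = len(cache)                          # the full input is resident at once
    return _top_k(cache, scores, budget), peak


if __name__ == "__main__":
    # Made-up importance scores for 1000 tokens (all distinct).
    scores = [float((i * 37) % 1000) for i in range(1000)]
    kept_seq, peak_seq = prefill_with_budget(scores, chunk_size=100, budget=200)
    kept_post, peak_post = prefill_then_compress(scores, budget=200)
    per_token = kv_cache_bytes(1, num_layers=32, num_kv_heads=8, head_dim=128)
    print("sequential peak tokens:", peak_seq, "bytes:", peak_seq * per_token)
    print("post-hoc peak tokens:  ", peak_post, "bytes:", peak_post * per_token)
```

In this toy setting the sequential variant never holds more than `budget + chunk_size` tokens, while the post-hoc baseline holds the whole input before compressing. With fixed, distinct scores both end up keeping the same tokens; a real model would not behave this cleanly, because token importance depends on attention over what is still in the cache, and the model dimensions passed to `kv_cache_bytes` are assumed values, not figures from the paper.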

Related Articles

Agent Package Manager (APM): A DevOps Guide to Reproducible AI Agents

Dev.to

3 Things I Learned Benchmarking Claude, GPT-4o, and Gemini on Real Dev Work

Dev.to

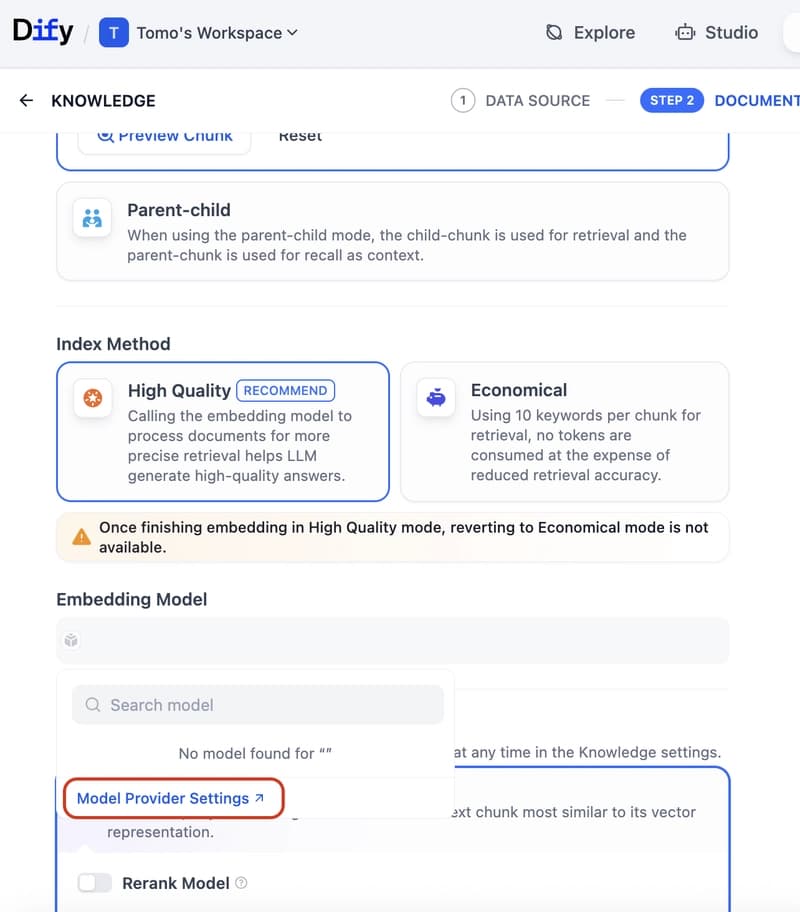

Dify Now Supports IRIS as a Vector Store — Setup Guide

Dev.to

How to build a Claude chatbot with streaming responses in under 50 lines of Node.js

Dev.to

Open Source Contributors Needed for Skillware & Rooms (AI/ML/Python)

Dev.to