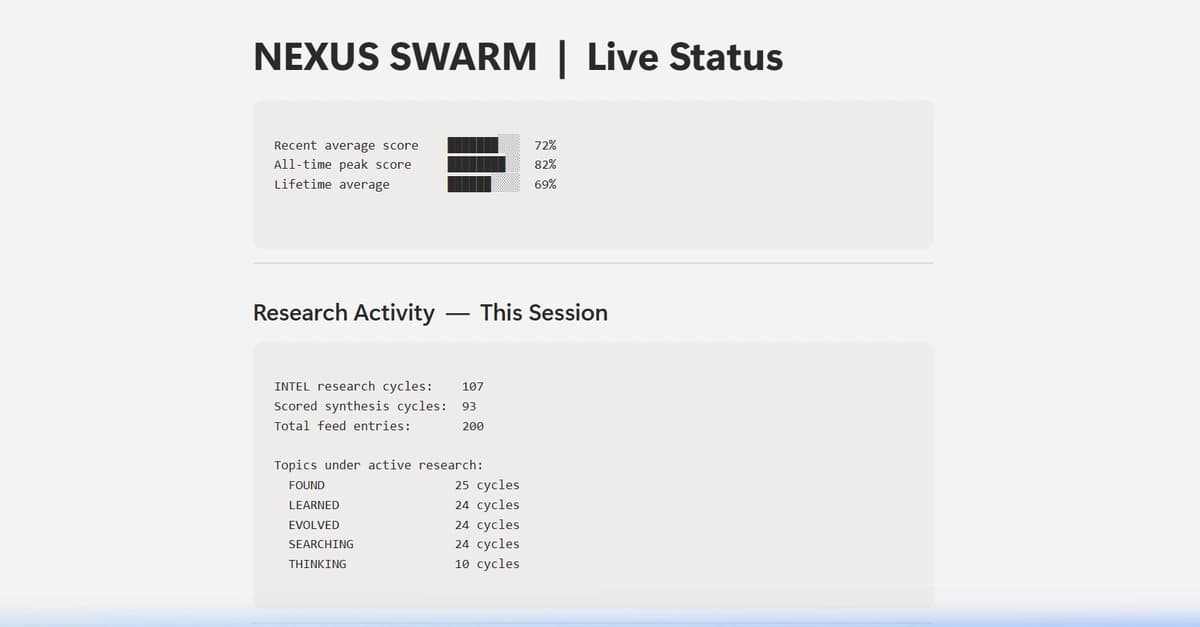

You trained a machine learning model to predict something binary: fraud or not fraud, churn or stay, disease or healthy. Now comes the question every data scientist faces: Is it actually good?

That's where evaluation comes in. And here's the thing — most people do it wrong. They stop at accuracy, declare victory, and deploy. Then the model underperforms in production because they missed something crucial about their data or their use case.

This guide walks you through the full evaluation toolkit: metrics, curves, and the thinking behind each one. By the end, you'll know exactly what to measure and why.

The Confusion Matrix: What It Really Tells You

Before metrics come numbers. Before numbers comes the confusion matrix — a simple 2x2 table that breaks down everything your model did.

- True Positives (TP): Your model said "yes" and was right.

- False Positives (FP): Your model said "yes" but was wrong.

- True Negatives (TN): Your model said "no" and was right.

- False Negatives (FN): Your model said "no" but was wrong.

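That's it. Everything else is math on top of these four numbers. But understanding which mistakes matter for your problem is crucial. In fraud detection, a false positive (flagging a legitimate transaction) is annoying, while a false negative (missing actual fraud) costs money. In medical screening, a false negative is a missed diagnosis, while a false positive means follow-up tests for a healthy patient. In other problems, such as spam filtering, the balance flips and the false positive is the costlier mistake.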

The confusion matrix tells you if your model is making the right kind of mistakes for your use case.
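To make the four cells concrete, here is a minimal sketch in plain Python. It assumes labels are encoded as 1 for positive and 0 for negative; the function name `confusion_counts` and the toy data are made up for illustration.

```python
def confusion_counts(y_true, y_pred):
    """Count TP, FP, TN, FN for binary labels (1 = positive, 0 = negative)."""
    tp = fp = tn = fn = 0
    for actual, predicted in zip(y_true, y_pred):
        if predicted == 1 and actual == 1:
            tp += 1  # said "yes" and was right
        elif predicted == 1 and actual == 0:
            fp += 1  # said "yes" but was wrong
        elif predicted == 0 and actual == 0:
            tn += 1  # said "no" and was right
        else:
            fn += 1  # said "no" but was wrong
    return {"TP": tp, "FP": fp, "TN": tn, "FN": fn}


# Toy data, invented for this example.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]

counts = confusion_counts(y_true, y_pred)
print(counts)  # {'TP': 3, 'FP': 1, 'TN': 3, 'FN': 1}
```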

Accuracy Is a Trap

You've probably heard this before, but it bears repeating: accuracy is misleading when your classes are imbalanced.

Accuracy = (TP + TN) / (TP + TN + FP + FN)

If 99% of your data is actually negative, a model that always predicts "negative" will have 99% accuracy and be completely useless. It never catches the positive class at all.
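You can reproduce the trap in a few lines. This sketch uses a synthetic dataset of 1,000 rows with 10 positives (numbers chosen only to match the 99% example) and a "model" that always predicts negative.

```python
# Synthetic labels: 10 positives, 990 negatives (1 = positive, 0 = negative).
y_true = [1] * 10 + [0] * 990

# A do-nothing "model" that always predicts negative.
y_pred = [0] * len(y_true)

correct = sum(1 for actual, predicted in zip(y_true, y_pred) if actual == predicted)
accuracy = correct / len(y_true)

caught = sum(1 for actual, predicted in zip(y_true, y_pred) if actual == 1 and predicted == 1)
recall = caught / sum(y_true)

print(f"accuracy = {accuracy:.2%}")  # looks great
print(f"recall   = {recall:.2%}")    # catches nothing
```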

This is why you need metrics that focus on specific cells of the confusion matrix.

Precision, Recall, and the F1 Score

These three metrics show up everywhere because they actually tell you something:

- Precision = TP / (TP + FP). Of the cases your model flagged as positive, how many actually were? High precision means few false alarms.

- Recall = TP / (TP + FN). Of the actual positive cases out there, how many did you catch? High recall means you're not missing positives.

- F1 Score = 2 x (Precision x Recall) / (Precision + Recall). The harmonic mean of precision and recall — useful when you care equally about both.
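The three formulas above translate directly into code. This is a small sketch working from confusion-matrix counts; returning 0.0 when a denominator is zero is a convention assumed here, not something the formulas dictate, and the counts in the example are invented.

```python
def precision_recall_f1(tp, fp, fn):
    """Compute precision, recall and F1 from confusion-matrix counts."""
    # Assumed convention: return 0.0 when a metric is undefined (zero denominator).
    precision = tp / (tp + fp) if (tp + fp) > 0 else 0.0
    recall = tp / (tp + fn) if (tp + fn) > 0 else 0.0
    if precision + recall == 0:
        f1 = 0.0
    else:
        f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Invented counts: 30 true positives, 10 false positives, 20 false negatives.
precision, recall, f1 = precision_recall_f1(tp=30, fp=10, fn=20)
print(f"precision={precision:.3f} recall={recall:.3f} f1={f1:.3f}")
```

Note that true negatives never appear in any of the three, which is exactly why these metrics hold up on imbalanced data.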

You can rarely maximize both at once: pushing one up usually pulls the other down. In fraud detection, you'd rather have high recall (catch fraudsters) and accept some false positives (annoy a few customers). In spam filtering, you'd rather have high precision (don't delete legitimate emails) and accept that some spam sneaks through.

Your evaluation should reflect what your use case needs.

ROC Curves and AUC: The Complete Picture

Here's where things get visual. A ROC curve answers this question: As I change my decision threshold, how does my true positive rate change versus my false positive rate?

The x-axis is false positive rate: FP / (FP + TN). Of the negative cases, how many did I wrongly flag?

The y-axis is true positive rate (aka recall): TP / (TP + FN). Of the positive cases, how many did I catch?

You move along the curve by changing your threshold. At one extreme, you predict "yes" for everything, so TPR and FPR are both 1 (the top-right corner). At the other, you predict "no" for everything, so both are 0 (the bottom-left corner).

The area under the curve (AUC) gives you a single number: the probability that, if you pick a random positive case and a random negative case, your model ranks the positive higher. AUC ranges from 0 to 1. Higher is better. 0.5 means random guessing.
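Here is a rough sketch of both views of AUC: the ranking probability described above, and the area under the ROC points. The function names and toy scores are invented for illustration. One assumption to flag: a case is predicted positive when its score is greater than or equal to the threshold, so each distinct score can serve as a threshold.

```python
def roc_points(y_true, scores):
    """Return (FPR, TPR) points, from the strictest threshold to the loosest."""
    positives = sum(y_true)
    negatives = len(y_true) - positives
    points = [(0.0, 0.0)]  # threshold above every score: nothing is flagged
    for threshold in sorted(set(scores), reverse=True):
        # Assumed convention: predict positive when score >= threshold.
        tp = sum(1 for y, s in zip(y_true, scores) if s >= threshold and y == 1)
        fp = sum(1 for y, s in zip(y_true, scores) if s >= threshold and y == 0)
        points.append((fp / negatives, tp / positives))
    return points


def auc_trapezoid(points):
    """Area under the ROC points using the trapezoid rule."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2
    return area


def auc_pairwise(y_true, scores):
    """Fraction of (positive, negative) pairs where the positive scores higher."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a win
    return wins / (len(pos) * len(neg))


# Toy data, invented for this example.
y_true = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]

points = roc_points(y_true, scores)
print(auc_trapezoid(points), auc_pairwise(y_true, scores))
```

On this toy data, 8 of the 9 positive-negative pairs are ranked correctly, and both functions agree on that value. The pairwise version is quadratic in the data size, so it is only meant for building intuition on small samples.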

ROC curves are great for understanding model behavior across thresholds, but they say nothing about what a false positive or a false negative actually costs you, and AUC weighs every threshold equally even though you will only deploy one.

Precision-Recall Curves: When ROC Isn't Enough

Precision-recall (PR) curves show precision on the y-axis and recall on the x-axis. They're more useful than ROC curves when your classes are imbalanced because they focus on the positive class.

In fraud detection (99% legitimate transactions), a PR curve tells you the real story: what's my precision if I want 90% recall? On a ROC curve, that same imbalance gets washed out: the false positive rate divides by the huge number of negatives, so a rate that looks tiny can still mean more false alarms than true catches.

Use PR curves when one class is rare and matters more.
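The "what's my precision at a given recall?" question can be answered by walking the PR points. A minimal sketch, with invented function names and toy data, again assuming a case is flagged when its score is greater than or equal to the threshold:

```python
def pr_points(y_true, scores):
    """Return (threshold, precision, recall) for each distinct score used as a threshold."""
    positives = sum(y_true)
    points = []
    for threshold in sorted(set(scores), reverse=True):
        # Assumed convention: predict positive when score >= threshold.
        tp = sum(1 for y, s in zip(y_true, scores) if s >= threshold and y == 1)
        fp = sum(1 for y, s in zip(y_true, scores) if s >= threshold and y == 0)
        # tp + fp >= 1 here because the threshold is itself one of the scores.
        points.append((threshold, tp / (tp + fp), tp / positives))
    return points


def best_precision_at_recall(points, min_recall):
    """Highest precision (and its threshold) among points reaching min_recall."""
    eligible = [(precision, threshold) for threshold, precision, recall in points
                if recall >= min_recall]
    return max(eligible) if eligible else None


# Toy data, invented for this example.
y_true = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]

points = pr_points(y_true, scores)
result = best_precision_at_recall(points, min_recall=0.9)
print(result)  # (precision, threshold)
```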

Threshold Optimization: Making Real-World Tradeoffs

Your model outputs probabilities between 0 and 1. By default, you predict "positive" if probability > 0.5. But 0.5 is arbitrary. It's often the wrong threshold for your problem.

If your false positives are cheap and false negatives are expensive, lower the threshold, say to 0.3. You'll catch more positives (higher recall) but raise more false alarms (lower precision). If false positives are expensive, raise it, say to 0.7: you'll get fewer false alarms and usually better precision, at the cost of missing more positives (lower recall).

Finding the optimal threshold means sweeping different values and picking the one that minimizes your real-world cost. That's where threshold analysis becomes critical.
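A threshold sweep can be sketched in a few lines. The per-error costs, candidate thresholds, function names, and toy data below are all assumptions for illustration; in practice you would plug in your own cost estimates and sweep on held-out validation data.

```python
def total_cost(y_true, scores, threshold, fp_cost, fn_cost):
    """Total cost of errors when predicting positive for score > threshold."""
    fp = sum(1 for y, s in zip(y_true, scores) if s > threshold and y == 0)
    fn = sum(1 for y, s in zip(y_true, scores) if s <= threshold and y == 1)
    return fp * fp_cost + fn * fn_cost


def best_threshold(y_true, scores, fp_cost, fn_cost, candidates):
    """Candidate threshold with the lowest total cost (first one wins on ties)."""
    return min(candidates,
               key=lambda t: total_cost(y_true, scores, t, fp_cost, fn_cost))


# Toy data, invented for this example.
y_true = [1, 1, 0, 1, 0, 0, 1, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.32, 0.3, 0.1]

# Candidate thresholds 0.05, 0.15, ..., 0.95.
candidates = [(2 * k + 1) / 20 for k in range(10)]

# Missing a positive is 10x worse than a false alarm: a low threshold wins.
fn_expensive = best_threshold(y_true, scores, fp_cost=1, fn_cost=10, candidates=candidates)

# A false alarm is 10x worse than a miss: a high threshold wins.
fp_expensive = best_threshold(y_true, scores, fp_cost=10, fn_cost=1, candidates=candidates)

print(fn_expensive, fp_expensive)
```

The same model ends up with very different operating points depending only on the cost ratio, which is the whole argument for not accepting 0.5 by default.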

Putting It Into Practice: A Walkthrough

Let's say you've built a churn prediction model. You have 1,000 historical customers: 150 churned, 850 stayed. You run your trained model and get a probability for each customer.

- Calculate the confusion matrix at your current threshold (0.5). How many did you catch? How many false alarms?

- Check your metrics: What's your precision? Recall? F1? Do they match your business goal?

- Plot your ROC curve. Does your model look better than random?

- Consider threshold changes. If retention offers are expensive (false positives cost you), raise the threshold. If losing a customer is what hurts (false negatives cost you), lower it.

- Check calibration. If your model says "70% probability," is it actually 70% in reality? Or is it overconfident?
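The calibration check in the last step can be approximated by grouping predictions into probability bins and comparing the average predicted probability with the observed positive rate in each bin. This is a simplified sketch: equal-width bins, the bin count, the function name, and the toy data are all assumptions.

```python
def calibration_table(y_true, scores, n_bins=5):
    """Rows of (bin index, count, mean predicted probability, observed positive rate)."""
    bins = [[] for _ in range(n_bins)]
    for y, s in zip(y_true, scores):
        # Equal-width bins over [0, 1]; a score of exactly 1.0 goes in the last bin.
        index = min(int(s * n_bins), n_bins - 1)
        bins[index].append((s, y))

    rows = []
    for index, members in enumerate(bins):
        if not members:
            continue  # skip empty bins rather than report a meaningless rate
        mean_score = sum(s for s, _ in members) / len(members)
        observed = sum(y for _, y in members) / len(members)
        rows.append((index, len(members), mean_score, observed))
    return rows


# Toy data, invented for this example.
scores = [0.1, 0.15, 0.3, 0.35, 0.7, 0.7, 0.75, 0.9, 0.95, 0.9]
y_true = [0, 0, 0, 1, 1, 1, 0, 1, 1, 1]

rows = calibration_table(y_true, scores)
for index, count, mean_score, observed in rows:
    print(f"bin {index}: n={count} predicted={mean_score:.2f} observed={observed:.2f}")
```

In a well-calibrated model the predicted and observed columns track each other. Large gaps in the high-probability bins are the overconfidence the step above warns about, though with only a handful of cases per bin the observed rates are too noisy to read much into.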

This is evaluation. Not a number, but a process of understanding your model's strengths and failures.

Try It Yourself

The easiest way to experiment with these metrics is to try them on your own data. EvalBench is a free tool that runs entirely in your browser — upload a CSV with your predictions and ground truth, and you'll get all of these metrics, curves, and visualizations instantly. No signup, no data upload to the cloud. Everything stays on your machine.

Grab a prediction file you've built and spend 10 minutes playing with thresholds, reading the confusion matrix, and watching the curves shift. That hands-on understanding is worth more than any explanation.

Ready to evaluate your model? Try EvalBench free