**Summary:** In LLM inference on modern GPUs (like the NVIDIA H100), the bottleneck is memory bandwidth, not computational speed. The time it takes to move model weights from the GPU's comparatively slow main memory (HBM) to its processing cores limits how fast tokens can be generated.

**The Solution: Unweight**

Cloudflare developed Unweight, a **lossless** compression system that shrinks model weights by 15–22% (saving ~3 GB of VRAM on an 8B parameter model) while preserving bit-exact outputs, all without needing specialized hardware.

**How It Works**

* **Exponent Compression:** Standard model weights are stored as 16-bit "brain floats" (BF16), which consist of a 1-bit sign, an 8-bit exponent, and a 7-bit mantissa. While the sign and mantissa are effectively random, the exponent is highly predictable: over 99% of weights in a typical layer use one of just 16 exponent values. Unweight uses Huffman coding to compress only the 8-bit exponent field of each weight, leaving the sign and mantissa untouched.
* **On-Chip Decompression:** Traditional decompression writes the reconstructed data back to main memory, which defeats the bandwidth savings. Unweight instead decompresses the weights directly inside the GPU's much faster on-chip shared memory and feeds the data straight into the tensor cores.
* **Dynamic Execution:** There is no single best way to decompress weights during inference. Depending on the workload, Unweight's autotuner dynamically selects among four execution pipelines, ranging from full decompression to direct processing of compressed indices, and picks the one that suits the specific matrix shapes and batch sizes.

Ultimately, Unweight allows providers to fit more models onto a single GPU, reducing inference costs and increasing overall network efficiency.

Could this also mean better local inference?
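As a rough sanity check on the quoted numbers, the arithmetic can be sketched in a few lines of Python. This assumes every weight is stored as 2-byte BF16 and that the 15–22% reduction applies to the total weight size; `saved_gb` is a hypothetical helper written for illustration, not part of Unweight.

```python
# Back-of-envelope check of the quoted savings.
# Assumption: all weights are BF16 (2 bytes each) and the reduction applies to the whole model.
PARAMS = 8e9            # "8B parameter model" from the summary
BYTES_PER_WEIGHT = 2    # BF16 = 16 bits


def saved_gb(params: float, reduction: float) -> float:
    """Gigabytes (10^9 bytes) saved for a given fractional size reduction."""
    return params * BYTES_PER_WEIGHT * reduction / 1e9


low = saved_gb(PARAMS, 0.15)
high = saved_gb(PARAMS, 0.22)
print(f"{low:.1f} to {high:.1f} GB saved")
```

Under these assumptions the range brackets the "~3 GB" figure. Note also that the exponent is 8 of the 16 bits in a BF16 value, so a scheme that compresses only the exponent can never shrink a tensor by more than 50%.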

Unweight: how we compressed an LLM 22% without sacrificing quality

Reddit r/LocalLLaMA / 4/19/2026

💬 Opinion · Developer Stack & Infrastructure · Tools & Practical Usage · Models & Research

Key Points

- Cloudflare’s Unweight tackles the main bottleneck in LLM inference on modern GPUs: memory bandwidth limitations when moving model weights from HBM to compute cores.

- Unweight is a lossless compression approach that reduces model weight size by about 15–22% (saving roughly 3 GB of VRAM on an 8B model) while preserving bit-exact outputs.

- It compresses only the exponent portion of BF16 “brain float” weights using Huffman coding, relying on the fact that exponents are highly predictable: over 99% of weights in a typical layer use one of just 16 exponent values.

- To avoid losing the bandwidth gains, Unweight performs on-chip decompression inside the GPU’s fast shared memory and uses an autotuner to select among multiple execution pipelines based on workload shapes and batch sizes.

- By fitting more models onto a single GPU, Unweight can lower inference costs and improve overall network efficiency, potentially benefiting local deployment as well.
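
The exponent-only Huffman idea described above can be illustrated with a minimal CPU-side sketch. This is not Cloudflare's implementation or file format: the synthetic Gaussian weights, the truncating BF16 conversion, the bit-string encoding, and all function names (`to_bf16_bits`, `split_bf16`, `join_bf16`, `huffman_code`, `compress`, `decompress`) are assumptions made for illustration, and the real system decompresses inside GPU shared memory rather than in Python.

```python
import heapq
import random
import struct
from collections import Counter


def to_bf16_bits(x: float) -> int:
    """Truncate a float to BF16 by keeping the top 16 bits of its float32 encoding."""
    return struct.unpack(">I", struct.pack(">f", x))[0] >> 16


def split_bf16(bits: int) -> tuple[int, int]:
    """Return (exponent, rest): the 8 exponent bits, and sign bit + 7 mantissa bits."""
    exponent = (bits >> 7) & 0xFF
    rest = ((bits >> 15) << 7) | (bits & 0x7F)
    return exponent, rest


def join_bf16(exponent: int, rest: int) -> int:
    """Inverse of split_bf16."""
    return ((rest >> 7) << 15) | (exponent << 7) | (rest & 0x7F)


def huffman_code(freqs: Counter) -> dict[int, str]:
    """Build a prefix code {symbol: bitstring} from symbol frequencies."""
    if len(freqs) == 1:
        return {next(iter(freqs)): "0"}
    # Heap entries: (weight, unique tiebreaker, {symbol: code so far}).
    heap = [(w, i, {s: ""}) for i, (s, w) in enumerate(freqs.items())]
    heapq.heapify(heap)
    tie = len(heap)
    while len(heap) > 1:
        w1, _, c1 = heapq.heappop(heap)
        w2, _, c2 = heapq.heappop(heap)
        merged = {s: "0" + c for s, c in c1.items()}
        merged.update({s: "1" + c for s, c in c2.items()})
        heapq.heappush(heap, (w1 + w2, tie, merged))
        tie += 1
    return heap[0][2]


def compress(weight_bits: list[int]):
    """Huffman-code the exponents; keep sign and mantissa as raw bytes."""
    pairs = [split_bf16(b) for b in weight_bits]
    code = huffman_code(Counter(e for e, _ in pairs))
    bitstream = "".join(code[e] for e, _ in pairs)
    rests = [r for _, r in pairs]
    return code, bitstream, rests


def decompress(code: dict[int, str], bitstream: str, rests: list[int]) -> list[int]:
    """Walk the bitstream, emitting one BF16 value per decoded exponent."""
    decode = {c: s for s, c in code.items()}
    out, cur = [], ""
    for bit in bitstream:
        cur += bit
        if cur in decode:
            out.append(join_bf16(decode[cur], rests[len(out)]))
            cur = ""
    return out


random.seed(0)
# Assumption: small zero-centered Gaussian values stand in for real model weights.
weights = [to_bf16_bits(random.gauss(0.0, 0.02)) for _ in range(20000)]
code, bitstream, rests = compress(weights)
restored = decompress(code, bitstream, rests)
assert restored == weights  # lossless: every 16-bit pattern is recovered exactly

compressed_bits = len(bitstream) + 8 * len(rests)  # code table itself not counted
ratio = compressed_bits / (16 * len(weights))
print(f"distinct exponents: {len(code)}, size ratio: {ratio:.3f}")
```

The round-trip assertion is the point of the sketch: because only the exponent is re-encoded with a prefix code and the other 8 bits are stored verbatim, the reconstructed weights are bit-identical. The ratio printed for synthetic data should not be read as a prediction of the 15–22% figure reported for real models.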

Related Articles

Black Hat USA

AI Business

Black Hat Asia

AI Business

How to Debug AI-Generated Code: A Systematic Approach

Dev.to

"Browser OS" implemented by Qwen 3.6 35B: The best result I ever got from a local model

Reddit r/LocalLLaMA

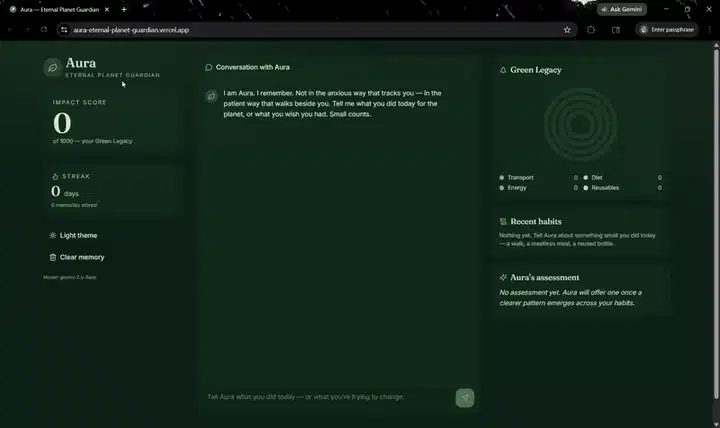

Every climate chatbot is amnesiac. So I built Aura — a stateful climate coach on Backboard + Gemini

Dev.to