Hybrid JIT-CUDA Graph Optimization for Low-Latency Large Language Model Inference

arXiv cs.LG / 4/28/2026


Key Points

- The paper proposes a hybrid inference runtime that combines Just-In-Time (JIT) compilation with CUDA Graph execution to lower GPU kernel launch overhead in low-latency LLM serving.

- It splits transformer inference into static parts, which run via CUDA Graph replay, and dynamic parts, which run on kernels JIT-compiled on the fly, preserving flexibility during autoregressive decoding.

- The framework supports asynchronous graph capture and reuse across decoding steps, aiming to reduce both latency and variability.

- Experiments on LLaMA-2 7B (single GPU, batch size 1) for 10–500 token prompts show up to a 66.0% reduction in Time-to-First-Token (TTFT) versus TensorRT-LLM, along with improved P99 latency.

- The authors conclude that this hybrid approach is particularly effective for short-sequence, interactive workloads where latency is critical.
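
The static/dynamic split described above can be illustrated with a toy, CPU-only sketch. It is not the paper's runtime and makes no real CUDA calls. `HybridRuntime`, `decode`, `STATIC_SHAPE`, and `STATIC_OPS` are hypothetical names, and compiling one dynamic kernel per sequence length is an assumption made for illustration. The sketch shows only the bookkeeping: a fixed op sequence is captured once and replayed with a single simulated launch, while shape-dependent work goes through a cache of compiled kernels. Asynchronous capture is not modeled.

```python
from typing import Callable, Dict, List, Tuple

Op = Callable[[float], float]


class HybridRuntime:
    """Toy model of hybrid dispatch (hypothetical; no GPU involved)."""

    def __init__(self) -> None:
        self.graphs: Dict[Tuple[int, ...], List[Op]] = {}  # captured static graphs
        self.jit_cache: Dict[int, Op] = {}                 # compiled dynamic kernels
        self.captures = 0   # number of simulated graph captures
        self.compiles = 0   # number of simulated JIT compilations
        self.launches = 0   # number of simulated host-side launches

    def run_static(self, shape_key: Tuple[int, ...], ops: List[Op], x: float) -> float:
        # Capture the op sequence the first time this shape is seen.
        if shape_key not in self.graphs:
            self.captures += 1
            self.graphs[shape_key] = list(ops)
        # Replay: the whole recorded sequence counts as one launch.
        self.launches += 1
        for op in self.graphs[shape_key]:
            x = op(x)
        return x

    def run_dynamic(self, seq_len: int, x: float) -> float:
        # Compile a kernel specialized to seq_len only on a cache miss.
        kernel = self.jit_cache.get(seq_len)
        if kernel is None:
            self.compiles += 1
            scale = 1.0 / seq_len  # stand-in for shape-specialized code
            kernel = lambda v, s=scale: v * s
            self.jit_cache[seq_len] = kernel
        self.launches += 1
        return kernel(x)


STATIC_SHAPE = (1, 8)  # hypothetical (batch, hidden) shape that never changes
STATIC_OPS: List[Op] = [lambda v: v + 1.0, lambda v: v * 2.0]


def decode(rt: HybridRuntime, prompt_len: int, new_tokens: int) -> float:
    """Simulate autoregressive decoding: the sequence grows by one each step."""
    x = 0.0
    for step in range(new_tokens):
        x = rt.run_static(STATIC_SHAPE, STATIC_OPS, x)  # fixed-shape part
        x = rt.run_dynamic(prompt_len + step, x)        # length-dependent part
    return x


rt = HybridRuntime()
decode(rt, prompt_len=10, new_tokens=4)
print(rt.captures, rt.compiles, rt.launches)  # prints: 1 4 8
```

Running `decode` a second time with the same arguments adds launches but no new captures or compilations, which is the reuse across decoding steps that the summary attributes to the framework.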

Related Articles

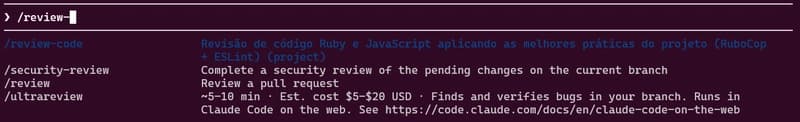

how to use skills from Claude Code A.K.A Claudinho.

Dev.to

Behind the Scenes of a Self-Evolving AI: The Architecture of Tian AI

Dev.to

Meet Tian AI: Your Completely Offline AI Assistant for Android

Dev.to

UK to develop AI hardware plan

Tech.eu

Copilot Cowork | The Control Plane for Long-Running AI Work | A Rahsi Framework™

Dev.to