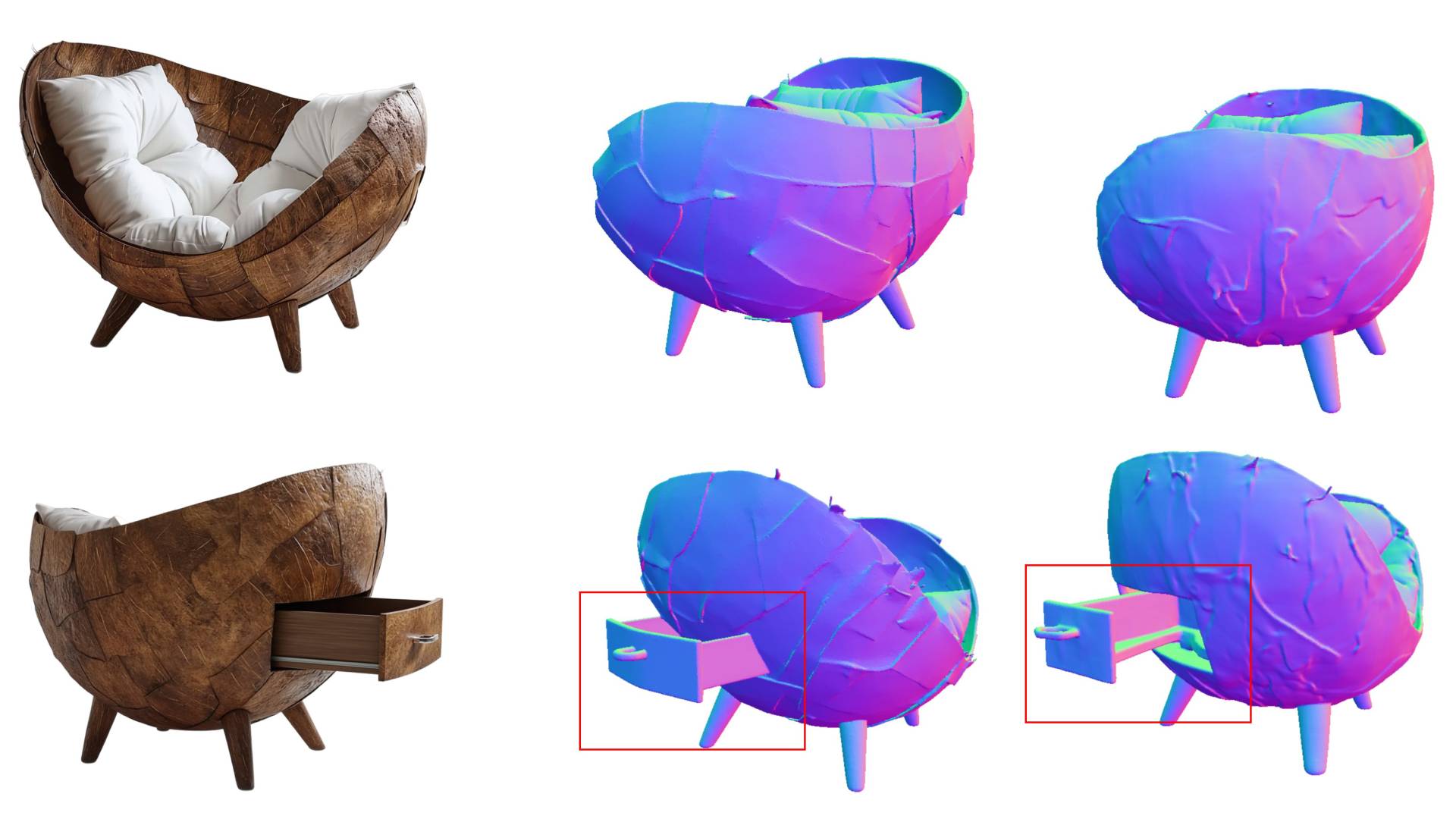

A research team taps into the world knowledge of large language models so that users can control what appears on the back side of generated 3D objects with simple text commands. The approach tackles one of the biggest blind spots in single-image 3D generation: a single photo shows nothing of an object's far side, so the generator has to guess it.

The article "Know3D lets users control the hidden back side of 3D objects with text prompts" appeared first on The Decoder.