SegVGGT: Joint 3D Reconstruction and Instance Segmentation from Multi-View Images

arXiv cs.CV / 3/23/2026

Key Points

- SegVGGT introduces a unified end-to-end framework that jointly performs feed-forward 3D reconstruction and instance segmentation directly from multi-view RGB images.

- It integrates instance identification into the Visual Geometry Grounded Transformer (VGGT) through object queries that interact with multi-level geometric features.

- A Frame-level Attention Distribution Alignment (FADA) strategy guides object queries to attend to instance-relevant frames during training, reducing attention dispersion without increasing inference cost.

- The approach achieves state-of-the-art performance on ScanNetv2 and ScanNet200 and demonstrates strong generalization on ScanNet++.

- Because it needs only RGB images as input, SegVGGT reduces reliance on high-quality point clouds and on decoupled pipelines that run reconstruction and segmentation as separate stages.
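The FADA idea can be illustrated with a small sketch. This is not the paper's implementation: it assumes that a query's token-level attention weights are summed per frame into a frame-level distribution, that the target distribution is each frame's share of the ground-truth instance's visible pixels, and that the two are aligned with a KL divergence. The function names (`frame_attention_distribution`, `target_distribution`, `fada_kl_loss`) are hypothetical.

```python
import math
from typing import Sequence


def frame_attention_distribution(
    attn: Sequence[float], token_frame: Sequence[int], num_frames: int
) -> list[float]:
    """Sum one object query's token-level attention weights into per-frame mass.

    token_frame[i] is the (assumed) index of the frame that token i comes from.
    """
    mass = [0.0] * num_frames
    for weight, frame in zip(attn, token_frame):
        mass[frame] += weight
    total = sum(mass)
    if total <= 0:
        raise ValueError("attention weights must sum to a positive value")
    return [m / total for m in mass]


def target_distribution(visible_pixels: Sequence[int]) -> list[float]:
    """Assumed target: fraction of the instance's visible pixels in each frame."""
    total = sum(visible_pixels)
    if total <= 0:
        raise ValueError("instance must be visible in at least one frame")
    return [v / total for v in visible_pixels]


def fada_kl_loss(
    attn: Sequence[float],
    token_frame: Sequence[int],
    visible_pixels: Sequence[int],
    eps: float = 1e-8,
) -> float:
    """KL(target || attention): penalizes attention spread onto irrelevant frames.

    This is a training-only term, so it adds nothing to inference cost.
    """
    p = frame_attention_distribution(attn, token_frame, len(visible_pixels))
    q = target_distribution(visible_pixels)
    # Frames where the instance is invisible (q == 0) contribute nothing to KL(q || p).
    return sum(qi * math.log((qi + eps) / (pi + eps)) for qi, pi in zip(q, p) if qi > 0)


if __name__ == "__main__":
    attn = [0.4, 0.4, 0.1, 0.1]  # one query's attention over 4 tokens
    token_frame = [0, 0, 1, 1]   # two tokens per frame, two frames
    print(fada_kl_loss(attn, token_frame, [80, 20]))  # attention matches visibility
    print(fada_kl_loss(attn, token_frame, [0, 100]))  # attention mostly on the wrong frame
```

In a real model these quantities would be batched tensors and the loss would be summed over the object queries matched to ground-truth instances; the sketch keeps everything in plain Python to show only the alignment logic.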

Related Articles

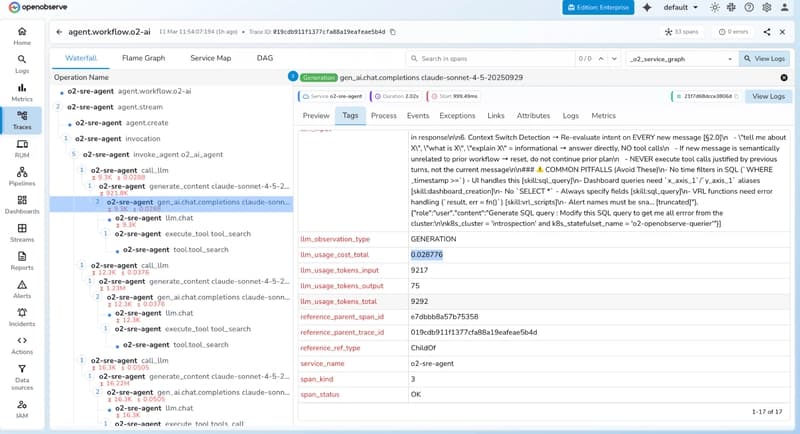

Best Open Source LLM Observability Tools in 2026: Complete Guide

Dev.to

This startup wants to change how mathematicians do math

MIT Technology Review

Karpathy's Autoresearch: Improving Agentic Coding Skills

Dev.to

[D] Any other PhD students feel underprepared and that the bar is too low?

Reddit r/MachineLearning

Survey on Generative AI value and Adoption

Reddit r/artificial