Qwen3.5-27B on RTX 5090 served via vLLM @ 77 tps

Reddit r/LocalLLaMA / 4/21/2026

💬 Opinion | Developer Stack & Infrastructure | Tools & Practical Usage | Models & Research

Key Points

- A Reddit user reports running Qwen3.5-27B locally on an RTX 5090 with vLLM, reaching roughly 77 tokens per second (tps) with a 218k-token context window.

- They note that full 256k context window support was not achievable on vLLM 0.19 with their setup, while vLLM 0.17 worked but delivered lower tps due to fewer optimizations.

- The setup relies on specific guidance from a Hugging Face model card plus a critical vLLM patch to fix KV cache size calculations (ref. vLLM PR #36325).

- The provided vLLM serving configuration uses the FlashInfer attention backend, an FP8 KV cache dtype, automatic tool choice, prefix caching, and ModelOpt quantization. It allows up to 2 concurrent sequences, with each session expected to slow down when both are active.

- The user also cautions that one model variant they tested did not work well, and recommends a particular Qwen3.5-27B Text NVFP4 MTP checkpoint, with the tradeoff that it lacks image processing.
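
The serving flags listed above can be sketched as a small Python helper that assembles a `vllm serve` command line. This is an illustrative reconstruction, not the poster's actual command. The checkpoint name is a placeholder because the summary does not give it, `218_000` is an assumed reading of "218k", and the helper name `build_vllm_serve_command` is hypothetical. The `--attention-backend` spelling is also an assumption: some vLLM releases select the backend through the `VLLM_ATTENTION_BACKEND` environment variable instead. vLLM normally also expects a `--tool-call-parser` alongside `--enable-auto-tool-choice`; the summary does not name one, so it is left out here.

```python
import shlex

# Placeholder: the summary does not state the exact checkpoint identifier.
MODEL = "<Qwen3.5-27B Text NVFP4 MTP checkpoint>"


def build_vllm_serve_command(model, max_model_len=218_000, max_num_seqs=2):
    """Assemble a `vllm serve` argument list from the flags the summary mentions.

    max_model_len is an assumed numeric reading of the reported "218k" context.
    """
    return [
        "vllm", "serve", model,
        "--quantization", "modelopt",          # quantization via ModelOpt
        "--kv-cache-dtype", "fp8",             # FP8 KV cache
        # Assumption: flag spelling varies by vLLM version; older releases
        # use the VLLM_ATTENTION_BACKEND environment variable instead.
        "--attention-backend", "FLASHINFER",
        "--enable-prefix-caching",
        # A --tool-call-parser value is normally required with this flag;
        # the summary does not say which parser was used.
        "--enable-auto-tool-choice",
        "--max-num-seqs", str(max_num_seqs),   # up to 2 concurrent sequences
        "--max-model-len", str(max_model_len),
    ]


if __name__ == "__main__":
    # Print a shell-quoted command for inspection; nothing is executed.
    print(shlex.join(build_vllm_serve_command(MODEL)))
```

Building the command as a list keeps each flag and value separate, so the same list can be passed to `subprocess.run` without shell quoting problems once the placeholder and the version-specific flags have been checked against the installed vLLM release.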

Continue reading this article on the original site.

Read original →Related Articles

Black Hat USA

AI Business

RFE‑Core2 — Current Understanding (June 9th 2026) [R]

Reddit r/MachineLearning

What Is Vibe Coding? Why Are Millions of Developers Using It?

Dev.to

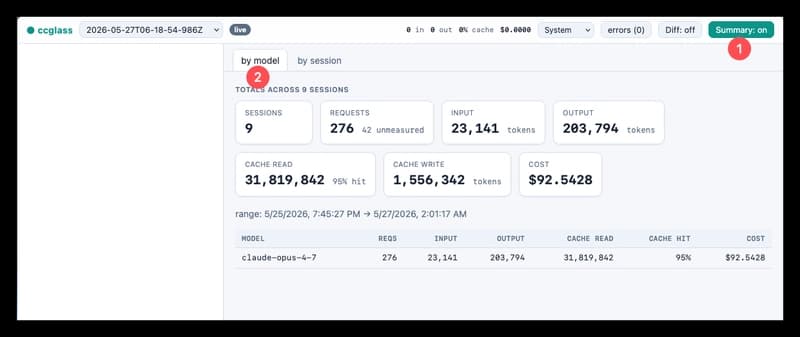

Make Your AI Coding Agent Transparent - See What It Actually Sends to the Model

Dev.to

Free AI Tools for Developers: No API Key, No Account, Just Results

Dev.to