Uncertainty-Aware Foundation Models for Clinical Data

arXiv cs.LG / 4/7/2026

Opinion | Ideas & Deep Analysis | Models & Research

Key Points

- The paper proposes an uncertainty-aware framework for clinical foundation models that treats each patient as a distribution over latent physiologic states rather than a single deterministic embedding.

- It learns set-valued representations and enforces consistency across incomplete, irregular, and modality-dependent clinical observations to capture what is reliably inferable while explicitly encoding epistemic uncertainty.

- The approach combines multimodal encoders with scalable self-supervised objectives, including reconstruction, contrastive alignment, and distributional regularization.

- Experiments across multiple clinical tasks show higher predictive performance, greater robustness to missing data, and better-calibrated uncertainty estimates than strong baselines.

- The authors argue that explicitly modeling what is not observed (uncertainty) is an important inductive bias for healthcare foundation models trained on heterogeneous clinical data.
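The idea of representing a patient as a distribution that widens when observations are missing can be illustrated with a small sketch. The summary does not say how the paper fuses modalities or parameterizes its set-valued representations, so everything here is an assumption made for illustration: each modality encoder is assumed to emit a diagonal Gaussian over the latent state, fusion uses a standard product-of-experts rule with a zero-mean prior, and the names `fuse_observed`, `labs`, and `vitals` are hypothetical.

```python
from typing import Dict, List, Optional, Tuple

# (mean, variance) per latent dimension; a diagonal Gaussian is an assumed
# stand-in for the paper's distributional patient representation.
Gaussian = Tuple[List[float], List[float]]


def fuse_observed(
    modality_posteriors: Dict[str, Optional[Gaussian]],
    latent_dim: int,
    prior_var: float = 1.0,
) -> Gaussian:
    """Product-of-experts fusion over the modalities that were observed.

    A value of None marks a missing modality, which is skipped.
    """
    # Zero-mean prior expert: keeps the result defined when nothing is observed.
    precision = [1.0 / prior_var] * latent_dim
    weighted_mean = [0.0] * latent_dim

    for posterior in modality_posteriors.values():
        if posterior is None:
            continue  # missing modality contributes no evidence
        mean, var = posterior
        for d in range(latent_dim):
            precision[d] += 1.0 / var[d]
            weighted_mean[d] += mean[d] / var[d]

    fused_var = [1.0 / p for p in precision]
    fused_mean = [m * v for m, v in zip(weighted_mean, fused_var)]
    return fused_mean, fused_var


# Hypothetical encoder outputs for one patient (2 latent dimensions).
labs: Gaussian = ([1.0, 0.0], [0.5, 0.5])
vitals: Gaussian = ([0.0, 2.0], [0.25, 1.0])

full = fuse_observed({"labs": labs, "vitals": vitals}, latent_dim=2)
partial = fuse_observed({"labs": labs, "vitals": None}, latent_dim=2)
nothing = fuse_observed({"labs": None, "vitals": None}, latent_dim=2)
```

In this sketch the fused variance is smallest when both modalities are present, larger when `vitals` is missing, and equal to the prior variance when nothing is observed, so downstream predictors can see how much of the latent state is actually supported by data. Whether the paper uses this fusion rule is not stated in the summary.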

Related Articles

Research with ChatGPT

Dev.to

Silicon Valley is quietly running on Chinese open source models and almost nobody is talking about it

Reddit r/LocalLLaMA

Why AI Product Quality Is Now an Evaluation Pipeline Problem, Not a Model Problem

Dev.to

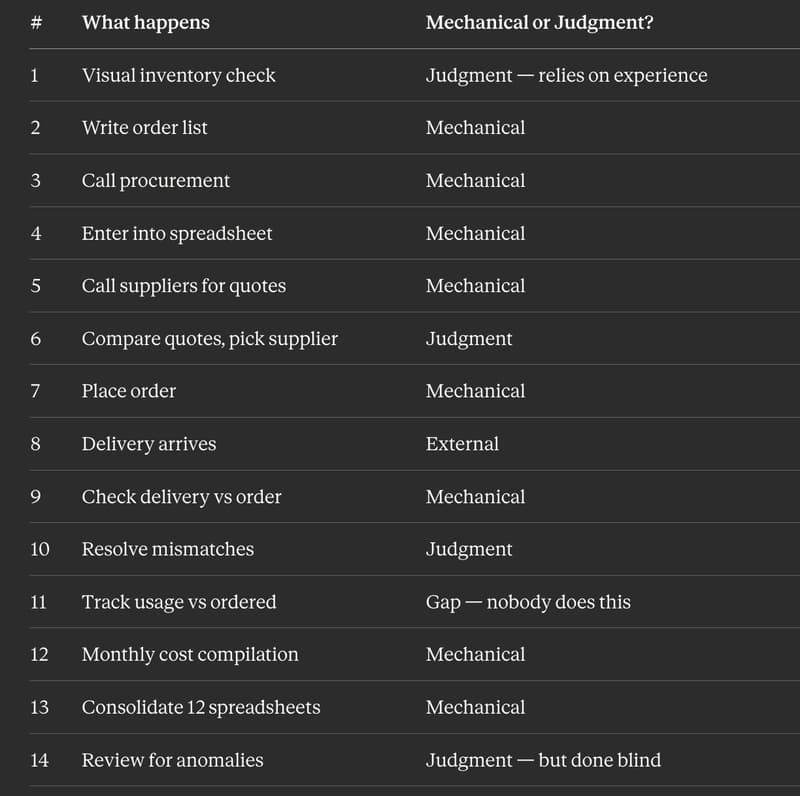

I Replaced 12 Kitchen Managers Guessing "How Much Chicken Do We Need" With 3 ML Models. Here's the Entire Architecture.

Dev.to

AI Model Router API - REST + MCP, Free Tier

Dev.to