I've been working on an alternative to attention-based sequence modeling that I'm calling Geometric Flow Networks (GFN). The core idea: instead of computing statistical correlations over a sequence, treat computation as a particle flowing through a geometric manifold, where inputs act as perturbations that curve the trajectory without replacing the state. This gives three theoretical properties: O(1) state memory regardless of context length (no KV-cache), an inductive bias toward learning structural invariants rather than statistical patterns, and deterministic failure modes that are geometrically traceable rather than stochastic.
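To make the "particle flowing through a manifold" picture concrete, here is a minimal toy sketch. It is not the GFN code, and it is not how the repository implements any of this. The recurrent state is a position `x` on a d-dimensional torus plus a velocity `v`. Each input token only adds a force term, and one velocity-Verlet (leapfrog) step advances the state. The pendulum-style potential, the `embed` lookup, `dt`, and the dimension are all hypothetical choices for illustration. The point is only that the state stays at `2 * dim` floats however many tokens are processed.

```python
import math
import random

TWO_PI = 2.0 * math.pi


def accel(x, force):
    # Hypothetical dynamics: pendulum-style potential U(x) = sum(1 - cos x_i),
    # which is periodic and therefore well defined on the torus, plus the
    # input-dependent force. a_i = -dU/dx_i + force_i = -sin(x_i) + force_i.
    return [-math.sin(xi) + fi for xi, fi in zip(x, force)]


def step(x, v, force, dt=0.1):
    """One velocity-Verlet (leapfrog) step; the input only adds a force term."""
    a = accel(x, force)
    v_half = [vi + 0.5 * dt * ai for vi, ai in zip(v, a)]
    # Drift, then wrap each coordinate modulo 2*pi: the position lives on a torus.
    x_new = [(xi + dt * vh) % TWO_PI for xi, vh in zip(x, v_half)]
    a_new = accel(x_new, force)
    v_new = [vh + 0.5 * dt * ai for vh, ai in zip(v_half, a_new)]
    return x_new, v_new


def embed(token, dim):
    # Hypothetical input embedding: a fixed pseudo-random force vector per token id.
    rng = random.Random(token)
    return [rng.uniform(-1.0, 1.0) for _ in range(dim)]


def run(tokens, dim=4):
    # The entire recurrent state is (x, v): 2 * dim floats, independent of len(tokens).
    x, v = [0.0] * dim, [0.0] * dim
    forces = {}
    for t in tokens:
        if t not in forces:
            forces[t] = embed(t, dim)
        x, v = step(x, v, forces[t])
    return x, v


rng = random.Random(0)
tokens = [rng.randrange(10) for _ in range(10_000)]
x, v = run(tokens)
print(len(x) + len(v))  # 8 floats of state, whatever len(tokens) is
```

Nothing is appended per token, so there is no cache to grow. In this toy, an input changes where the trajectory goes next rather than overwriting the state.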

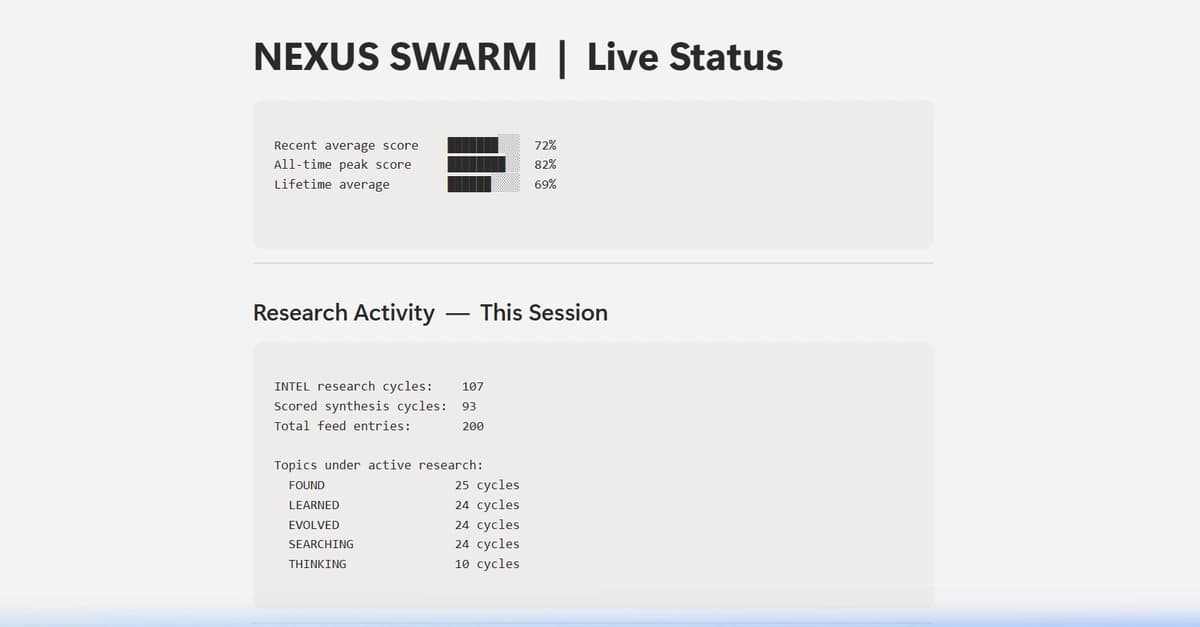

The result I can't explain away statistically:

A Geodesic State Space Model (G-SSM) with 3,164 parameters, trained on cumulative XOR sequences of length L=20, achieves 100% accuracy on sequences of length L=1,000,000 after fewer than 200 training steps. This isn't interpolation: the model learned the toroidal symmetry of parity conservation, not statistical patterns.
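For intuition on why parity is naturally a toroidal invariant, here is a hand-built construction. It is not the trained G-SSM and is not taken from the repository, and the function names are hypothetical. Cumulative XOR is a rotation by π on a circle for every 1-bit, and the running parity is the sign of cos θ.

```python
import math
import random


def parity_by_rotation(bits):
    """Cumulative XOR as a rotation on the unit circle (a 1-torus).

    Each 1-bit rotates the phase by pi and each 0-bit leaves it unchanged,
    so theta only ever takes the values 0.0 or pi. Parity is read out as
    cos(theta) < 0.
    """
    theta = 0.0
    out = []
    for b in bits:
        theta = (theta + math.pi * b) % (2.0 * math.pi)
        out.append(1 if math.cos(theta) < 0 else 0)
    return out


def parity_reference(bits):
    """Plain running XOR, for comparison."""
    acc, out = 0, []
    for b in bits:
        acc ^= b
        out.append(acc)
    return out


rng = random.Random(0)
for length in (20, 1_000_000):
    bits = [rng.randrange(2) for _ in range(length)]
    assert parity_by_rotation(bits) == parity_reference(bits)
```

Every step applies the same rotation, and the phase never drifts off the two points {0, π}. As a result, nothing in the update depends on L, and the readout is exact at L=20 and at L=1,000,000 alike. That is the kind of length-independent structure the G-SSM result suggests the model found.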

Similarly, a Multi-Needle-in-a-Haystack model with 8,109 parameters, trained with K=2 needles at L=64, maintains 100% accuracy and a 0% false positive rate up to L=32,000. With K=3 needles, it fires on the second needle: a deterministic, traceable failure consistent with the geometry it learned, not a stochastic one. Although this model has not been formally tested beyond L=32,000, the same toroidal invariant structure suggests it should, in theory, extrapolate beyond L=1,000,000 as well.
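Here is one hypothetical way to read the K=3 failure geometrically. This is an illustration only, not an analysis of the trained model's internals. Suppose training with K=2 led the model to advance a phase by 2π/K per needle and to fire when the phase completes a full turn. Haystack tokens then never move the phase, so there are no false positives at any L. A third needle also cannot be counted, because the turn already closed at the second one. `fire_positions` and `k_train` are hypothetical names.

```python
import math


def fire_positions(seq, needle, k_train=2):
    """Hypothetical phase-counter detector (illustration only).

    Each needle advances a phase by 2*pi / k_train; all other tokens leave it
    untouched. The detector fires when the phase completes a full turn.
    """
    step = 2.0 * math.pi / k_train
    theta = 0.0
    fired = []
    for i, tok in enumerate(seq):
        if tok == needle:
            theta += step
            if math.isclose(theta, 2.0 * math.pi):
                fired.append(i)
                theta = 0.0
    return fired


haystack = [0] * 32_000
for pos in (100, 5_000, 20_000):  # K=3 needles
    haystack[pos] = 1
print(fire_positions(haystack, needle=1))  # [5000]: fires on the second needle
```

Under this reading, the failure is fully predictable from the learned geometry. The detector always fires at the K_train-th needle, regardless of how long the haystack is.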

The Inertial State Network (ISN) realization (a separate architecture under the same paradigm) achieves a character-level perplexity of 2.48 on TinyShakespeare with 363k parameters, while its inference state memory stays strictly constant at 2.00 KB regardless of context length. Honest caveat: the ISN was only trained at L=128, so it loses coherence on longer sequences, and it replaces dashes with periods or commas. These are known limitations tied to training scale rather than to the architecture itself.
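Character-level results on TinyShakespeare are often reported in bits per character rather than perplexity. Because perplexity equals 2 raised to the mean cross-entropy in bits, the conversion is a one-liner. The helper names are mine, for illustration.

```python
import math


def perplexity_to_bpc(ppl):
    """Per-character perplexity to bits per character: bpc = log2(ppl)."""
    return math.log2(ppl)


def perplexity_to_nats(ppl):
    """Per-character perplexity to mean cross-entropy in nats: ln(ppl)."""
    return math.log(ppl)


print(f"{perplexity_to_bpc(2.48):.3f} bits/char")   # 1.310 bits/char
print(f"{perplexity_to_nats(2.48):.3f} nats/char")  # 0.908 nats/char
```

So a perplexity of 2.48 corresponds to roughly 1.31 bits per character.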

All experiments run on a GTX 1650 (4GB VRAM). Code and models are public.

I'd like to engage on three fronts:

- Technical question: Is a physically grounded architecture that deforms its geometric space to learn structural invariants the way forward, or is statistical correlation fundamentally enough? (And to preempt the obvious comparison: G-SSM differs from Mamba/S4 and other first-order SSMs in that it is second-order, with symplectic integration, energy conservation, variable topology (toroidal, Euclidean, etc.), and low-rank Christoffel matrices, rather than just a learned gating function.)

- arXiv endorsement in cs.LG: if any researcher in the field finds the Zenodo paper rigorous enough to vouch for it, please let me know.

- If you're interested in contributing to the research or experimenting with the architecture, all code is Apache 2.0 licensed. Feel free to reach out directly.
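On the technical question above, the symplectic-integration point can be illustrated in isolation. The sketch below does not reproduce G-SSM's update. It compares explicit Euler with leapfrog (velocity Verlet) on a textbook harmonic oscillator with H = (p² + q²)/2, and shows why a symplectic second-order integrator keeps energy bounded over long rollouts while a naive one does not.

```python
def euler_step(q, p, dt):
    # Explicit Euler: both updates read the old state; not symplectic.
    return q + dt * p, p - dt * q


def leapfrog_step(q, p, dt):
    # Leapfrog / velocity Verlet: half kick, drift, half kick; symplectic.
    p = p - 0.5 * dt * q
    q = q + dt * p
    p = p - 0.5 * dt * q
    return q, p


def energy(q, p):
    # H = (p^2 + q^2) / 2 for a unit-mass, unit-stiffness oscillator.
    return 0.5 * (p * p + q * q)


def simulate(step, steps=10_000, dt=0.1):
    q, p = 1.0, 0.0  # initial energy is exactly 0.5
    for _ in range(steps):
        q, p = step(q, p, dt)
    return energy(q, p)


print(simulate(leapfrog_step))  # stays bounded close to 0.5
print(simulate(euler_step))     # about 8e42: energy grew by (1 + dt**2) per step
```

For this oscillator, each Euler step multiplies the energy by exactly 1 + dt². After 10,000 steps with dt = 0.1, the energy has therefore grown by a factor of about e^99.5. Leapfrog exactly conserves a nearby "shadow" energy, so its true energy stays within a fraction of a percent of 0.5 indefinitely.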

Paper: https://zenodo.org/records/19141133

Code: https://github.com/DepthMuun/gfn

Models: https://huggingface.co/DepthMuun
