Stochastic KV Routing: Enabling Adaptive Depth-Wise Cache Sharing

Apple Machine Learning Journal / 5/5/2026


Key Points

- The paper addresses the high memory cost of KV caching for transformer language models and how it increases serving costs during autoregressive generation.

- It proposes “Stochastic KV Routing” to enable adaptive sharing of KV cache content across depth (layers), treating the depth dimension as an orthogonal optimization target.

- The method builds on prior findings that keeping a full KV cache for every layer can be redundant, and it aims to stay robust without imposing a rigid per-layer caching policy.

- Compared with approaches that primarily reduce KV caches along the temporal axis (e.g., compression and eviction), the work argues that depth-wise cache sharing can further reduce memory requirements while maintaining performance.

Serving transformer language models with high throughput requires caching Key-Values (KVs) to avoid redundant computation during autoregressive generation. The memory footprint of KV caching is significant and heavily impacts serving costs. This work proposes to lessen these memory requirements. While recent work has largely addressed KV cache reduction via compression and eviction along the temporal axis, we argue that the depth dimension offers an orthogonal and robust avenue for optimization. Although prior research suggests that a full cache for every layer is redundant, implementing…
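To make the memory argument concrete, the following is a minimal back-of-the-envelope sketch of how a KV cache's size scales, and why savings along the temporal axis and the depth axis multiply. The model dimensions, the function name `kv_cache_bytes`, and the simple "every two layers share one cache" scheme are illustrative assumptions only; they are not taken from the paper and do not represent its routing method.

```python
def kv_cache_bytes(cached_layers, num_kv_heads, head_dim, cached_tokens,
                   batch_size=1, bytes_per_elem=2):
    """Approximate KV cache size in bytes.

    The leading factor of 2 accounts for storing both keys and values.
    bytes_per_elem=2 assumes 16-bit storage (e.g. fp16/bf16).
    """
    return (2 * cached_layers * num_kv_heads * head_dim
            * cached_tokens * batch_size * bytes_per_elem)


# Hypothetical model configuration (an assumption, not from the paper).
LAYERS, KV_HEADS, HEAD_DIM, TOKENS = 32, 8, 128, 8192

# Baseline: every layer caches every token.
full = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, TOKENS)

# Temporal-axis reduction: keep only half of the tokens (e.g. via eviction).
temporal = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, TOKENS // 2)

# Depth-axis reduction: assume pairs of layers share one cache,
# so only half as many layers store keys and values.
depth = kv_cache_bytes(LAYERS // 2, KV_HEADS, HEAD_DIM, TOKENS)

# Both reductions applied together.
both = kv_cache_bytes(LAYERS // 2, KV_HEADS, HEAD_DIM, TOKENS // 2)

GIB = 2 ** 30
for name, size in [("full", full), ("temporal", temporal),
                   ("depth", depth), ("both", both)]:
    print(f"{name:>8}: {size / GIB:.2f} GiB")
```

Under these assumed numbers the baseline cache is 1 GiB per sequence, each reduction alone halves it, and applying both leaves a quarter. Because the two factors enter the product independently, a depth-wise saving stacks on top of whatever a temporal method already achieves, which is the sense in which the two axes are orthogonal.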

Continue reading this article on the original site.

Read original →Related Articles

Transform Your Blurry Photos into HD Masterpieces, Instantly!

Dev.to

6 New Moats for AI Agent Infrastructure — Trust Score, Deployment, SLA, Identity, Compliance-as-Code

Dev.to

Google Home’s Gemini AI can handle more complicated requests

The Verge

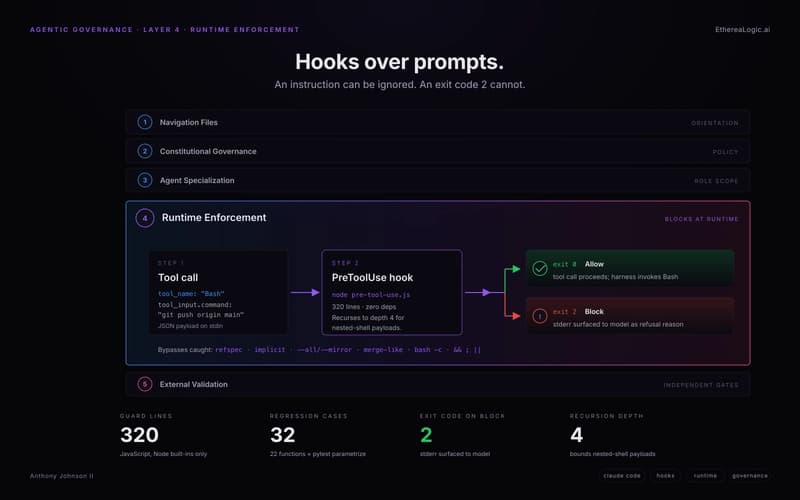

Exit Code 2: How Claude Hooks Turn Agentic Rules Into Runtime Barriers

Dev.to

Qiskit Backend Specifications for OpenQASM and OpenPulse Experiments

Dev.to