Federated Cross-Modal Retrieval with Missing Modalities via Semantic Routing and Adapter Personalization

arXiv cs.CV / 4/28/2026

Key Points

- The paper introduces RCSR, a personalization-friendly federated learning framework for cross-modal retrieval that targets two key real-world challenges: non-IID client data and missing modalities.

- RCSR builds on a frozen CLIP backbone and uses lightweight shared adapters to transfer global cross-modal knowledge while optionally adding client-specific adapters for efficient local personalization.

- It improves unimodal clients’ alignment with global semantics through prototype anchoring, helping them better map into the shared cross-modal space.

- A server-side semantic router assigns aggregation weights based on retrieval consistency, aiming to reduce alignment drift caused by heterogeneous client updates.

- Experiments on MS-COCO, Flickr30K, and other benchmarks indicate that RCSR improves global retrieval accuracy and training stability as well as client-level performance, particularly when clients have incomplete modalities.
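
To make the server-side routing idea concrete, here is a minimal Python sketch of consistency-weighted aggregation. It is an illustration under stated assumptions, not the paper's implementation: the summary does not give the routing rule, so the softmax over per-client scores, the `temperature` parameter, and the function names `router_weights` and `aggregate_adapters` are all hypothetical. Each client is assumed to send a flattened shared-adapter update plus one scalar retrieval-consistency score.

```python
import math
from typing import List, Sequence


def router_weights(consistency: Sequence[float], temperature: float = 0.1) -> List[float]:
    """Turn per-client retrieval-consistency scores into aggregation weights.

    Assumption: a temperature-scaled softmax. The paper may use a different rule.
    """
    if not consistency:
        raise ValueError("need at least one client score")
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [c / temperature for c in consistency]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def aggregate_adapters(updates: Sequence[Sequence[float]],
                       weights: Sequence[float]) -> List[float]:
    """Weighted sum of flattened shared-adapter updates, one per client."""
    if len(updates) != len(weights):
        raise ValueError("one weight per client update is required")
    dim = len(updates[0])
    if any(len(u) != dim for u in updates):
        raise ValueError("all client updates must have the same length")
    return [sum(w * u[i] for w, u in zip(weights, updates)) for i in range(dim)]


# Hypothetical round: three clients, two adapter parameters each.
client_updates = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
client_consistency = [0.8, 0.8, 0.2]  # third client is less consistent

weights = router_weights(client_consistency)
global_update = aggregate_adapters(client_updates, weights)
```

With equal consistency scores the weights become uniform and the rule reduces to a plain unweighted average of client updates. Lowering `temperature` concentrates weight on the most consistent clients, which is one way to limit the influence of updates that drift away from the shared cross-modal space.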

Related Articles

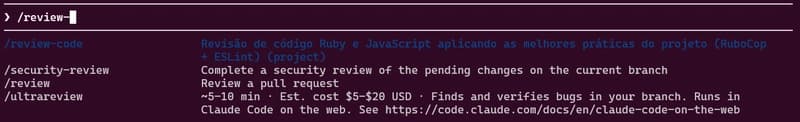

how to use skills from Claude Code A.K.A Claudinho.

Dev.to

Behind the Scenes of a Self-Evolving AI: The Architecture of Tian AI

Dev.to

Meet Tian AI: Your Completely Offline AI Assistant for Android

Dev.to

UK to develop AI hardware plan

Tech.eu

Copilot Cowork | The Control Plane for Long-Running AI Work | A Rahsi Framework™

Dev.to