ECG Biometrics with ArcFace-Inception: External Validation on MIMIC and HEEDB

arXiv cs.LG / 4/7/2026

Key Points

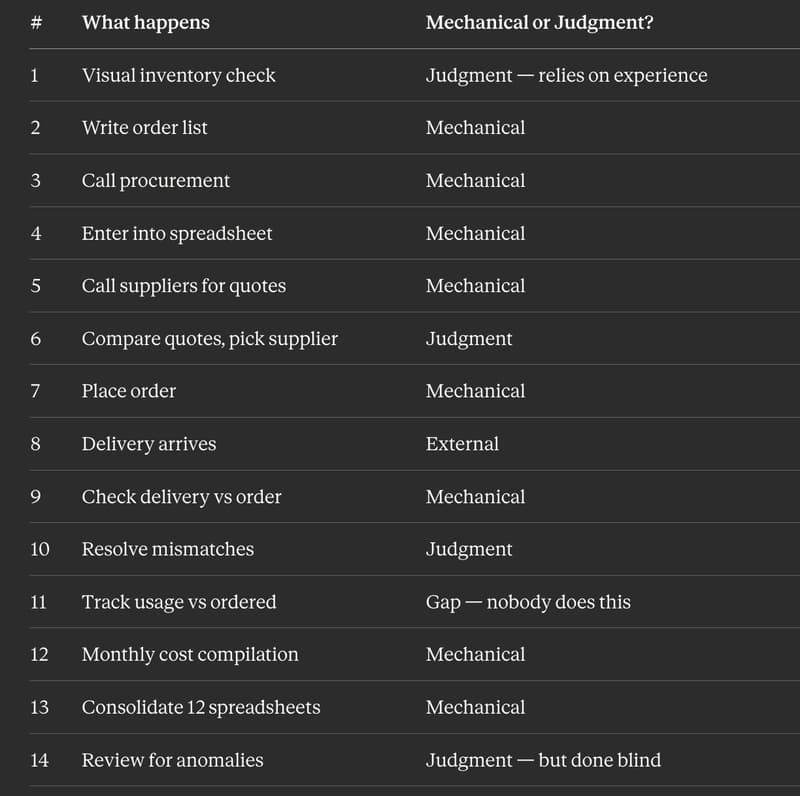

- The paper evaluates ECG biometrics using a 1D Inception-v1 model trained with ArcFace on a large internal clinical dataset (164,440 12-lead ECGs from 53,079 patients) and externally tests on MIMIC-IV-ECG and HEEDB.

- Under a unified closed-set leave-one-out protocol scored with Rank@K and TAR@FAR, the system shows strong identifiability under broadly comparable conditions, reaching Rank@1 of 0.9506 on ASUGI-DB, 0.8291 on MIMIC-GC, and 0.6884 on HEEDB-GC.

- Temporal stress experiments show that performance degrades as the gap in years between examinations grows, even at constant gallery size: Rank@1 falls from 0.7853 to 0.6433 on MIMIC over 1–5 years, and from 0.6864 to 0.5560 on HEEDB.

- Gallery size and domain heterogeneity substantially affect operational quality: HEEDB scale tests show monotonic degradation as the gallery grows, with recovery when more examinations per patient are available.

- Post-hoc reranking improves retrieval on HEEDB-RR, where AS-norm raises Rank@1 to 0.8005 from a 0.7765 baseline, indicating that score processing can partially mitigate domain/scale effects.
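
To make the metrics named above concrete, here is a minimal NumPy sketch of closed-set leave-one-out scoring with Rank@K and TAR@FAR, followed by an AS-norm style score normalization. It is an illustration under stated assumptions, not the paper's implementation: it assumes embeddings are compared by cosine similarity, uses the adaptive symmetric normalization formula common in speaker verification, and every function name, the `cohort` set, and the toy data are hypothetical.

```python
import numpy as np


def l2_normalize(x):
    """Scale each row to unit length so dot products are cosine similarities."""
    return x / np.linalg.norm(x, axis=1, keepdims=True)


def leave_one_out_scores(emb):
    """Score every ECG against all the others; the self-match is excluded."""
    e = l2_normalize(emb)
    scores = e @ e.T
    np.fill_diagonal(scores, -np.inf)  # a probe may never retrieve itself
    return scores


def rank_at_k(scores, labels, k=1):
    """Fraction of probes with a same-patient ECG among the top-k matches."""
    top = np.argsort(-scores, axis=1)[:, :k]
    hits = (labels[top] == labels[:, None]).any(axis=1)
    return float(hits.mean())


def tar_at_far(scores, labels, far=1e-3):
    """True accept rate at the threshold that accepts a fraction `far` of impostor pairs."""
    i, j = np.triu_indices(len(labels), k=1)  # each unordered pair once
    pair_scores = scores[i, j]
    same = labels[i] == labels[j]
    threshold = np.quantile(pair_scores[~same], 1.0 - far)
    return float((pair_scores[same] > threshold).mean())


def as_norm(scores, probe_emb, gallery_emb, cohort_emb, top_n=4):
    """Adaptive symmetric normalization (assumed variant).

    Each raw score is standardized twice, once with the statistics of the
    probe's top_n cohort scores and once with the gallery item's, and the
    two results are averaged. The cohort should hold other identities.
    """
    c = l2_normalize(cohort_emb)

    def top_stats(x):
        top = np.sort(l2_normalize(x) @ c.T, axis=1)[:, -top_n:]
        return top.mean(axis=1), top.std(axis=1) + 1e-8

    mu_p, sd_p = top_stats(probe_emb)
    mu_g, sd_g = top_stats(gallery_emb)
    return 0.5 * ((scores - mu_p[:, None]) / sd_p[:, None]
                  + (scores - mu_g[None, :]) / sd_g[None, :])


# Toy data (made up): 3 patients with 2 ECG embeddings each.
labels = np.array([0, 0, 1, 1, 2, 2])
emb = np.array([[1.0, 0.0, 0.0], [0.9, 0.1, 0.0],
                [0.0, 1.0, 0.0], [0.1, 0.9, 0.0],
                [0.0, 0.0, 1.0], [0.0, 0.1, 0.9]])
cohort = np.random.default_rng(0).normal(size=(8, 3))  # hypothetical cohort

scores = leave_one_out_scores(emb)
rank1 = rank_at_k(scores, labels, k=1)
tar = tar_at_far(scores, labels, far=0.1)
normed = as_norm(scores, emb, emb, cohort, top_n=4)
rank1_normed = rank_at_k(normed, labels, k=1)
```

In a closed-set protocol every probe has at least one other examination from the same patient in the gallery, which is why the toy data gives each patient two embeddings. Comparing `rank1` with `rank1_normed` mirrors the baseline versus AS-norm comparison reported for HEEDB-RR, although the paper's cohort selection and `top_n` setting are not given in this summary.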
