Building Trust in the Skies: A Knowledge-Grounded LLM-based Framework for Aviation Safety

arXiv cs.AI / 4/16/2026

💬 Opinion · Ideas & Deep Analysis · Models & Research

Key Points

- The paper argues that using LLMs for aviation safety decision-making requires stronger trust mechanisms, because standalone LLM outputs can be inaccurate, unverifiable, or hallucinated.

- It proposes an end-to-end framework that combines LLMs with Knowledge Graphs to improve reliability for safety-critical analytics.

- In the first phase, LLMs are used to automatically build and dynamically update an Aviation Safety Knowledge Graph (ASKG) from multimodal sources.

- In the second phase, the framework applies a Retrieval-Augmented Generation (RAG) setup over the curated KG to ground, validate, and explain the LLM’s responses.

- The reported experiments indicate higher accuracy and traceability than LLM-only methods, with better support for complex queries and fewer hallucinations. Future work targets relationship extraction and hybrid retrieval.
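
The two phases described in the key points can be illustrated with a minimal sketch. This is not the paper's implementation: the class `SafetyKG`, the functions `build_grounded_prompt` and `is_supported`, the relation names, and the sample triples are all hypothetical, and simple substring matching stands in for whatever extraction and retrieval the authors actually use. The sketch only shows the general shape of the idea: facts carry provenance, retrieval narrows them to the question, and a claimed answer is checked against the retrieved facts.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Triple:
    subject: str
    relation: str
    obj: str
    source: str  # provenance label (hypothetical identifier, not real data)


class SafetyKG:
    """Toy in-memory graph standing in for the ASKG (assumption, not the paper's store)."""

    def __init__(self):
        self._triples = set()

    def upsert(self, triples):
        # Phase 1 stand-in: merge newly extracted triples; exact duplicates are ignored.
        self._triples.update(triples)

    def retrieve(self, question):
        # Phase 2 stand-in: keep triples whose subject or object appears in the question.
        q = question.lower()
        hits = [t for t in self._triples
                if t.subject.lower() in q or t.obj.lower() in q]
        return sorted(hits, key=lambda t: (t.subject, t.relation, t.obj))


def build_grounded_prompt(question, facts):
    # Number each fact and attach its source so an answer can cite it.
    lines = [f"[{i}] {t.subject} {t.relation} {t.obj} (source: {t.source})"
             for i, t in enumerate(facts, 1)]
    context = "\n".join(lines) if lines else "(no matching facts)"
    return ("Answer using only the numbered facts and cite them by number.\n"
            f"Facts:\n{context}\n"
            f"Question: {question}")


def is_supported(claim, facts):
    # Validation stand-in: a (subject, relation, object) claim must match a retrieved triple.
    return any((t.subject, t.relation, t.obj) == claim for t in facts)


# Invented illustrative data; in the framework an LLM would extract such triples.
kg = SafetyKG()
kg.upsert([
    Triple("bird strike", "can_cause", "engine damage", "example-report-A"),
    Triple("runway incursion", "associated_with", "low visibility", "example-report-B"),
])

question = "What can a bird strike lead to?"
facts = kg.retrieve(question)
prompt = build_grounded_prompt(question, facts)
print(prompt)
```

The prompt string would then be passed to an LLM of choice, and `is_supported` could be applied to each claim parsed from its answer. Keeping the source label on every triple is what makes the answer traceable back to the graph.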

Related Articles

The AI Hype Cycle Is Lying to You About What to Learn

Dev.to

Big Tech firms are accelerating AI investments and integration, while regulators and companies focus on safety and responsible adoption.

Dev.to

Inside NVIDIA’s $2B Marvell Deal: What NVLink Fusion Means for AI Ethernet Fabrics

Dev.to

Automating Your Literature Review: From PDFs to Data with AI

Dev.to

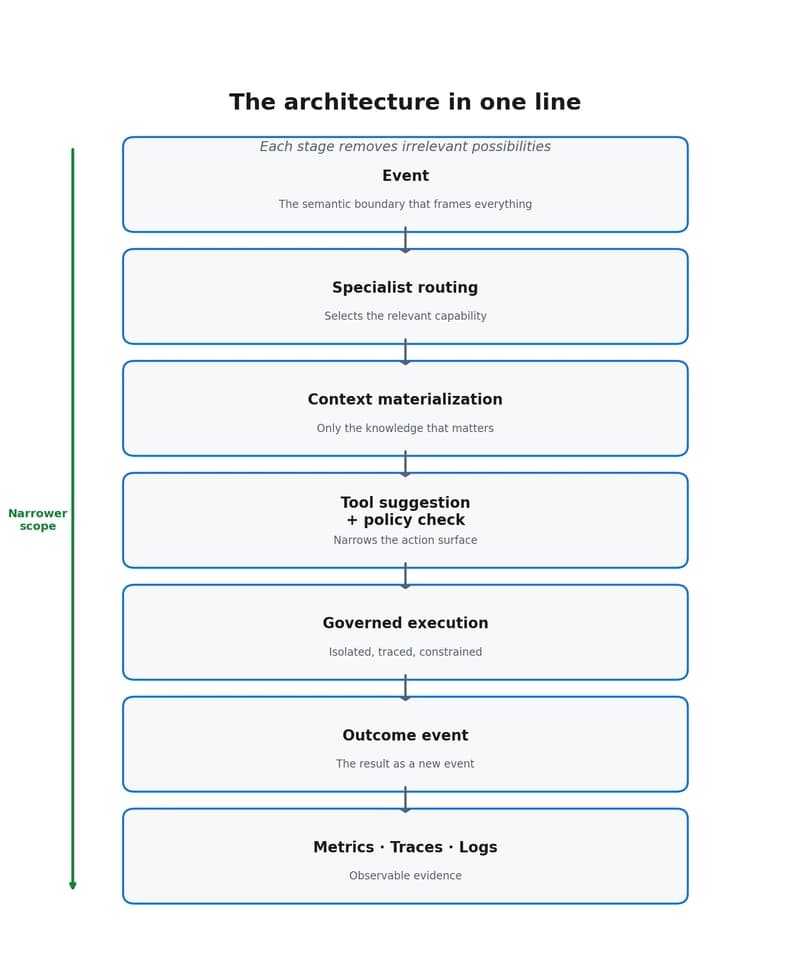

Why event-driven agents reduce scope, cost, and decision dispersion

Dev.to