Sentiment Classification of Gaza War Headlines: A Comparative Analysis of Large Language Models and Arabic Fine-Tuned BERT Models

arXiv cs.CL / 4/13/2026

Key Points

- The paper analyzes how different AI architectures interpret sentiment in conflict-related Arabic news headlines using the 2023 Gaza War as a case study.

- It compares three large language models with six fine-tuned Arabic BERT variants on a corpus of 10,990 headlines, using information-theoretic and distributional metrics rather than a single accuracy benchmark.

- Fine-tuned BERT models (especially MARBERT) show a strong tendency to classify sentiment as neutral, while LLMs consistently amplify negative sentiment.

- The study finds that LLaMA-3.1-8B exhibits a near-total collapse toward negativity in the evaluated setting.

- Frame-conditioned tests indicate GPT-4.1 can adjust sentiment judgments based on narrative frames (humanitarian/legal/security), whereas other LLMs show limited contextual modulation, implying model choice acts as an “interpretive lens.”
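
The summary does not name the exact information-theoretic and distributional metrics the paper uses. The following is a minimal sketch under stated assumptions. It assumes a three-way label set (negative/neutral/positive). It uses Shannon entropy to measure how concentrated a model's outputs are, where a "collapse" toward one label shows up as low entropy. It uses Jensen–Shannon divergence to compare two models' label distributions. It also uses a hypothetical "frame modulation" score, defined as the spread of the negative-label share across narrative frames. The function names and the toy predictions are illustrative only and are not data or results from the paper.

```python
import math
from collections import Counter

# Assumption: a three-way sentiment label set; the paper's exact scheme may differ.
LABELS = ("negative", "neutral", "positive")


def label_distribution(predictions):
    """Turn a list of predicted labels into probabilities ordered as LABELS."""
    counts = Counter(predictions)
    total = len(predictions)
    return [counts.get(label, 0) / total for label in LABELS]


def entropy_bits(dist):
    """Shannon entropy in bits; 0 means every prediction has the same label."""
    return -sum(p * math.log2(p) for p in dist if p > 0)


def kl_bits(p, q):
    """KL divergence D(p || q) in bits, skipping zero-probability terms of p."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def js_divergence_bits(p, q):
    """Jensen-Shannon divergence in bits, symmetric and bounded in [0, 1]."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl_bits(p, m) + 0.5 * kl_bits(q, m)


def frame_modulation(preds_by_frame):
    """Hypothetical score: max minus min share of 'negative' across frames."""
    shares = [label_distribution(p)[0] for p in preds_by_frame.values()]
    return max(shares) - min(shares)


# Toy predictions for illustration only (not from the paper).
bert_like = ["neutral"] * 7 + ["negative"] * 2 + ["positive"] * 1
llm_like = ["negative"] * 9 + ["neutral"] * 1

p = label_distribution(bert_like)
q = label_distribution(llm_like)
print("entropy (neutral-leaning):", round(entropy_bits(p), 3))
print("entropy (negative-leaning):", round(entropy_bits(q), 3))
print("JS divergence:", round(js_divergence_bits(p, q), 3))
```

Entropy alone cannot say which label a model collapses toward, so in a sketch like this it would be read together with the label distribution itself. The Jensen–Shannon divergence is chosen here over plain KL because it stays finite when one model never emits a label that another model does.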

Related Articles

ChatGPT for Nurses: Prompts That Help You Document, Communicate, and Study

Dev.to

I Added a Stopwatch to My AI in 1 LOC Using the Livingrimoire While Corporations Need a Year

Dev.to

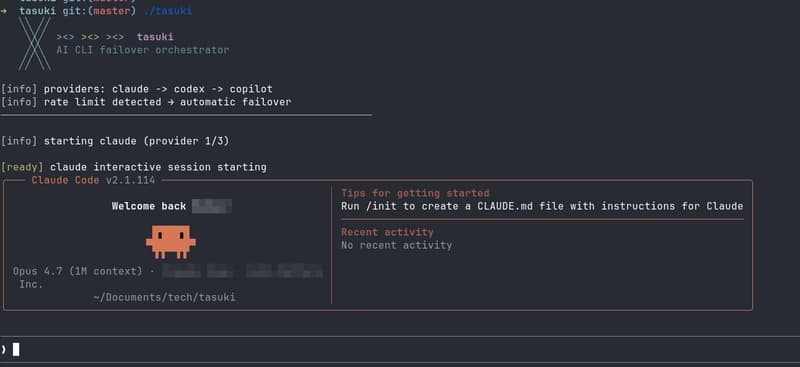

Built tasuki — an AI CLI Orchestrator that Seamlessly Hands Off Between Tools

Dev.to

I built a GNOME extension for Codex with local/remote history, live filters, Markdown export, and a read-only MCP server

Reddit r/artificial

I Built an Open‑ Source OS for AI Agents – And It’s Ready for You

Dev.to