Act2See: Emergent Active Visual Perception for Video Reasoning

arXiv cs.CV / 5/5/2026

📰 NewsIdeas & Deep AnalysisModels & Research

Key Points

- Vision-Language Models (VLMs) often use only static initial frames for video reasoning, which limits their ability to incorporate dynamic evidence as reasoning progresses.

- The proposed Act-to-See (Act2See) framework lets VLMs actively interleave video frames into text-based Chain-of-Thought (CoT), improving visual synthesis and enabling hypothetical/counterfactual reasoning.

- Act2See is trained via supervised fine-tuning (SFT) on high-quality reasoning-trace data generated by a frontier VLM, where the traces include verified active frame retrieval or synthesis steps.

- At inference time, the model dynamically decides when to retrieve existing frames or generate/synthesize new ones to obtain the needed visual evidence.

- Experiments report new state-of-the-art performance on VideoEspresso and ViTIB, and improvements over comparable or larger models on several other video reasoning benchmarks.

Related Articles

The 55.6% problem: why frontier LLMs fail at embedded code

Dev.to

Four CVEs in a week, all the same shape: when agents execute LLM-generated code

Dev.to

Healthcare AI Is Absorbing Institutional Knowledge It Can't Actually Hold

Reddit r/artificial

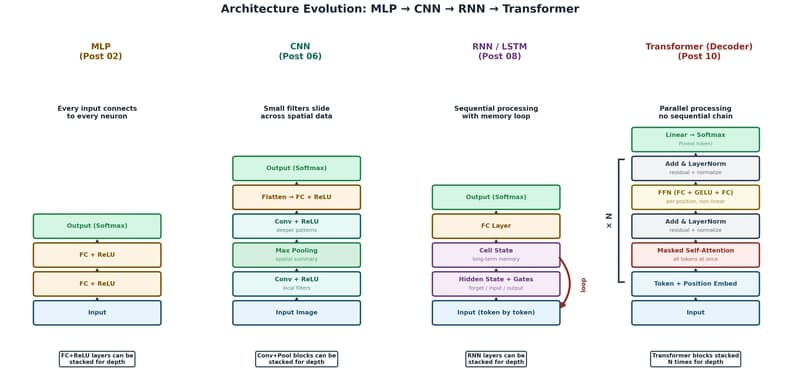

The Transformer: The Architecture Behind Modern AI

Dev.to

Foundational Models Defining a New Era in Vision: A Survey and Outlook

Dev.to