Prompt-Guided Image Editing with Masked Logit Nudging in Visual Autoregressive Models

arXiv cs.CV / 4/17/2026


Key Points

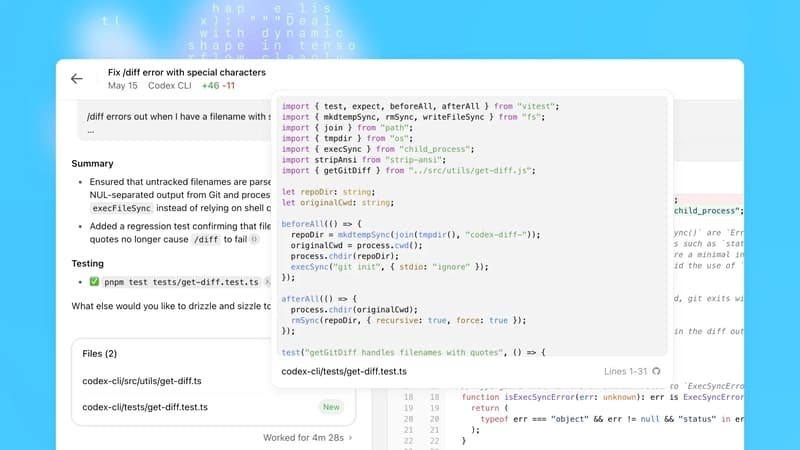

- The paper tackles prompt-guided image editing in visual autoregressive models by modifying a source image to match a target text prompt while preserving regions unrelated to the edit.

- It introduces Masked Logit Nudging, which converts fixed source token encodings into logits and nudges the model’s predictions toward targets along a semantic trajectory derived from source-target prompts.

- Spatial edits are constrained using masks generated via a dedicated masking scheme based on cross-attention differences between the source and edited prompts.

- A refinement step is added to reduce quantization errors and improve reconstruction quality.

- Experiments report state-of-the-art performance on the PIE benchmark at 512px and 1024px resolution, strong reconstruction quality, and faster execution, with results comparable to or better than those of diffusion models. Code is released on GitHub.
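
The summary above gives no formulas, so the following is only a toy sketch of how masked logit nudging could look, not the paper's implementation. Every name in it (`token_to_logits`, `attention_diff_mask`, `nudge_logits`, `confidence`, `threshold`, `strength`) is hypothetical. It also assumes that the "semantic trajectory" can be approximated by the difference between the model's logits under the target prompt and under the source prompt, and that the mask is a simple threshold on the absolute cross-attention difference.

```python
# Toy sketch in pure Python; all names and parameters are illustrative assumptions.

def token_to_logits(token_id, vocab_size, confidence=5.0):
    """Turn a fixed source token into logits that strongly favour that token."""
    return [confidence if v == token_id else 0.0 for v in range(vocab_size)]

def attention_diff_mask(attn_source, attn_target, threshold=0.2):
    """Mark positions whose cross-attention changes between the two prompts."""
    return [1 if abs(t - s) > threshold else 0
            for s, t in zip(attn_source, attn_target)]

def nudge_logits(source_tokens, logits_src, logits_tgt, mask, strength=1.0):
    """Shift source-token logits along (target - source) inside the mask only."""
    vocab_size = len(logits_src[0])
    nudged = []
    for pos, token_id in enumerate(source_tokens):
        base = token_to_logits(token_id, vocab_size)
        if mask[pos]:
            direction = [t - s for s, t in zip(logits_src[pos], logits_tgt[pos])]
            base = [b + strength * d for b, d in zip(base, direction)]
        nudged.append(base)
    return nudged

def argmax(values):
    return max(range(len(values)), key=values.__getitem__)

# Three token positions, vocabulary of four codes (made-up numbers).
source_tokens = [2, 0, 1]
logits_src = [[0, 0, 3, 0], [3, 0, 0, 0], [0, 3, 0, 0]]   # model under source prompt
logits_tgt = [[0, 4, 0, 0], [0, 0, 0, 4], [0, -3, 0, 4]]  # model under target prompt
attn_source = [0.10, 0.15, 0.20]
attn_target = [0.10, 0.10, 0.80]

mask = attention_diff_mask(attn_source, attn_target)
edited = [argmax(l) for l in nudge_logits(source_tokens, logits_src, logits_tgt, mask)]
unmasked = [argmax(l) for l in
            nudge_logits(source_tokens, logits_src, logits_tgt, [1, 1, 1])]

print(mask)      # [0, 0, 1]
print(edited)    # [2, 0, 3]  only the masked position changes
print(unmasked)  # [1, 3, 3]  without the mask every position drifts
```

In this toy setting the mask is what keeps the first two positions identical to the source tokens; nudging everywhere would rewrite regions unrelated to the edit.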

