Blog post:

https://blog.google/innovation-and-ai/technology/developers-tools/multi-token-prediction-gemma-4/

MTP draft models:

https://huggingface.co/google/gemma-4-31B-it-assistant

https://huggingface.co/google/gemma-4-26B-A4B-it-assistant

https://huggingface.co/google/gemma-4-E4B-it-assistant

https://huggingface.co/google/gemma-4-E2B-it-assistant

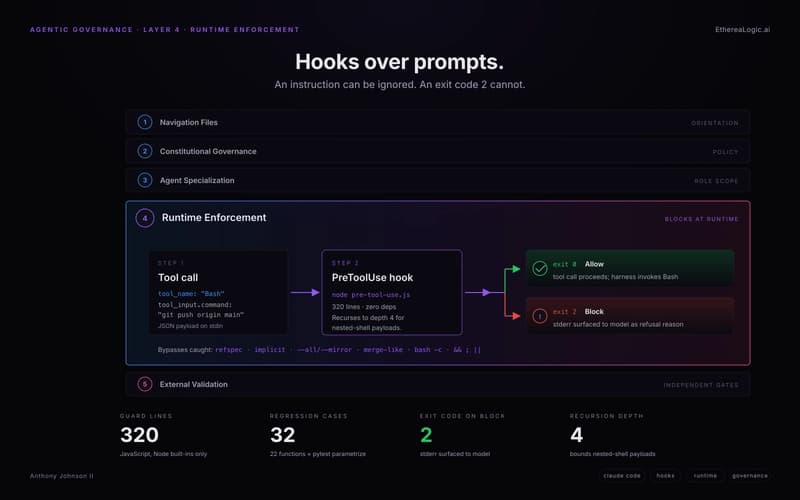

This model card is for the Multi-Token Prediction (MTP) drafters for the Gemma 4 models. MTP is implemented by extending the base model with a smaller, faster draft model. When used in a speculative decoding pipeline, the draft model predicts several tokens ahead, and the target model then verifies those tokens in parallel. This yields significant decoding speedups (up to 2x) while guaranteeing exactly the same output quality as standard generation, which makes these checkpoints well suited to low-latency and on-device applications.
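For anyone unfamiliar with the draft-then-verify loop described above, here is a minimal toy sketch of the greedy variant. It is not the Gemma 4 or Hugging Face implementation: `speculative_decode`, `greedy_decode`, `target_next`, and `draft_next` are hypothetical names, and the "models" are just deterministic functions over integer token ids. A real pipeline would verify all drafted positions in one batched forward pass of the target model and, when sampling, would use a rejection-sampling rule instead of the exact-match check shown here.

```python
from typing import Callable, List

Token = int
NextToken = Callable[[List[Token]], Token]  # context -> greedy next token


def greedy_decode(next_token: NextToken, prompt: List[Token], n: int) -> List[Token]:
    """Plain autoregressive decoding: one model call per generated token."""
    out = list(prompt)
    for _ in range(n):
        out.append(next_token(out))
    return out[len(prompt):]


def speculative_decode(target_next: NextToken, draft_next: NextToken,
                       prompt: List[Token], max_new_tokens: int,
                       k: int = 4) -> List[Token]:
    """Greedy speculative decoding with up to k drafted tokens per round."""
    out = list(prompt)
    goal = len(prompt) + max_new_tokens
    while len(out) < goal:
        # 1. The cheap draft model proposes up to k tokens autoregressively.
        n = min(k, goal - len(out))
        draft: List[Token] = []
        for _ in range(n):
            draft.append(draft_next(out + draft))

        # 2. The target model checks every drafted position. This loop
        #    stands in for a single parallel verification pass.
        accepted: List[Token] = []
        for tok in draft:
            expected = target_next(out + accepted)
            if tok != expected:
                # First mismatch: keep the target's own token, drop the rest.
                accepted.append(expected)
                break
            accepted.append(tok)
        else:
            # Every draft token matched, so the target's next prediction
            # comes for free (if there is still room).
            if len(out) + len(accepted) < goal:
                accepted.append(target_next(out + accepted))

        out.extend(accepted)
    return out[len(prompt):]


# Toy stand-ins for the two models (assumptions for illustration only).
def target_next(ctx: List[Token]) -> Token:
    return (sum(ctx) * 31 + len(ctx)) % 50


def draft_next(ctx: List[Token]) -> Token:
    # Agrees with the target except at every third context length.
    guess = target_next(ctx)
    return (guess + 1) % 50 if len(ctx) % 3 == 0 else guess


if __name__ == "__main__":
    prompt = [1, 2, 3]
    fast = speculative_decode(target_next, draft_next, prompt, 20, k=4)
    slow = greedy_decode(target_next, prompt, 20)
    print(fast == slow)
```

Under greedy decoding, the accepted tokens are always the ones the target model would have picked on its own, so the output is identical to standard generation even when the draft model is wrong. The speedup depends on how often the drafts are accepted, since each rejected draft token is wasted work.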
