CoAction: Cross-task Correlation-aware Pareto Set Learning

arXiv cs.LG / 5/5/2026

Key Points

- Pareto set learning (PSL) trains neural networks to map preference vectors to Pareto-optimal solutions, but prior work typically solves one multi-objective problem at a time, which limits scalability to multi-task settings.

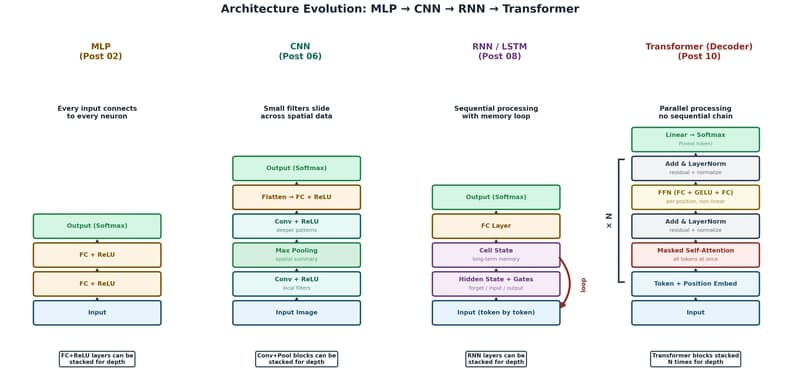

- The paper introduces CoAction, a cross-task correlation-aware PSL framework that jointly learns multiple tasks by using a task-aware Transformer architecture.

- CoAction distinguishes tasks via task-specific embedding vectors while still enabling knowledge sharing and modeling correlations between tasks.

- The Transformer encoder backbone leverages self-attention to capture complex dependencies across tasks, improving overall multi-task optimization quality.

- Experiments on multitask test suites (benchmarks and real-world applications) show competitive results across key metrics such as Hypervolume, Range, and Sparsity.
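
The key points above describe a model that takes a preference vector, tags each task with its own embedding, and lets self-attention mix information across tasks. A minimal NumPy sketch of that idea follows. It is not the paper's architecture: the class name `TaskAwarePSLSketch`, the single attention head, the shared preference vector, and the assumption that every task has the same number of objectives and decision variables are all illustrative choices, and the weights are random rather than trained.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax."""
    z = x - x.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


class TaskAwarePSLSketch:
    """Toy forward pass: one token per task, self-attention across tasks.

    Hypothetical illustration only; layer sizes and structure are assumptions.
    """

    def __init__(self, n_tasks, n_obj, n_var, d_model=16, seed=0):
        rng = np.random.default_rng(seed)
        s = 1.0 / np.sqrt(d_model)
        self.d_model = d_model
        # One embedding vector per task distinguishes the tasks.
        self.task_emb = rng.normal(0.0, s, (n_tasks, d_model))
        # Projects the preference vector into the model dimension.
        self.w_pref = rng.normal(0.0, s, (n_obj, d_model))
        # Single-head attention projections, shared by all tasks.
        self.w_q = rng.normal(0.0, s, (d_model, d_model))
        self.w_k = rng.normal(0.0, s, (d_model, d_model))
        self.w_v = rng.normal(0.0, s, (d_model, d_model))
        # Output head mapping each task token to a candidate solution.
        self.w_out = rng.normal(0.0, s, (d_model, n_var))

    def forward(self, pref):
        """pref: shape (n_obj,), non-negative and summing to 1."""
        # Token for task t = projected preference + task embedding.
        tokens = self.task_emb + pref @ self.w_pref      # (n_tasks, d_model)
        q = tokens @ self.w_q
        k = tokens @ self.w_k
        v = tokens @ self.w_v
        # Each row says how much one task attends to every task.
        attn = softmax(q @ k.T / np.sqrt(self.d_model))  # (n_tasks, n_tasks)
        hidden = tokens + attn @ v                       # residual connection
        # Sigmoid keeps every decision variable inside (0, 1).
        solutions = 1.0 / (1.0 + np.exp(-(hidden @ self.w_out)))
        return solutions, attn


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    pref = rng.dirichlet(np.ones(2))   # random preference on the simplex
    model = TaskAwarePSLSketch(n_tasks=3, n_obj=2, n_var=5)
    solutions, attn = model.forward(pref)
    print(solutions.shape, attn.shape)
```

In a trained version, the weights would be fitted so that each row of `solutions` approximates a Pareto-optimal point of its task for the given preference, for example by minimizing a scalarized objective; that training loop is omitted here.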
