Text Summarization With Graph Attention Networks

arXiv cs.CL / 4/7/2026


Key Points

- The paper explores whether graph information, specifically Rhetorical Structure Theory (RST) and coreference graphs, can improve text summarization performance over baseline models.

- Incorporating the graph information through a Graph Attention Network (GAT) did not improve results, so the authors switched to a simpler multi-layer perceptron (MLP) architecture, which did improve performance on the CNN/DM dataset.

- The authors also annotated the XSum dataset with RST graph information, creating a new benchmark intended to support future research on graph-based summarization.

- The graph-annotated XSum dataset posed notable challenges that highlight the strengths and limitations of both the authors' models and graph-based methods in general.
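
For readers unfamiliar with graph attention, the following is a minimal sketch of a single-head graph attention layer in the general style of the original GAT formulation. It is not the authors' model: this summary does not describe their architecture, so the function names (`gat_layer`, `matvec`), the toy sentence features, and the single edge standing in for a coreference or RST link are all illustrative assumptions. Each node (for example, a sentence) scores its neighbours, normalizes the scores with a softmax, and takes a weighted average of the neighbours' projected features.

```python
import math


def leaky_relu(x, slope=0.2):
    return x if x > 0 else slope * x


def matvec(W, h):
    # W is a list of rows (out_dim x in_dim); h is a vector of length in_dim.
    return [sum(w * x for w, x in zip(row, h)) for row in W]


def gat_layer(features, edges, W, a):
    """Single-head graph attention over an undirected graph (illustrative only).

    features: list of node feature vectors
    edges:    iterable of (i, j) node-index pairs
    W:        projection matrix, out_dim x in_dim
    a:        attention vector of length 2 * out_dim
    Returns (new_features, attention), where attention[i] maps each
    neighbour j of node i to its attention weight.
    """
    n = len(features)
    neighbors = [{i} for i in range(n)]  # every node attends to itself
    for i, j in edges:
        neighbors[i].add(j)
        neighbors[j].add(i)

    z = [matvec(W, h) for h in features]  # projected node features
    out, attention = [], []
    for i in range(n):
        nbrs = sorted(neighbors[i])
        # Unnormalized score: LeakyReLU(a . [z_i || z_j])
        scores = [
            leaky_relu(sum(w * x for w, x in zip(a, z[i] + z[j])))
            for j in nbrs
        ]
        # Numerically stable softmax over the neighbourhood
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        alphas = [e / total for e in exps]
        # Attention-weighted average of neighbour features
        out.append([
            sum(al * z[j][d] for al, j in zip(alphas, nbrs))
            for d in range(len(z[i]))
        ])
        attention.append(dict(zip(nbrs, alphas)))
    return out, attention


# Toy example: three sentence nodes, one hypothetical link between 0 and 1.
features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
edges = [(0, 1)]
W = [[1.0, 0.0], [0.0, 1.0]]   # identity projection, for readability
a = [0.0, 0.0, 0.0, 0.0]       # zero attention vector gives uniform weights
out, attn = gat_layer(features, edges, W, a)
```

With the zero attention vector used here, every neighbourhood is weighted uniformly, so node 0 becomes the average of nodes 0 and 1, and the isolated node 2 keeps its own features. In a trained model `W` and `a` would be learned, and a library implementation would normally replace this hand-written loop.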

Related Articles

Research with ChatGPT

Dev.to

Silicon Valley is quietly running on Chinese open source models and almost nobody is talking about it

Reddit r/LocalLLaMA

Why AI Product Quality Is Now an Evaluation Pipeline Problem, Not a Model Problem

Dev.to

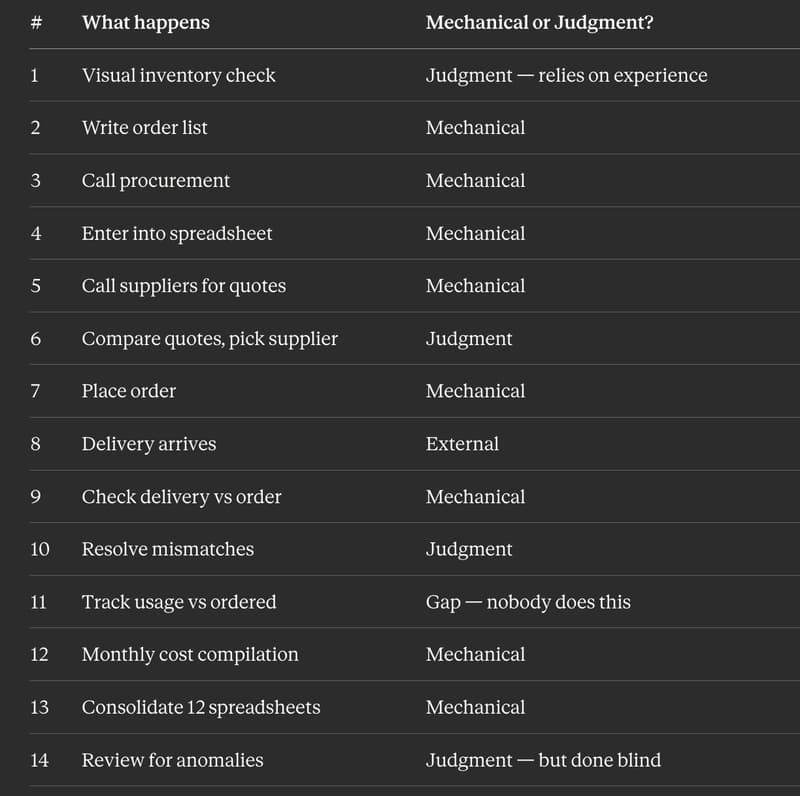

I Replaced 12 Kitchen Managers Guessing "How Much Chicken Do We Need" With 3 ML Models. Here's the Entire Architecture.

Dev.to

AI Model Router API - REST + MCP, Free Tier

Dev.to