Cross-Family Speculative Decoding for Polish Language Models on Apple Silicon: An Empirical Evaluation of Bielik 11B with UAG-Extended MLX-LM

arXiv cs.CL / 4/21/2026


Key Points

- The paper extends the MLX-LM framework with Universal Assisted Generation (UAG) to enable speculative decoding across mismatched tokenizers on Apple Silicon.

- Using Bielik 11B-Instruct (Mistral-based) as the target and three draft models (Bielik 1.5B, Qwen2.5-1.5B, and Llama 3.2-1B), the study evaluates draft lengths k ∈ {2, 4, 6} on Polish datasets (Wikipedia, pl_alpaca, and synthetic data).

- Context-aware token translation improves acceptance rates across configurations, but the Polish-specialized Bielik 1.5B draft shows lower acceptance than general-purpose Qwen2.5 and Llama 3.2 drafts.

- Throughput gains on Apple Silicon are content-dependent: up to ~1.7x speedup for structured text, but degraded performance for varied instructions. The amortization predicted by theory does not materialize because both models become memory-bandwidth limited.

- The authors provide a hardware-aware speedup formula and conditions for when cross-family speculative decoding is likely to work effectively on unified memory systems.
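
To make the trade-off between acceptance rate, draft length, and model cost concrete, here is a minimal sketch of the standard speculative-decoding speedup estimate from the general literature. It is not the paper's hardware-aware formula, which this summary does not reproduce. The sketch assumes each drafted token is accepted independently with probability `alpha`; `cost_ratio` is the time of one draft forward pass relative to one target pass, and `verify_cost` is a hypothetical parameter for how much more a k-token verification pass costs than a single-token target pass (1.0 means verification is fully amortized).

```python
def expected_tokens_per_step(alpha: float, k: int) -> float:
    """Expected tokens emitted per verification pass: accepted drafts plus one
    token from the target, assuming i.i.d. acceptance with probability alpha."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    if k < 0:
        raise ValueError("k must be non-negative")
    if alpha == 1.0:
        return float(k + 1)
    # Geometric series: 1 + alpha + alpha^2 + ... + alpha^k
    return (1.0 - alpha ** (k + 1)) / (1.0 - alpha)


def estimated_speedup(alpha: float, k: int, cost_ratio: float,
                      verify_cost: float = 1.0) -> float:
    """Estimated speedup over plain autoregressive decoding.

    cost_ratio  : draft pass time / target single-token pass time.
    verify_cost : hypothetical knob; target time to verify the drafted tokens,
                  relative to one single-token target pass.
    """
    step_time = k * cost_ratio + verify_cost
    return expected_tokens_per_step(alpha, k) / step_time


if __name__ == "__main__":
    # Illustrative inputs only; these are not measurements from the paper.
    for k in (2, 4, 6):
        ideal = estimated_speedup(alpha=0.7, k=k, cost_ratio=0.15)
        costly = estimated_speedup(alpha=0.7, k=k, cost_ratio=0.15, verify_cost=1.5)
        print(f"k={k}: amortized={ideal:.2f}x, costly verification={costly:.2f}x")
```

Raising `verify_cost` above 1.0 shows why a high acceptance rate alone does not guarantee a gain: if verifying several tokens costs noticeably more than generating one, the estimate can fall below 1.0 and speculative decoding becomes slower than the baseline.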

Related Articles

Agent Package Manager (APM): A DevOps Guide to Reproducible AI Agents

Dev.to

3 Things I Learned Benchmarking Claude, GPT-4o, and Gemini on Real Dev Work

Dev.to

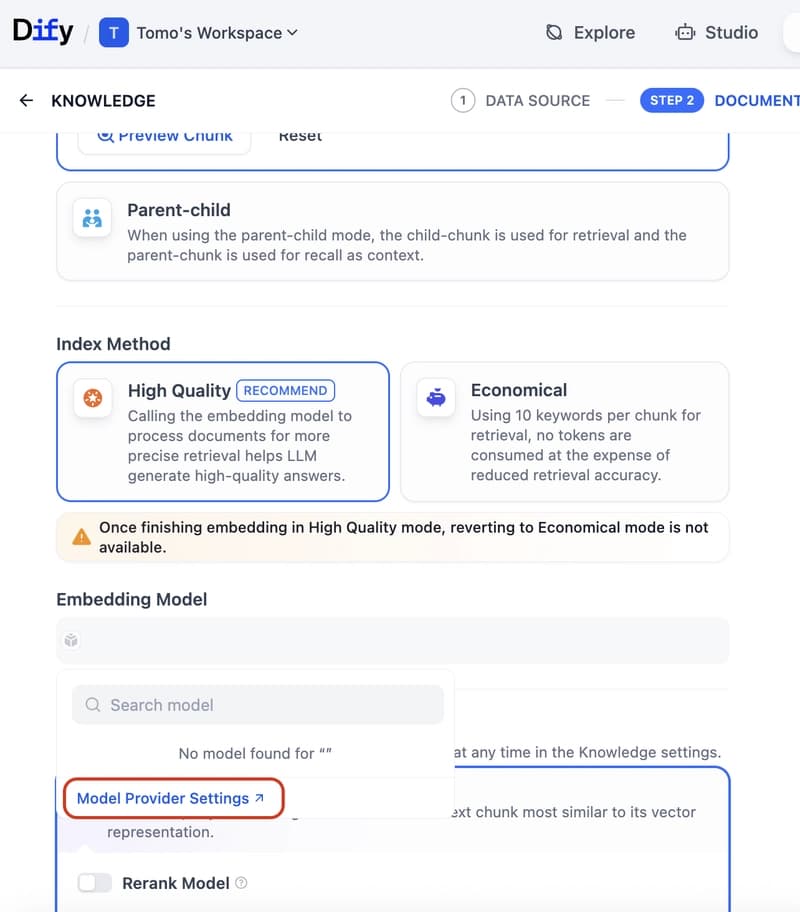

Dify Now Supports IRIS as a Vector Store — Setup Guide

Dev.to

How to build a Claude chatbot with streaming responses in under 50 lines of Node.js

Dev.to

Open Source Contributors Needed for Skillware & Rooms (AI/ML/Python)

Dev.to