Neural Network Optimization Reimagined: Decoupled Techniques for Scratch and Fine-Tuning

arXiv cs.CV / 4/28/2026


Key Points

- The paper introduces DualOpt, an optimizer framework that separates optimization strategies for training from scratch versus fine-tuning pre-trained neural networks.

- For training from scratch, DualOpt applies real-time, layer-wise weight decay, tailoring the decay to each layer's update behavior and to the network architecture in order to improve convergence and generalization.

- For fine-tuning, it integrates a weight rollback term into every optimizer update step. This keeps the weight distributions of the downstream (fine-tuned) model consistent with those of the upstream (pre-trained) model, reducing knowledge forgetting.

- It further extends the layer-wise weight decay idea to rollback, dynamically adjusting the rollback strength of each layer according to the needs of the downstream task, with the aim of better adaptation.

- Experiments on image classification, object detection, semantic segmentation, and instance segmentation show that DualOpt is broadly applicable and achieves state-of-the-art results; the code is available on GitHub.
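The two update rules summarized above can be illustrated with a minimal sketch, assuming plain SGD and per-layer coefficients supplied as a fixed input. The function name `sgd_step_with_layerwise_decay` and its `decay` and `anchor` parameters are hypothetical; this is not DualOpt's implementation, and the paper's rule for computing per-layer coefficients in real time is not reproduced here. With no anchor, the extra term is ordinary layer-wise weight decay toward zero; passing the pre-trained weights as the anchor turns the same term into a rollback pull toward the upstream model, similar in spirit to L2-SP regularization.

```python
from typing import Dict, List, Optional

# Each layer name maps to a flat list of weights (or gradients).
Params = Dict[str, List[float]]


def sgd_step_with_layerwise_decay(
    params: Params,
    grads: Params,
    lr: float,
    decay: Dict[str, float],
    anchor: Optional[Params] = None,
) -> Params:
    """One SGD step with a per-layer decay/rollback term (hypothetical sketch).

    anchor is None -> each layer decays toward zero (scratch-training case).
    anchor is w0   -> each layer is pulled back toward the pre-trained
                      weights w0 (fine-tuning "rollback" case).
    """
    new_params: Params = {}
    for name, w in params.items():
        g = grads[name]
        # Hypothetical per-layer strength; layers not listed get no decay.
        lam = decay.get(name, 0.0)
        # Reference point the decay pulls toward: zero or the upstream weights.
        ref = anchor[name] if anchor is not None else [0.0] * len(w)
        new_params[name] = [
            wi - lr * gi - lr * lam * (wi - ri)
            for wi, gi, ri in zip(w, g, ref)
        ]
    return new_params


# Scratch training: only "conv1" is decayed toward zero.
w = {"conv1": [1.0, -2.0], "fc": [0.5]}
zero_g = {"conv1": [0.0, 0.0], "fc": [0.0]}
print(sgd_step_with_layerwise_decay(w, zero_g, lr=0.1, decay={"conv1": 0.5}))

# Fine-tuning: drifted weights are rolled back toward the pre-trained w0.
w0 = {"conv1": [1.0, -2.0], "fc": [0.5]}
ft = {"conv1": [1.2, -2.0], "fc": [0.5]}
print(sgd_step_with_layerwise_decay(ft, zero_g, lr=0.1,
                                    decay={"conv1": 0.5, "fc": 0.5}, anchor=w0))
```

Giving different layers different `decay` values is one way to express layer-dependent rollback strength; how DualOpt actually selects those values for a given downstream task is not modeled in this sketch.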

Related Articles

Write a 1,200-word blog post: "What is Generative Engine Optimization (GEO) and why SEO teams need it now"

Dev.to

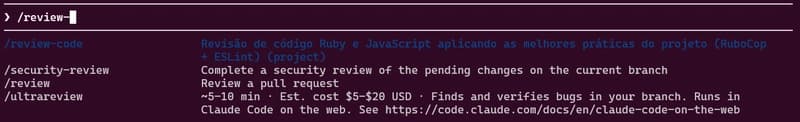

how to use skills from Claude Code A.K.A Claudinho.

Dev.to

Indian Developers: How to Build AI Side Income with $0 Capital in 2026

Dev.to

Most People Use AI Like Google. That's Why It Sucks.

Dev.to

Behind the Scenes of a Self-Evolving AI: The Architecture of Tian AI

Dev.to