Evaluated Qwen 3.6 27B across BF16, Q4_K_M, and Q8_0 GGUF quant variants with llama-cpp-python using Neo AI Engineer.

Benchmarks used:

Total samples:

Results: BF16

Q4_K_M

Q8_0

What stood out: Q4_K_M looks like the best practical variant here. It keeps BFCL almost identical to BF16, drops about 5.5 points on HumanEval, and is still only 4 points behind BF16 on HellaSwag. The tradeoff is pretty good:

Q8_0 was a bit underwhelming in this run. It improved HumanEval over Q4_K_M by ~1.8 points, but used 42 GB RAM vs 28 GB and was slower. It also scored lower than Q4_K_M on HellaSwag in this eval.

For local/CPU deployment, I would probably pick Q4_K_M unless the workload is heavily code-generation focused. For maximum quality, BF16 still wins.

Evaluation setup:

This evaluation was done using Neo AI Engineer, which built the GGUF eval setup, handled checkpointed runs, and consolidated the benchmark results. I manually reviewed the outcome as well. The complete case study, with benchmarking results, approach, and code snippets, is linked in the comments below 👇
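The post does not show how the checkpointed runs were implemented, so the following is only a minimal sketch of the general idea: append each result to a JSONL file and skip already-finished samples on restart. The function names (`run_checkpointed`, `make_llama_generator`), the `id`/`prompt` sample fields, and the generation settings are all assumptions for illustration, not the setup Neo AI Engineer actually produced.

```python
import json
import os
from typing import Callable, Iterable


def run_checkpointed(
    samples: Iterable[dict],
    generate: Callable[[str], str],
    checkpoint_path: str,
) -> list[dict]:
    """Run `generate` over samples, appending each result to a JSONL file.

    Samples whose "id" already appears in the checkpoint are skipped, so an
    interrupted run can be restarted without redoing finished work.
    """
    done: dict[str, dict] = {}
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path, encoding="utf-8") as f:
            for line in f:
                if line.strip():
                    record = json.loads(line)
                    done[record["id"]] = record

    with open(checkpoint_path, "a", encoding="utf-8") as f:
        for sample in samples:
            if sample["id"] in done:
                continue
            record = {"id": sample["id"], "output": generate(sample["prompt"])}
            f.write(json.dumps(record) + "\n")
            f.flush()  # make the result durable before moving on
            done[sample["id"]] = record
    return list(done.values())


def make_llama_generator(model_path: str) -> Callable[[str], str]:
    """Hypothetical llama-cpp-python wrapper; all settings are placeholders."""
    # Imported lazily so the checkpoint loop has no hard dependency on it.
    from llama_cpp import Llama

    llm = Llama(model_path=model_path, n_ctx=4096, verbose=False)

    def generate(prompt: str) -> str:
        result = llm.create_completion(prompt, max_tokens=256, temperature=0.0)
        return result["choices"][0]["text"]

    return generate
```

With something like this, the same loop could be pointed at each GGUF file in turn (one checkpoint file per variant and benchmark), which keeps a long CPU run resumable if it gets interrupted.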

Qwen 3.6 27B BF16 vs Q4_K_M vs Q8_0 GGUF evaluation

Reddit r/LocalLLaMA / 4/28/2026

💬 Opinion · Developer Stack & Infrastructure · Tools & Practical Usage · Models & Research

Key Points

- The article compares Qwen 3.6 27B performance across BF16 and two GGUF quantized formats (Q4_K_M and Q8_0) using llama-cpp-python on Neo AI Engineer.

- Across HumanEval, HellaSwag, and BFCL (function calling), BF16 achieves the best overall accuracy, but Q4_K_M delivers a close practical alternative with much lower resource usage.

- Q4_K_M shows nearly identical BFCL scores to BF16 (63.0–63.25%) while reducing peak RAM from 54 GB (BF16) to 28 GB and shrinking the model file to 16.8 GB.

- Q8_0 performs less favorably in this run: it is slower and uses more peak RAM than Q4_K_M, with lower HellaSwag results despite a slight improvement in HumanEval.

- For local/CPU deployments, the piece recommends Q4_K_M by default unless the workload is heavily code-generation focused, while BF16 remains the choice for maximum quality.
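To put the memory numbers in perspective, here is a small sketch that turns the peak RAM figures quoted in the post (54 GB for BF16, 42 GB for Q8_0, 28 GB for Q4_K_M) into relative savings. The dictionary and helper name are made up for illustration; only the three RAM values come from the post.

```python
# Peak RAM figures (GB) as reported in the post.
PEAK_RAM_GB = {"BF16": 54, "Q8_0": 42, "Q4_K_M": 28}


def ram_saving_vs_bf16(variant: str) -> float:
    """Return the fractional peak-RAM reduction of `variant` relative to BF16."""
    baseline = PEAK_RAM_GB["BF16"]
    return (baseline - PEAK_RAM_GB[variant]) / baseline


for name in ("Q8_0", "Q4_K_M"):
    print(f"{name}: {ram_saving_vs_bf16(name):.0%} less peak RAM than BF16")
# Q8_0: 22% less peak RAM than BF16
# Q4_K_M: 48% less peak RAM than BF16
```

So Q4_K_M roughly halves peak RAM relative to BF16 in this run, while Q8_0 saves a bit over a fifth.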

Related Articles

Black Hat USA

AI Business

Write a 1,200-word blog post: "What is Generative Engine Optimization (GEO) and why SEO teams need it now"

Dev.to

Remove Background from Image Free (No Signup): The Practical Guide

Dev.to

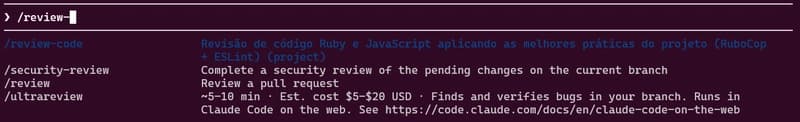

how to use skills from Claude Code A.K.A Claudinho.

Dev.to

Indian Developers: How to Build AI Side Income with $0 Capital in 2026

Dev.to