Information-Theoretic Limits of Safety Verification for Self-Improving Systems

arXiv stat.ML / 3/31/2026

💬 OpinionIdeas & Deep AnalysisModels & Research

Key Points

- The paper formalizes whether a “safety gate” can allow unbounded beneficial self-modification while keeping bounded cumulative risk, using conditions like bounded risk (∑δ_n < ∞) and unbounded utility (∑TPR_n = ∞).

- It proves an incompatibility result: for power-law risk schedules with δ_n = O(n^{-p}) (p > 1), classifier-based gating cannot simultaneously achieve bounded cumulative risk and unbounded utility, yielding TPR_n that is summable and thus cannot diverge.

- The authors derive a universal finite-horizon ceiling showing that, even with any summable risk schedule, the maximum achievable classifier-based utility grows only subpolynomially (around exp(O(sqrt(log N))))—illustrated by a stark gap between classifier- vs verifier-based limits at N = 10^6.

- A separate “verification escape” theorem shows that using a Lipschitz-ball verifier can achieve δ = 0 with TPR > 0, avoiding the classifier-impossibility, with formal bounds applied to pre-LayerNorm transformers under LoRA.

- Empirically, they validate the verifier escape on GPT-2 using LoRA (d_LoRA = 147,456), reporting conditional δ = 0 with TPR = 0.352, with broader experiments deferred to a companion work.

Related Articles

How to Verify Information Online and Avoid Fake Content

Dev.to

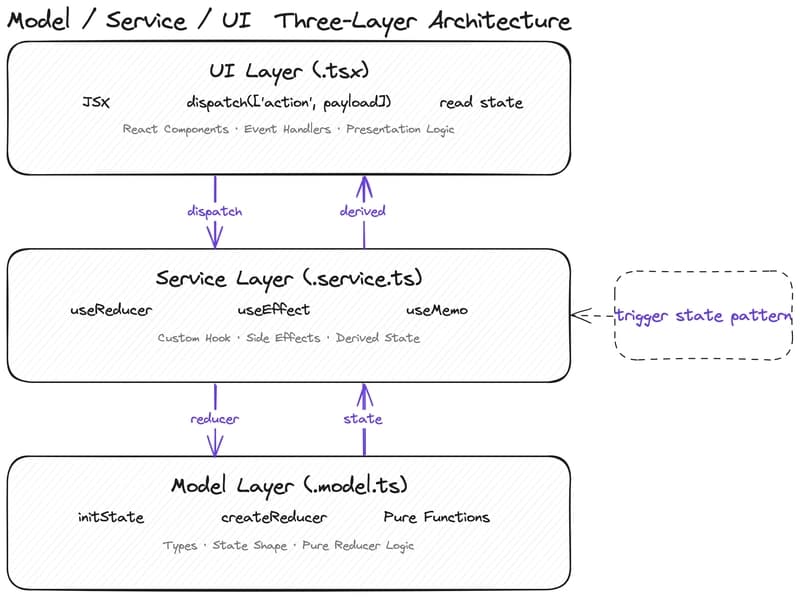

Why Your State Management Is Slowing Down AI-Assisted Development

Dev.to

From Black Box to Trusted Tool: Quality Control for AI in Literature Reviews

Dev.to

Fake users generated by AI can't simulate humans — review of 182 research papers. Your thoughts?

Reddit r/artificial

[D] ICPR Decision Discussion

Reddit r/MachineLearning