Modeling Biomechanical Constraint Violations for Language-Agnostic Lip-Sync Deepfake Detection

arXiv cs.CV / 4/21/2026


Key Points

- The paper argues that existing lip-sync deepfake detectors overfit to pixel-level or audio-visual cues that don’t generalize across languages.

- It proposes a language-agnostic detection signal grounded in biomechanics: generative models fail to enforce natural orofacial articulation constraints, which leads to elevated temporal lip variance.

- The authors call this discrepancy “temporal lip jitter” and show that it remains consistent across language, ethnicity, and recording conditions.

- They introduce BioLip, a lightweight framework that operationalizes this principle using 64 perioral landmark coordinates from MediaPipe rather than raw pixels.

- The approach is positioned as more universal than artifact-based methods by tying detection to physical plausibility rather than data-dependent patterns.
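
To make the idea of elevated temporal lip variance concrete, here is a minimal sketch of one way such a score could be computed from landmark trajectories. It is not the paper's BioLip implementation: the function name `temporal_lip_jitter`, the input shape `(T, K, 2)`, the centroid and scale normalization, and the choice of "variance of frame-to-frame displacement" as the statistic are all assumptions made for illustration. Landmark extraction is left out; the input is assumed to be an array of perioral landmark coordinates already produced by a face-landmark tool.

```python
import numpy as np


def temporal_lip_jitter(landmarks: np.ndarray) -> float:
    """Hypothetical jitter score for a (T, K, 2) array of lip landmarks.

    T is the number of frames and K the number of perioral landmarks.
    This statistic is an illustrative assumption, not the paper's definition.
    """
    pts = np.asarray(landmarks, dtype=float)
    if pts.ndim != 3 or pts.shape[0] < 3:
        raise ValueError("expected an array of shape (T, K, D) with T >= 3")

    # Remove global head translation by centering each frame on its centroid.
    centered = pts - pts.mean(axis=1, keepdims=True)

    # Divide by the average landmark spread so the score does not depend
    # on image resolution or face size.
    scale = np.linalg.norm(centered, axis=2).mean()
    if scale == 0:
        raise ValueError("landmarks are degenerate (all points coincide)")
    centered = centered / scale

    # Per-landmark displacement magnitude between consecutive frames: (T-1, K).
    displacement = np.linalg.norm(np.diff(centered, axis=0), axis=2)

    # Variance of that displacement over time, averaged across landmarks.
    # Smooth articulation gives a low value; erratic motion gives a high one.
    return float(displacement.var(axis=0).mean())
```

Under these assumptions, a clip whose score sits well above the range observed on real footage would be flagged as suspicious. Any decision threshold would have to be calibrated on labeled data, which this sketch does not attempt.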

Related Articles

Capsule Security Emerges From Stealth With $7 Million in Funding

Dev.to

Rethinking Coding Education for the AI Era

Dev.to

Agent Package Manager (APM): A DevOps Guide to Reproducible AI Agents

Dev.to

3 Things I Learned Benchmarking Claude, GPT-4o, and Gemini on Real Dev Work

Dev.to

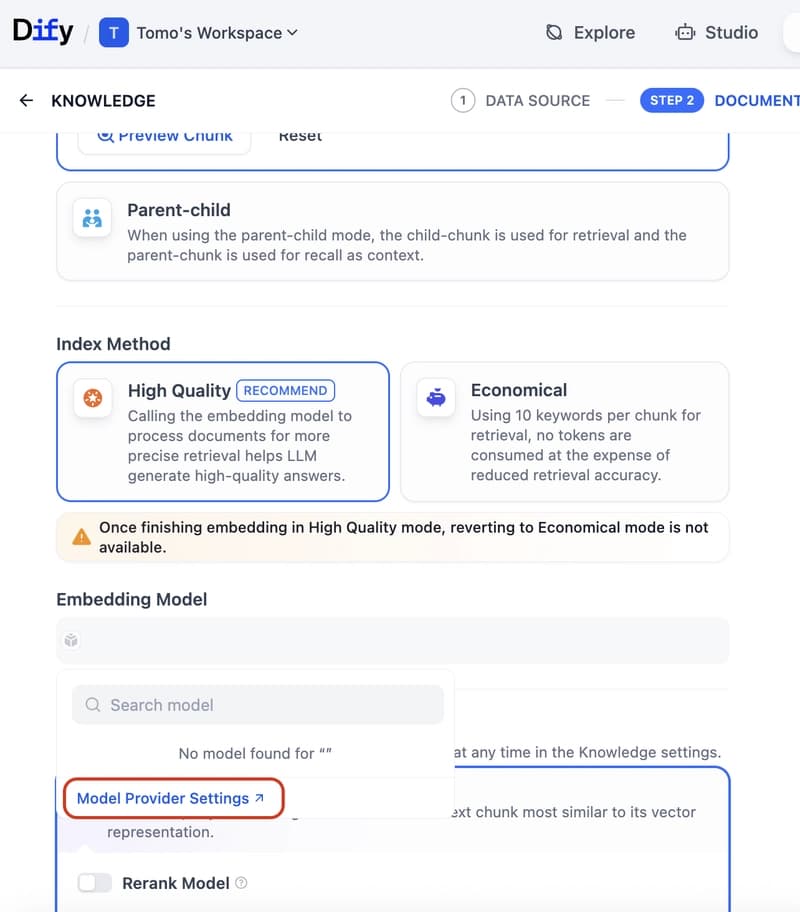

Dify Now Supports IRIS as a Vector Store — Setup Guide

Dev.to