Align Documents to Questions: Question-Oriented Document Rewriting for Retrieval-Augmented Generation

arXiv cs.CL / 4/21/2026


Key Points

- The paper introduces QREAM, a style-controlled rewriting method that reformats retrieved documents into a question-oriented style to reduce LLM hallucination caused by presentation bias in RAG.

- QREAM is implemented in two stages: QREAM-ICL, which performs iterative, style-guided rewriting using in-context learning, and QREAM-FT, a lightweight student model distilled from the ICL outputs.

- QREAM-FT uses dual-criteria rejection sampling to keep only candidate rewrites that satisfy both answer correctness and factual consistency, improving supervision quality.

- The approach is designed to be plug-and-play in existing RAG pipelines, and the reported experiments show up to an 8% relative improvement with essentially no added latency.

- Overall, the work argues that retrieved evidence quality is constrained not only by content but also by how it is presented to the LLM, and it addresses this bottleneck via aligned formatting.
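
The dual-criteria rejection sampling mentioned in the key points can be illustrated with a minimal Python sketch. It is not the paper's implementation: the `Candidate` type, the function names, and both checks are hypothetical stand-ins. Answer correctness is approximated by a substring match against a gold answer, and factual consistency by a crude test that every longer word in the rewrite already appears in the source document or the question. A real pipeline would more plausibly use a reader model and an entailment or LLM-based judge for these two checks.

```python
import string
from dataclasses import dataclass
from typing import List


@dataclass
class Candidate:
    """One candidate rewrite (hypothetical structure, for illustration only)."""
    question: str
    source_doc: str
    rewrite: str
    predicted_answer: str  # answer a reader model gave when shown the rewrite


def _tokens(text: str) -> set:
    # Lowercase, strip ASCII punctuation, split on whitespace.
    table = str.maketrans("", "", string.punctuation)
    return set(text.lower().translate(table).split())


def answer_is_correct(predicted: str, gold: str) -> bool:
    # Stand-in correctness check: gold answer appears in the prediction.
    return gold.strip().lower() in predicted.strip().lower()


def is_factually_consistent(cand: Candidate) -> bool:
    # Stand-in consistency check: every word longer than 3 characters in the
    # rewrite must already occur in the source document or the question.
    allowed = _tokens(cand.source_doc) | _tokens(cand.question)
    return all(t in allowed for t in _tokens(cand.rewrite) if len(t) > 3)


def rejection_sample(candidates: List[Candidate], gold: str) -> List[Candidate]:
    # Keep a rewrite only if it passes BOTH criteria.
    return [
        c for c in candidates
        if answer_is_correct(c.predicted_answer, gold) and is_factually_consistent(c)
    ]


doc = "The Eiffel Tower was completed in 1889 in Paris."
q = "When was the Eiffel Tower completed?"
pool = [
    Candidate(q, doc, "The Eiffel Tower was completed in 1889.", "1889"),
    Candidate(q, doc, "The Eiffel Tower was completed in 1889 by Napoleon.", "1889"),
    Candidate(q, doc, "The Eiffel Tower was completed in Paris.", "Paris"),
]
kept = rejection_sample(pool, gold="1889")
print([c.rewrite for c in kept])
```

In this toy pool, the second candidate yields the right answer but adds a detail absent from the source, and the third stays faithful to the source but leads to the wrong answer, so only the first would be kept as supervision for a student model.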

Related Articles

Add cryptographic authorization to AI agents in 5 minutes

Dev.to

Building a website with Replit and Vercel

Dev.to

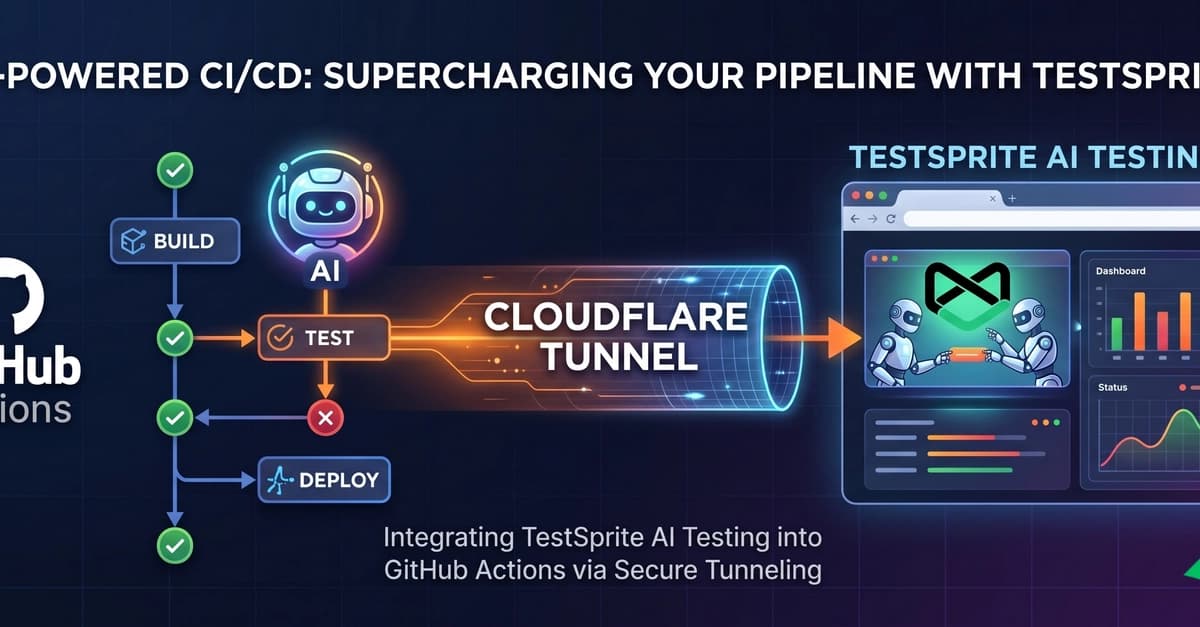

Supercharging Your CI/CD: Integrating TestSprite AI Testing with GitHub Actions

Dev.to

The ULTIMATE Guide to AI Voice Cloning: RVC WebUI (Zero to Hero)

Dev.to

Kiwi-chan Devlog #007: The Audit Never Sleeps (and Neither Does My GPU)

Dev.to