The Parallelization Trap: Why Running More Agents Simultaneously Often Makes Things Worse

Every system designer eventually reaches the same conclusion: if one agent can do X, then two agents should do 2X. And four agents should do 4X. This intuition is seductively logical. It's also wrong more often than it's right.

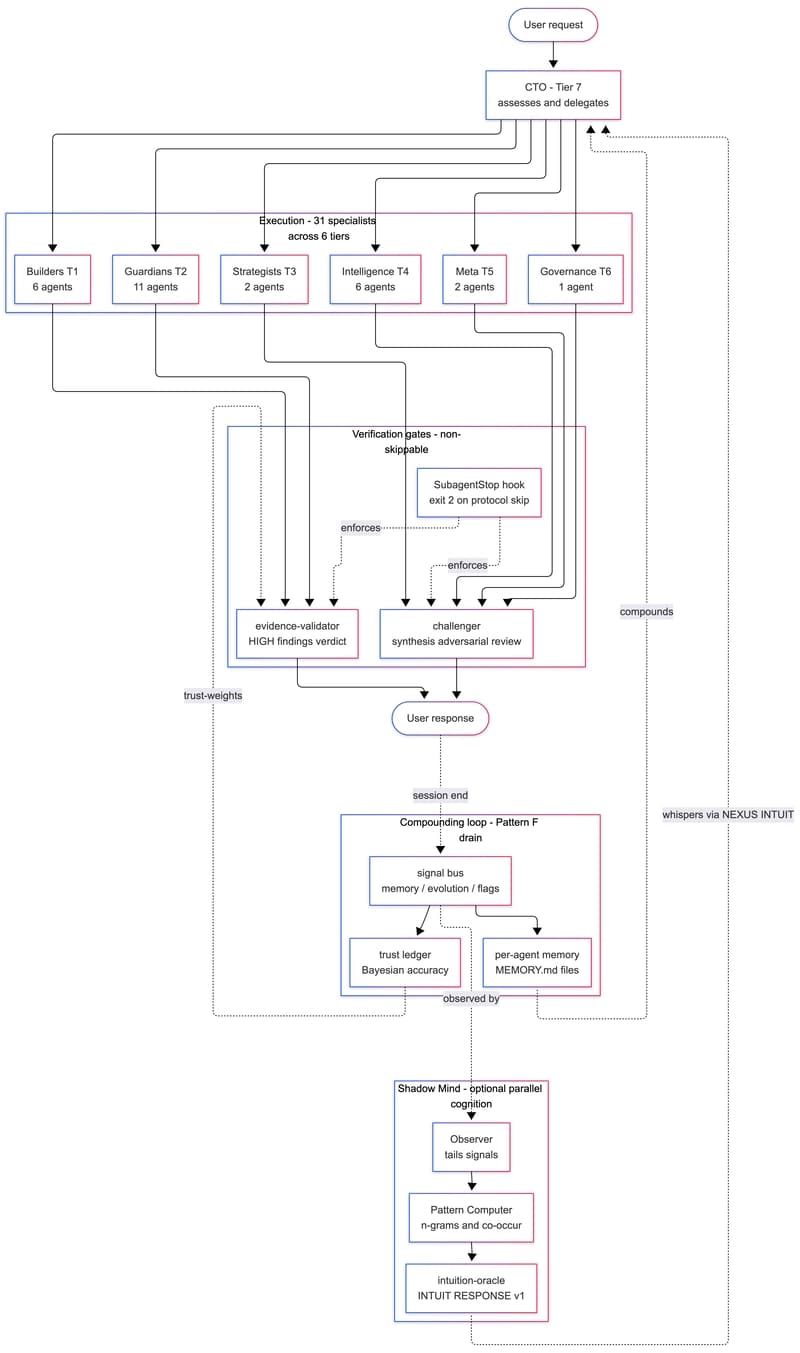

The parallelization trap is the phenomenon where adding concurrent agent capacity reduces overall system throughput instead of increasing it. It's not a resource problem — your servers can handle the load. It's a coordination and coherence problem that compounds with every agent you add.

Where the Trap Springs

The mechanism is deceptively simple. When you run agents in parallel, three things happen:

Contention on shared context. When multiple agents read from and write to the same shared context, they contend for it. Whichever agent writes first changes the state the others should be working from, but the others keep working from the snapshot they already read. Their information is stale or conflicting, and their outputs contradict each other.

Nondeterministic sequencing of effects. Agent A and Agent B both operate on the world. Agent A creates a file. Agent B reads that file and makes a decision based on it — but Agent B's read happens before Agent A's write completes. Now you have an agent operating on a false state, and the error propagates downstream in ways that are hard to trace.

Reward signal dilution. When you have one agent, the reward signal (whatever feedback loop you use to shape behavior) is clear and direct. Add ten agents and the reward signal gets distributed. Agents optimize for local outcomes that may conflict with global objectives. You've built a system of actors each optimizing for something different, and the emergent behavior is worse than if you'd run a single agent sequentially.
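The second failure mode, a read that races ahead of a write, can be reproduced in a few lines. The sketch below is illustrative only: it uses Python's asyncio, and the names agent_a, agent_b_unsafe, agent_b_safe, and the "report" key are hypothetical stand-ins rather than part of any real agent framework. The unsafe reader acts on whatever state exists when it happens to run; the safe reader waits for an explicit completion signal.

```python
import asyncio


async def agent_a(state: dict, done: asyncio.Event) -> None:
    """Hypothetical writer: does some work, then publishes its result."""
    await asyncio.sleep(0.01)  # simulated work before the write lands
    state["report"] = "42 rows"
    done.set()  # signal that the write is complete


async def agent_b_unsafe(state: dict) -> str:
    """Reads immediately, with no guarantee that agent_a has written yet."""
    return f"decision based on {state.get('report')}"


async def agent_b_safe(state: dict, done: asyncio.Event) -> str:
    """Waits for agent_a's completion signal before reading."""
    await done.wait()
    return f"decision based on {state.get('report')}"


async def run(safe: bool) -> str:
    state: dict = {}
    done = asyncio.Event()
    reader = agent_b_safe(state, done) if safe else agent_b_unsafe(state)
    # Both agents are started together; only the safe reader respects ordering.
    _, decision = await asyncio.gather(agent_a(state, done), reader)
    return decision
```

Calling asyncio.run(run(safe=False)) produces a decision built on a report that does not exist yet, while asyncio.run(run(safe=True)) produces one built on the written value. The only difference is the explicit wait.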

The Example That Makes This Concrete

Suppose you have a data processing pipeline. Three agents: Agent Parser, Agent Enricher, Agent Validator. Sequential execution: Agent Parser finishes, then Agent Enricher takes Parser's output, then Agent Validator checks Enricher's work.

Now run them in parallel. Agent Parser starts. Agent Enricher starts — but Enricher is now pulling from Parser's in-progress output. Agent Validator fires — but it's checking a half-written Enricher output. The Validator flags errors that don't exist. The Enricher receives a contradictory correction and recalculates. Now Parser is confused because Enricher sent back a state that doesn't align with what Parser wrote.

The final output is worse than what sequential execution produces. And every false correction and recalculation has cost extra compute along the way.
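A toy version of this pipeline shows how a validator that fires early reports errors that do not exist. Everything here is an assumption made for illustration: the comma-separated input, the "share" field, and the functions parse, enrich, and validate are hypothetical, and the half-written Enricher output is simulated by slicing a list.

```python
def parse(raw: str) -> list[dict]:
    """Parser: turn 'name,amount' lines into records."""
    rows = []
    for line in raw.strip().splitlines():
        name, amount = line.split(",")
        rows.append({"name": name.strip(), "amount": float(amount)})
    return rows


def enrich(rows: list[dict]) -> list[dict]:
    """Enricher: add each record's share of the total. Needs ALL parsed rows."""
    total = sum(row["amount"] for row in rows)
    return [{**row, "share": row["amount"] / total} for row in rows]


def validate(rows: list[dict]) -> list[str]:
    """Validator: shares must sum to 1. Only meaningful on complete output."""
    share_sum = sum(row["share"] for row in rows)
    if abs(share_sum - 1.0) > 1e-9:
        return [f"shares sum to {share_sum:.2f}, expected 1.00"]
    return []


RAW = "widgets,25\ngadgets,75"

# Sequential: each stage sees the finished output of the previous one.
complete = enrich(parse(RAW))
sequential_errors = validate(complete)

# Simulated premature run: the validator sees only the first enriched row.
premature_errors = validate(complete[:1])
```

Here sequential_errors is empty, while premature_errors contains a complaint about shares that would have summed correctly had the validator waited. The data was never wrong; the timing was.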

The Real Constraint Is Not CPU

The intuition behind the trap assumes the bottleneck is compute. In most agent systems, it isn't. The bottleneck is coherence: the degree to which every agent shares a consistent view of the world state. Parallelism only helps when coherence is maintained. When it breaks, you don't get speed. You get conflicting work, produced faster.

This is why workflow engines with strong sequencing guarantees (dependency graphs, explicit state machines) tend to outperform loose agent collectives on tasks whose steps depend on one another. The order is the point.
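As a minimal sketch of what a dependency graph buys you, the example below uses graphlib.TopologicalSorter from the Python standard library (3.9+) to group agents into waves. The agent names are hypothetical, and "summarizer" is an invented extra agent added only to show that a graph still permits parallelism where no dependency exists.

```python
from graphlib import TopologicalSorter


def execution_waves(deps: dict[str, set[str]]) -> list[list[str]]:
    """Group agents into waves; each wave depends only on earlier waves."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted for a stable ordering
        waves.append(ready)
        sorter.done(*ready)  # mark the whole wave finished, unlocking the next
    return waves


# Each key maps to the set of agents that must finish before it starts.
DEPENDENCIES = {
    "enricher": {"parser"},
    "summarizer": {"parser"},  # hypothetical agent, independent of enricher
    "validator": {"enricher", "summarizer"},
}

waves = execution_waves(DEPENDENCIES)
```

With these assumed dependencies, the parser runs alone, the enricher and summarizer can share a wave, and the validator runs last. Agents within a wave are the only ones that may safely run side by side.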

When Parallelization Actually Works

To be clear: parallelization works when the work is embarrassingly parallel — when agents operate on truly independent slices of state. Image processing. Independent data extraction. Parallel calls to external APIs with no shared state.
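For contrast, here is a sketch of the embarrassingly parallel case, using concurrent.futures from the Python standard library. The extraction function is a hypothetical placeholder (a word count); what matters is that each call touches only its own input and no shared state.

```python
from concurrent.futures import ThreadPoolExecutor


def extract_word_count(document: str) -> int:
    """Hypothetical extraction step: depends only on its own input."""
    return len(document.split())


def extract_all(documents: list[str], max_workers: int = 4) -> list[int]:
    """Run the extraction on every document in parallel."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() returns results in input order, whatever order they finish in.
        return list(pool.map(extract_word_count, documents))


counts = extract_all(["one two", "three four five", ""])
```

Because no worker reads anything another worker writes, there is no ordering to get wrong, and adding workers cannot change the result.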

It fails when agents must coordinate, when decisions in one thread affect the validity of decisions in another, and when the output must form a coherent whole rather than a collection of independent artifacts.

The rule of thumb: if two agents need a consistent view of the same changing state to do their jobs correctly, they cannot run in parallel without a coordination layer between them.
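One possible shape for such a coordination layer is optimistic versioning: every agent records the version of the shared context it read, and a write is rejected if the context has moved on since. This is a technique chosen here for illustration, not one the text above prescribes, and the SharedContext class and its methods are hypothetical.

```python
from dataclasses import dataclass, field


class StaleContextError(Exception):
    """Raised when an agent commits against an outdated snapshot."""


@dataclass
class SharedContext:
    version: int = 0
    facts: dict = field(default_factory=dict)

    def snapshot(self) -> tuple[int, dict]:
        """Return the current version and a copy of the facts."""
        return self.version, dict(self.facts)

    def commit(self, based_on: int, updates: dict) -> int:
        """Apply updates only if the caller read the latest version."""
        if based_on != self.version:
            raise StaleContextError(
                f"snapshot v{based_on} is stale; context is at v{self.version}"
            )
        self.facts.update(updates)
        self.version += 1
        return self.version


context = SharedContext()
seen_by_a, _ = context.snapshot()  # both agents read version 0
seen_by_b, _ = context.snapshot()

context.commit(seen_by_a, {"currency": "USD"})  # A commits first: accepted

try:
    context.commit(seen_by_b, {"currency": "EUR"})  # B's snapshot is now stale
    conflict_detected = False
except StaleContextError:
    conflict_detected = True
```

The second agent is forced to re-read before it can write, so a contradiction surfaces as an explicit error instead of silently entering the shared state. In a genuinely multithreaded setting the check and update inside commit would also need a lock, which this sketch omits.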

The Practical Test

Before parallelizing any agent workflow, ask: what happens if Agent B runs before Agent A completes?

If the answer is "Agent B fails or produces garbage," you have a dependency. Treat it as one. Use a sequencer, a queue, or a dependency graph. Don't parallelize past the coherence boundary.
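The test can be approximated mechanically if each agent declares what it reads and writes. The sketch below assumes such declarations exist, which is an assumption of this example; AgentSpec and the state keys are hypothetical. Two agents are treated as safe to run together only when neither writes anything the other reads or writes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    """Hypothetical declaration of the state an agent touches."""
    name: str
    reads: frozenset[str]
    writes: frozenset[str]


def can_run_in_parallel(a: AgentSpec, b: AgentSpec) -> bool:
    """True only if no write by one agent overlaps the other's reads or writes."""
    return (
        a.writes.isdisjoint(b.reads)
        and b.writes.isdisjoint(a.reads)
        and a.writes.isdisjoint(b.writes)
    )


parser = AgentSpec("parser", frozenset({"raw"}), frozenset({"parsed"}))
enricher = AgentSpec("enricher", frozenset({"parsed"}), frozenset({"enriched"}))
extractor_1 = AgentSpec("extractor_1", frozenset({"doc_1"}), frozenset({"out_1"}))
extractor_2 = AgentSpec("extractor_2", frozenset({"doc_2"}), frozenset({"out_2"}))

pipeline_ok = can_run_in_parallel(parser, enricher)          # enricher reads "parsed"
extractors_ok = can_run_in_parallel(extractor_1, extractor_2)  # no overlap
```

Under these assumed declarations, the parser and enricher fail the check because one consumes what the other produces, while the two extractors pass. A check like this is only as good as the declarations fed into it, so treat a pass as a necessary condition rather than proof of safety.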

The trap is seductive because parallelism feels like sophistication. But sophistication without understanding is just expensive confusion.