EditPropBench: Measuring Factual Edit Propagation in Scientific Manuscripts

arXiv cs.CL / 5/5/2026

📰 NewsIdeas & Deep AnalysisModels & Research

Key Points

- The paper introduces EditPropBench, a benchmark designed to evaluate how well LLM-based editors propagate a factual change through dependent claims in scientific manuscripts.

- Each benchmark item uses a synthetic, ML/NLP-style manuscript paired with a targeted edit and a fact graph annotated at the sentence level to distinguish direct targets, required downstream updates, and protected unrelated text.

- Across the benchmark’s more difficult implicit/free-form settings, results for five LLM editing systems vary substantially (ERA 0.148–0.705), and even the best system fails to capture about 30% of required cascade updates.

- Stress tests and metric analyses suggest LLM editors can outperform deterministic substitution baselines when easier, substitution-solvable cases are included, but reliable revision still needs cascade-aware verification.

- An audit of recent arXiv cs.CL papers finds fact-dependent qualitative claims appear in 37.2% of papers, underscoring the practical need for tools that handle non-local edit implications.

Related Articles

The 55.6% problem: why frontier LLMs fail at embedded code

Dev.to

Four CVEs in a week, all the same shape: when agents execute LLM-generated code

Dev.to

Healthcare AI Is Absorbing Institutional Knowledge It Can't Actually Hold

Reddit r/artificial

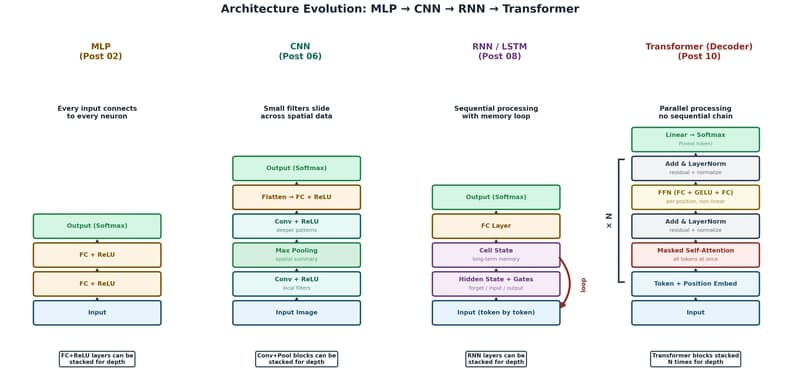

The Transformer: The Architecture Behind Modern AI

Dev.to

Foundational Models Defining a New Era in Vision: A Survey and Outlook

Dev.to