A Dual Cross-Attention Graph Learning Framework For Multimodal MRI-Based Major Depressive Disorder Detection

arXiv cs.CV / 4/14/2026


Key Points

- The paper introduces a dual cross-attention multimodal fusion framework that models bidirectional interactions between structural MRI (sMRI) and resting-state fMRI (rs-fMRI) for major depressive disorder (MDD) detection.

- Experiments on the large-scale REST-meta-MDD dataset evaluate the method under both structural and functional brain atlas configurations, using 10-fold stratified cross-validation.

- Results show that the proposed approach delivers robust, competitive performance across atlas types and outperforms simple feature-level concatenation when functional atlases are used.

- The best-performing model reports 84.71% accuracy, 86.42% sensitivity, 82.89% specificity, 84.34% precision, and 85.37% F1-score, highlighting the value of explicitly learning cross-modal relationships.

- The study argues that cross-modal interaction modeling is critical for multimodal neuroimaging-based classification where single-modality signals are insufficient.
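The bidirectional fusion idea can be illustrated with a minimal sketch. It assumes that per-region node embeddings for each modality have already been produced (for example, by a graph encoder, as the title suggests) and uses single-head scaled dot-product attention in both directions. The function names, residual connections, mean pooling, random projection weights, and region counts (90 and 116) are all hypothetical choices for illustration, not the authors' implementation.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row max for numerical stability before exponentiating.
    z = x - x.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(queries, context, w_q, w_k, w_v):
    """Single-head scaled dot-product attention: `queries` attend to `context`."""
    q = queries @ w_q                          # (n_q, d)
    k = context @ w_k                          # (n_c, d)
    v = context @ w_v                          # (n_c, d)
    scores = q @ k.T / np.sqrt(k.shape[-1])    # (n_q, n_c)
    weights = softmax(scores, axis=-1)         # each query row sums to 1
    return weights @ v, weights

def dual_cross_attention_fusion(smri, fmri, params):
    """Hypothetical bidirectional fusion of sMRI and rs-fMRI region embeddings.

    smri: (n_regions_s, d) structural node embeddings (assumed precomputed)
    fmri: (n_regions_f, d) functional node embeddings (assumed precomputed)
    Returns one fused vector of length 2 * d for a downstream classifier.
    """
    # Direction 1: structural regions query the functional context.
    s2f, _ = cross_attention(smri, fmri, *params["s_to_f"])
    # Direction 2: functional regions query the structural context.
    f2s, _ = cross_attention(fmri, smri, *params["f_to_s"])
    # Residual connections keep each modality's own signal (assumed design choice).
    smri_out = smri + s2f
    fmri_out = fmri + f2s
    # Mean-pool over regions, then concatenate the two enriched views.
    return np.concatenate([smri_out.mean(axis=0), fmri_out.mean(axis=0)])

# Toy usage with random data; sizes are arbitrary, not taken from the paper.
rng = np.random.default_rng(0)
d = 16
n_s, n_f = 90, 116
smri = rng.standard_normal((n_s, d))
fmri = rng.standard_normal((n_f, d))
params = {
    name: tuple(rng.standard_normal((d, d)) * 0.1 for _ in range(3))
    for name in ("s_to_f", "f_to_s")
}
fused = dual_cross_attention_fusion(smri, fmri, params)
print(fused.shape)  # (32,)
```

The contrast with the concatenation baseline mentioned above is that plain concatenation would pool `smri` and `fmri` independently and join them, so neither modality's regions ever weight the other's; here each region's representation is updated by attention over the other modality before pooling.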

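The reported metrics follow the standard confusion-matrix definitions. The sketch below assumes MDD is the positive class (the summary does not state this explicitly) and uses a made-up toy confusion matrix, not data from the paper. It also checks that the reported F1-score is consistent with the reported precision and sensitivity, since F1 is their harmonic mean.

```python
def binary_metrics(tp, fn, tn, fp):
    """Confusion-matrix metrics, treating MDD as the positive class (assumption)."""
    accuracy = (tp + tn) / (tp + tn + fp + fn)
    sensitivity = tp / (tp + fn)   # recall, true-positive rate
    specificity = tn / (tn + fp)   # true-negative rate
    precision = tp / (tp + fp)     # positive predictive value
    f1 = 2 * precision * sensitivity / (precision + sensitivity)
    return {
        "accuracy": accuracy,
        "sensitivity": sensitivity,
        "specificity": specificity,
        "precision": precision,
        "f1": f1,
    }

def f1_from(precision, sensitivity):
    """Harmonic mean of precision and sensitivity (recall)."""
    return 2 * precision * sensitivity / (precision + sensitivity)

# Hypothetical toy counts, chosen only to exercise the formulas.
toy = binary_metrics(tp=8, fn=2, tn=7, fp=3)
print(toy["accuracy"], toy["sensitivity"], toy["specificity"])  # 0.75 0.8 0.7

# Consistency check on the reported figures: precision 84.34%, sensitivity 86.42%.
print(round(f1_from(0.8434, 0.8642), 4))  # 0.8537, matching the reported 85.37% F1
```

Because accuracy, sensitivity, and specificity depend on class balance in different ways, reporting all five values (as the paper does) makes it easier to see whether a model favors one class.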
Related Articles

The AI Hype Cycle Is Lying to You About What to Learn

Dev.to

Big Tech firms are accelerating AI investments and integration, while regulators and companies focus on safety and responsible adoption.

Dev.to

Inside NVIDIA’s $2B Marvell Deal: What NVLink Fusion Means for AI Ethernet Fabrics

Dev.to

Automating Your Literature Review: From PDFs to Data with AI

Dev.to

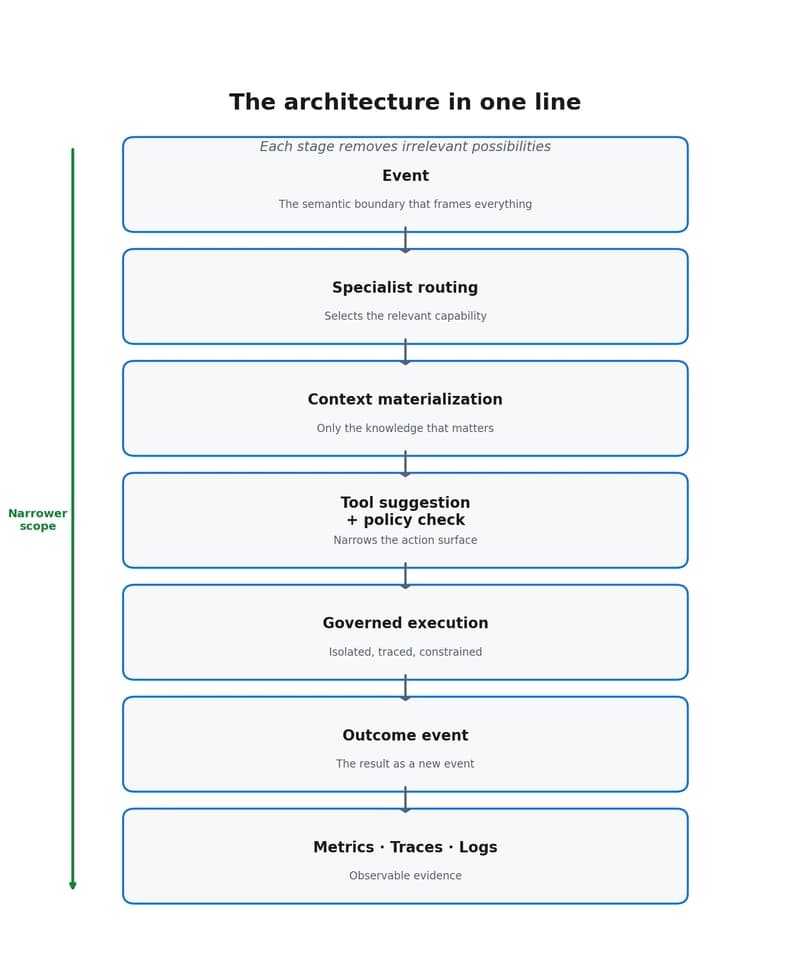

Why event-driven agents reduce scope, cost, and decision dispersion

Dev.to