HIVE: Hidden-Evidence Verification for Hallucination Detection in Diffusion Large Language Models

arXiv cs.CL / 4/30/2026

💬 Opinion · Models & Research

Key Points

- Diffusion-based large language models can reveal hallucination signals at intermediate denoising steps, not just in the final generated text.

- The paper introduces HIVE, which extracts and compresses hidden evidence from denoising trajectories, selects the most informative step-layer evidence, and conditions a verifier language model using prefix embeddings.

- HIVE outputs both a continuous hallucination score (from verifier logits) and structured verification results such as hallucination types, evidence pairs, and brief rationales.

- Across two diffusion LLMs and three QA benchmarks, HIVE outperforms eight baseline methods, reaching up to 0.9236 AUROC and 0.9537 AUPRC.

- Ablation experiments show that four key components (hidden-evidence conditioning, learned evidence selection, two-stream evidence representation, and step-layer embeddings) are essential to the performance gains.
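
To make the scoring and evaluation points above concrete, here is a minimal Python sketch. It is not the paper's implementation: the summary does not give the exact scoring formula, so the sketch assumes the continuous score is a two-way softmax over the verifier's logits for a "hallucinated" label and a "faithful" label. The function names `hallucination_score` and `auroc` are hypothetical, and the numbers in the usage note are toy values, not results from the paper.

```python
import math


def hallucination_score(logit_hallucinated: float, logit_faithful: float) -> float:
    """Map two verifier logits to a score in [0, 1].

    Assumption: the verifier exposes one logit per label, and the score is
    the softmax probability of the "hallucinated" label.
    """
    # Subtract the max logit for numerical stability before exponentiating.
    m = max(logit_hallucinated, logit_faithful)
    e_h = math.exp(logit_hallucinated - m)
    e_f = math.exp(logit_faithful - m)
    return e_h / (e_h + e_f)


def auroc(scores, labels):
    """AUROC as the probability that a randomly chosen hallucinated example
    (label 1) scores higher than a randomly chosen faithful one (label 0).
    Ties count as half a win.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos
        for n in neg
    )
    return wins / (len(pos) * len(neg))
```

With toy scores `[0.9, 0.8, 0.3, 0.1]` and labels `[1, 0, 1, 0]`, three of the four hallucinated/faithful pairs are ranked correctly, so `auroc` returns 0.75. The pairwise form is quadratic in the number of examples, which is fine for illustration; a sort-based implementation would be preferable on full benchmarks.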

Related Articles

We Built a DNS-Based Discovery Protocol for AI Agents — Here's How It Works

Dev.to

Building AI Evaluation Pipelines: Automating LLM Testing from Dataset to CI/CD

Dev.to

Function Calling Harness 2: CoT Compliance from 9.91% to 100%

Dev.to

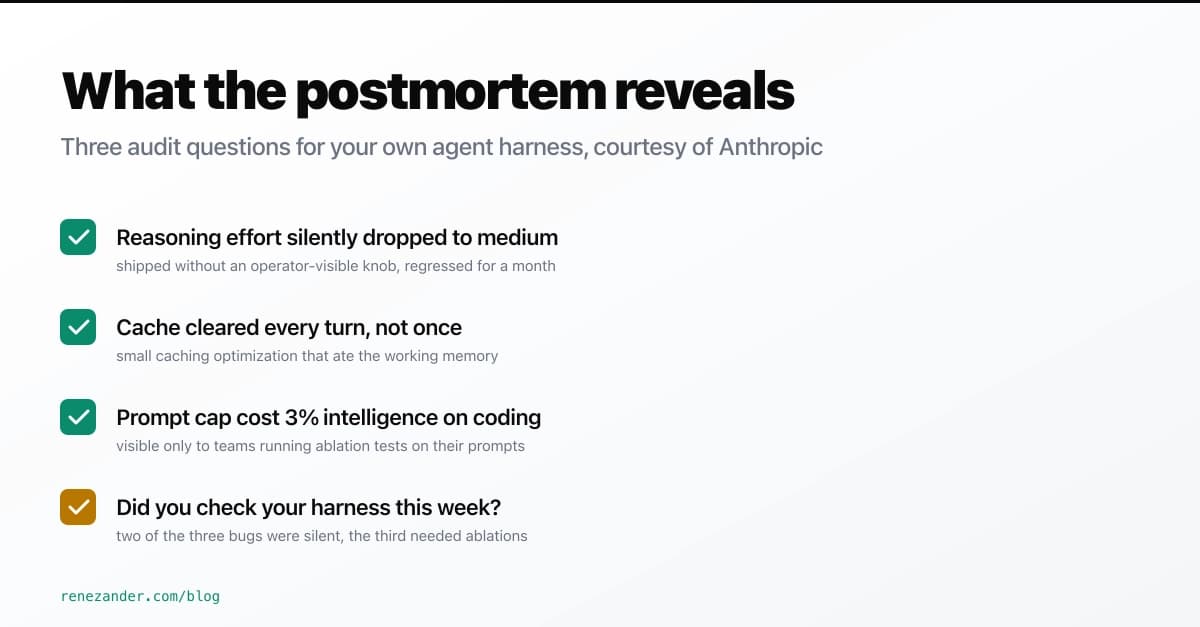

What Anthropic's April 23 Postmortem Reveals About Your Agent Harness

Dev.to

Fine-tuning YOLOv11 to detect stamps and signatures on banking documents - a practical walkthrough

Dev.to