I noticed that my usual setup didn't work as well in other languages as it did in English - the model sometimes made grammar mistakes and generated genuine garbage. Its reasoning stayed in English, and I preferred to leave it that way, since that is the language most LLMs are obviously most 'confident' in.

The answer to some of the problems with generating in a less-trained language was a lower temperature. But then again, that also affects the reasoning, which is in English, and makes creative writing less 'creative'. Regenerating from the same context became deterministic.

So that gave me an idea - what if the samplers were swapped mid-generation, based on the previous token generated? Basically the same as doing two API calls: one for thinking with one sampler preset, and the next (with the thinking in the context) with another sampler preset. However, instead of doing it by hand, you just write a check in code.
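To make the idea concrete, here is a minimal Python sketch of the switching check on its own, not the actual patch. The `<think>` / `</think>` marker strings, the `SamplerPreset` and `PresetSwitcher` names, the toy `sample` function, and the order in which top-k, min-p and temperature are applied are all assumptions for illustration; real models use their own thinking tokens.

```python
import math
import random
from dataclasses import dataclass

# Assumed marker tokens; the real ones depend on the model's chat template.
THINK_OPEN, THINK_CLOSE = "<think>", "</think>"


@dataclass
class SamplerPreset:
    temp: float
    top_k: int
    min_p: float


def sample(logits: dict, preset: SamplerPreset, rng: random.Random) -> str:
    """Toy top-k -> min-p -> temperature sampler over a token->logit dict."""
    # top-k: keep the k highest-logit candidates
    ranked = sorted(logits.items(), key=lambda kv: kv[1], reverse=True)
    ranked = ranked[: preset.top_k]
    if preset.temp <= 0.0:
        return ranked[0][0]  # temp 0 is treated as greedy decoding
    top = ranked[0][1]
    # min-p: drop tokens whose probability is below min_p * (best probability)
    kept = [(t, lg) for t, lg in ranked if math.exp(lg - top) >= preset.min_p]
    # temperature: rescale the surviving logits, then draw one token
    tokens = [t for t, _ in kept]
    weights = [math.exp((lg - top) / preset.temp) for _, lg in kept]
    return rng.choices(tokens, weights=weights, k=1)[0]


class PresetSwitcher:
    """Chooses the preset for the NEXT token based on the previous token."""

    def __init__(self, thinking: SamplerPreset, output: SamplerPreset):
        self.thinking = thinking
        self.output = output
        self.in_thinking = False

    def preset_for_next(self, prev_token: str) -> SamplerPreset:
        if prev_token == THINK_OPEN:
            self.in_thinking = True
        elif prev_token == THINK_CLOSE:
            self.in_thinking = False
        return self.thinking if self.in_thinking else self.output


if __name__ == "__main__":
    switcher = PresetSwitcher(
        thinking=SamplerPreset(temp=0.0, top_k=128, min_p=0.05),
        output=SamplerPreset(temp=1.0, top_k=40, min_p=0.05),
    )
    # Walk over an already-generated stream and show which temp comes next.
    for tok in ["<think>", "plan", "</think>", "Hello"]:
        print(repr(tok), "-> next temp:", switcher.preset_for_next(tok).temp)
```

In a real generation loop, `preset_for_next` would be called with the token that was just produced, and its result passed to the sampler for the following token. Note that in this sketch the closing marker itself is still sampled with the thinking preset.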

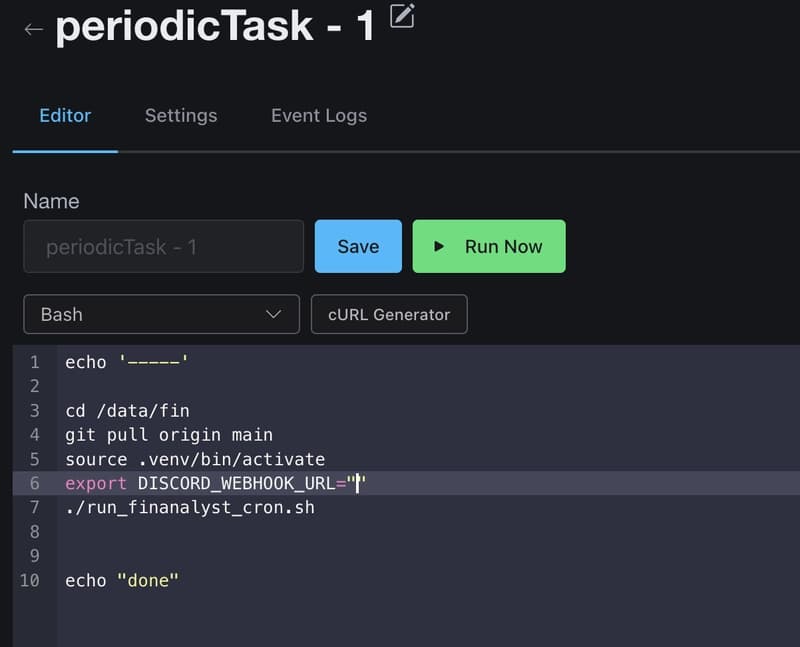

So I pulled the llama.cpp repository and (kinda) implemented it with a few lines from Claude. The concept is hacky and very simple; you just need to pass a few additional API arguments:

"thinking_sampler_override": true,

"thinking_top_k": 128,

"thinking_temp": 0.0,

"thinking_min_p": 0.05,

With these set, llama.cpp 'ignores' every other sampler you have configured and samples everything between the thinking tokens with only these samplers. Surprisingly, it worked almost right off the bat and gave some weird results. For example, on Gemma 4:

temp 1 for thinking + temp 0.0 for output: Best Ukrainian grammar so far, and the output is still random and non-deterministic, unlike temp 0 for everything

temp 0 for thinking + temp 1 for output: Also varies between generations. Grammar is still a bit noisy, but this is probably nice for writing in English(?)
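As a rough sketch, the first combination above (temp 1 for thinking, temp 0 for output) could be requested with a body like the one built below. The `thinking_*` fields only exist in the patched build described here, not in upstream llama.cpp. The `build_request` helper and the prompt are hypothetical; `prompt` and `temperature` are the usual llama.cpp server completion fields.

```python
import json


def build_request(prompt: str, *, temp: float, thinking_temp: float,
                  thinking_top_k: int = 128, thinking_min_p: float = 0.05) -> dict:
    """Build a completion request body with a separate thinking preset."""
    return {
        "prompt": prompt,
        # Regular sampler: applies to everything outside the thinking tokens.
        "temperature": temp,
        # Patched-build-only fields: apply between the thinking tokens.
        "thinking_sampler_override": True,
        "thinking_top_k": thinking_top_k,
        "thinking_temp": thinking_temp,
        "thinking_min_p": thinking_min_p,
    }


body = build_request("Write a short story in Ukrainian.",
                     temp=0.0, thinking_temp=1.0)

if __name__ == "__main__":
    # The JSON that would be POSTed to the server.
    print(json.dumps(body, indent=2))
```

Swapping the two temperature values gives the second combination.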

That also makes me wonder how other, more complex samplers would react and work with this. Unfortunately I don't have a lot of time or knowledge in this area, so I can only comment on what I experienced.

Edit: Not saying this is anything, but perhaps having more control over samplers at runtime could be beneficial, instead of tweaking them before each generation?
