Hello,

This model/quant is my daily driver, and I wanted some reference benchmarks to compare my setup with one that is 3x more expensive and 4x more power hungry.

Results first, methodology after, and a link at the end with all results.

Model: cyankiwi/MiniMax-M2.7-AWQ-4bit

Results (c1)

(I tried to upload the table as text, but it didn't work as expected.)

So to my surprise, the Spark cluster isn't that far behind. On average the 2x RTX 6000 is 2.7x faster on prompt processing and 4.88x faster on token generation, for a price difference of around 2.9x.

Power consumption is very close (normalized to 1M tokens), and at $0.10/kWh, you get: (you can change your energy price on the link I added)

Results (c2)

At two requests in parallel, it gets a bit weird (all benchmarks at each context size are run 3 times and averaged).

Well, I don't have all the explanations, so tell me if I'm doing something wrong, haha. But yeah, with parallel high contexts, we're hitting the limit of what the KV-cache can handle at once, so requests get throttled and that destroys performance.

RunPod config

cyankiwi/MiniMax-M2.7-AWQ-4bit --host 0.0.0.0 --port 8000 --tensor-parallel-size 2 --gpu-memory-utilization=0.95 --trust-remote-code --kv-cache-dtype fp8_e4m3 --enable-auto-tool-choice --tool-call-parser minimax_m2
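For anyone who wants to poke at a server started with the arguments above, here is a minimal sketch of a client request. It assumes the RunPod image exposes vLLM's OpenAI-compatible `/v1/chat/completions` route on port 8000 and that the served model name defaults to the model path; `build_chat_request` and `send` are hypothetical helper names, not part of the original setup.

```python
import json
import urllib.request

# Assumption: the served model name defaults to the model path from the config.
MODEL = "cyankiwi/MiniMax-M2.7-AWQ-4bit"


def build_chat_request(base_url: str, prompt: str, max_tokens: int = 128) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for the server."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 600.0) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)


# Example (needs a running server):
# reply = send(build_chat_request("http://localhost:8000", "Hello"))
# print(reply["choices"][0]["message"]["content"])
```

Building the request separately from sending it makes it easy to reuse the same payload when timing several runs.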

Spark config

Using this recipe: https://github.com/eugr/spark-vllm-docker/blob/main/recipes/minimax-m2.7-awq.yaml (tweaked with fp8 KV-cache), launched with

Benchmark

(I tested with more concurrency, but I focused my analysis on 1 and 2 concurrent requests; results are available here: https://nicefox.net/benchmarks/minimax-m2.7-awq-4bit/benchmarks_concurrency.md )

Conclusion

Well... Prefill is only 2.7x faster, token generation is 4.9x faster, and both setups display similar energy efficiency. My bet is that the Max-Q version would be very energy efficient.

The main difference is that the Spark cluster is my daily driver, so I spent time making it better and ensuring I had the best setup possible, while for the RTX 6000 I "just" launched the vllm image from RunPod with the same parameters, but I know there is optimization to be done.

I'm very interested in the 2x RTX 6000 setup because I'm working with a small company to set it up properly on-prem for their devs, so I'm happy to re-bench with other params if people give me a better setup for it.

You can find more details here (it's just the data compiled): https://nicefox.net/benchmarks/minimax-m2.7-awq-4bit/
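To put the speedups next to the roughly 2.9x price difference, a quick sketch of "speedup per unit of extra price" can help. The three ratios are the ones quoted in the post; `speedup_per_price` is a hypothetical helper name, and dividing one ratio by the other is just one simple way to frame value, not the author's own metric.

```python
def speedup_per_price(speedup: float, price_ratio: float) -> float:
    """Speed gained per unit of extra price; above 1.0 favors the pricier setup."""
    return speedup / price_ratio


# Ratios quoted in the post (2x RTX 6000 relative to the 2x Spark cluster).
PRICE_RATIO = 2.9
PREFILL_SPEEDUP = 2.7
GENERATION_SPEEDUP = 4.88

prefill_value = speedup_per_price(PREFILL_SPEEDUP, PRICE_RATIO)
generation_value = speedup_per_price(GENERATION_SPEEDUP, PRICE_RATIO)

print(f"prefill: {prefill_value:.2f}x per price unit")        # prefill: 0.93x per price unit
print(f"generation: {generation_value:.2f}x per price unit")  # generation: 1.68x per price unit
```

Read this way, the RTX 6000 pair is slightly worse value for prompt processing and clearly better value for token generation, at single-request concurrency.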

MiniMax M2.7 AWQ-4bit on 2x Spark vs 2x RTX 6000 96GB - performance and energy efficiency

Reddit r/LocalLLaMA / 5/2/2026


Key Points

- The post compares the performance and energy efficiency of running MiniMax M2.7 AWQ 4-bit on a 2x Spark cluster versus a 2x RTX 6000 96GB setup using published benchmark results and linked test data.

- Despite the RTX 6000 configuration being significantly more expensive, the Spark cluster is reported to be relatively close, with the 2x RTX 6000 achieving about 2.7x faster prompt processing and about 4.88x faster token generation.

- The author estimates the price difference between the two setups at roughly 2.9x, framing the Spark cluster as a cost-performance alternative rather than a direct speed substitute.

- Reported power consumption is very similar between the two approaches when normalized to 1M tokens, suggesting comparable energy use per token despite the difference in throughput.

- Using an electricity rate of $0.10/kWh, the post provides a way to estimate total energy cost per workload based on the measured power figures, emphasizing practical deployment economics.
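The energy-cost normalization described in the points above can be sketched as follows. This is a minimal illustration assuming a constant average power draw and a steady throughput. The function names are hypothetical, and the 360 W and 100 tokens/s inputs are placeholders chosen for round arithmetic, not measurements from the post.

```python
def energy_kwh_per_million_tokens(avg_power_watts: float, tokens_per_second: float) -> float:
    """Energy in kWh needed to process 1M tokens at a steady rate."""
    seconds = 1_000_000 / tokens_per_second   # time to process 1M tokens
    joules = avg_power_watts * seconds        # W * s = J
    return joules / 3_600_000                 # 1 kWh = 3.6e6 J


def cost_per_million_tokens(avg_power_watts: float,
                            tokens_per_second: float,
                            price_per_kwh: float = 0.10) -> float:
    """Electricity cost in dollars for 1M tokens at the given energy price."""
    return energy_kwh_per_million_tokens(avg_power_watts, tokens_per_second) * price_per_kwh


# Placeholder inputs (NOT from the post): 360 W average draw at 100 tokens/s.
example_kwh = energy_kwh_per_million_tokens(360, 100)
example_cost = cost_per_million_tokens(360, 100, price_per_kwh=0.10)
print(f"{example_kwh:.2f} kWh, ${example_cost:.2f} per 1M tokens")  # 1.00 kWh, $0.10 per 1M tokens
```

Because energy per token is power divided by throughput, a setup that draws several times more power but is proportionally faster ends up with a similar cost per 1M tokens.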

Related Articles

Black Hat USA

AI Business

Every handle invocation on BizNode gets a WFID — a universal transaction reference for accountability. Full audit trail,...

Dev.to

I tracked my referral sources for 30 days. AI chatbots are beating Google.

Dev.to

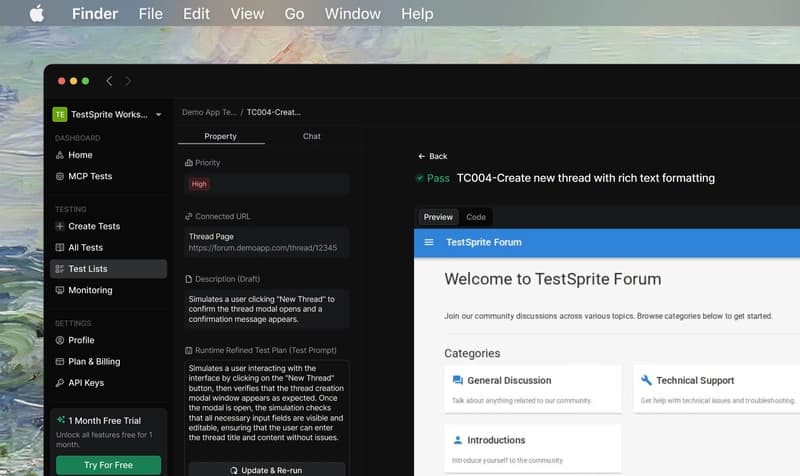

TestSprite: Review Mendalam dari Developer Indonesia — Lokalisasi, Tanggal, dan Mata Uang Rupiah

Dev.to

When AI Agents Trade Autonomously: Building Economic Actors That Never Sleep

Dev.to