EuropeMedQA Study Protocol: A Multilingual, Multimodal Medical Examination Dataset for Language Model Evaluation

arXiv cs.CL / 4/17/2026

Key Points

- The EuropeMedQA study protocol introduces a new multilingual, multimodal medical examination dataset built from official regulatory exams across Italy, France, Spain, and Portugal.

- It targets a key gap in current medical evaluations of LLMs: performance drops on non-English text and limited coverage of multimodal tasks that require diagnostic and visual reasoning.

- The protocol specifies a rigorous data curation process aligned with FAIR data principles and SPIRIT-AI guidelines, along with an automated translation pipeline for cross-language comparison.

- It plans to evaluate contemporary multimodal LLMs using a zero-shot, strictly constrained prompting approach to measure cross-lingual transfer and visual reasoning.

- The benchmark is designed to be contamination-resistant and more representative of European clinical practices to support development of more generalizable medical AI.
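
As a rough illustration of what a zero-shot, strictly constrained evaluation could look like, here is a minimal Python sketch. It is not the protocol's actual harness: the prompt wording, the item fields (`lang`, `question`, `options`, `answer`), and the `ask_model` callable are all assumptions made for this example. The idea is that the prompt contains no worked examples, the model is told to reply with a single option letter, any other reply is scored as incorrect, and accuracy is reported per language so cross-lingual gaps are visible.

```python
import re
from collections import defaultdict


def build_prompt(question, options):
    """Zero-shot prompt: no worked examples, answer restricted to one option letter.

    The wording is hypothetical; the summarized protocol does not give it.
    """
    lines = [question, ""]
    for letter, text in options.items():
        lines.append(f"{letter}. {text}")
    letters = ", ".join(options)
    lines += ["", f"Answer with exactly one letter ({letters}) and nothing else."]
    return "\n".join(lines)


def parse_answer(reply, valid_letters):
    """Return the letter only if the whole reply is a single valid option letter."""
    match = re.fullmatch(r"\s*([A-Za-z])\.?\s*", reply)
    if match and match.group(1).upper() in valid_letters:
        return match.group(1).upper()
    return None  # anything else (explanations, multiple letters) counts as wrong


def accuracy_by_language(items, ask_model):
    """Score each item and aggregate accuracy per language code.

    `ask_model` is a hypothetical callable: prompt string in, reply string out.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for item in items:
        prompt = build_prompt(item["question"], item["options"])
        predicted = parse_answer(ask_model(prompt), set(item["options"]))
        total[item["lang"]] += 1
        correct[item["lang"]] += int(predicted == item["answer"])
    return {lang: correct[lang] / total[lang] for lang in total}
```

In practice `ask_model` would wrap whichever multimodal model is under test. Image inputs are left out of this sketch, since how they are passed depends on the model's API.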

Related Articles

langchain-anthropic==1.4.1

LangChain Releases

Stop burning tokens on DOM noise: a Playwright MCP optimizer layer

Dev.to

Talk to Your Favorite Game Characters! Mantella Brings AI to Skyrim and Fallout 4 NPCs

Dev.to

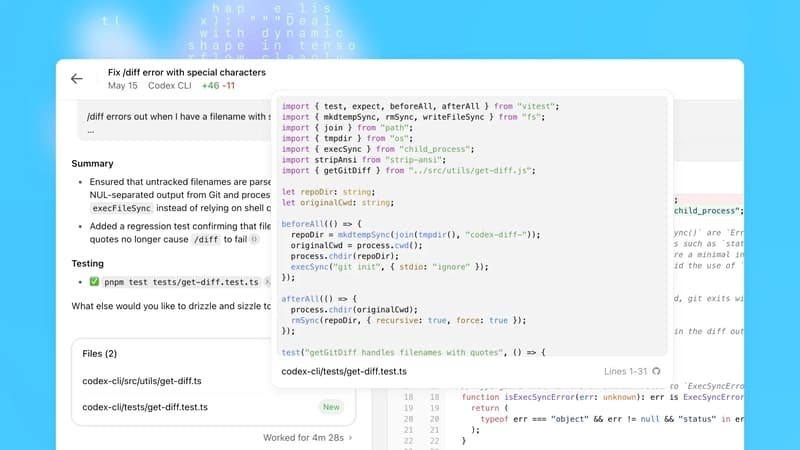

OpenAI Codex Update Adds macOS Agent, Browser, Memory; 3M Weekly Users

Dev.to

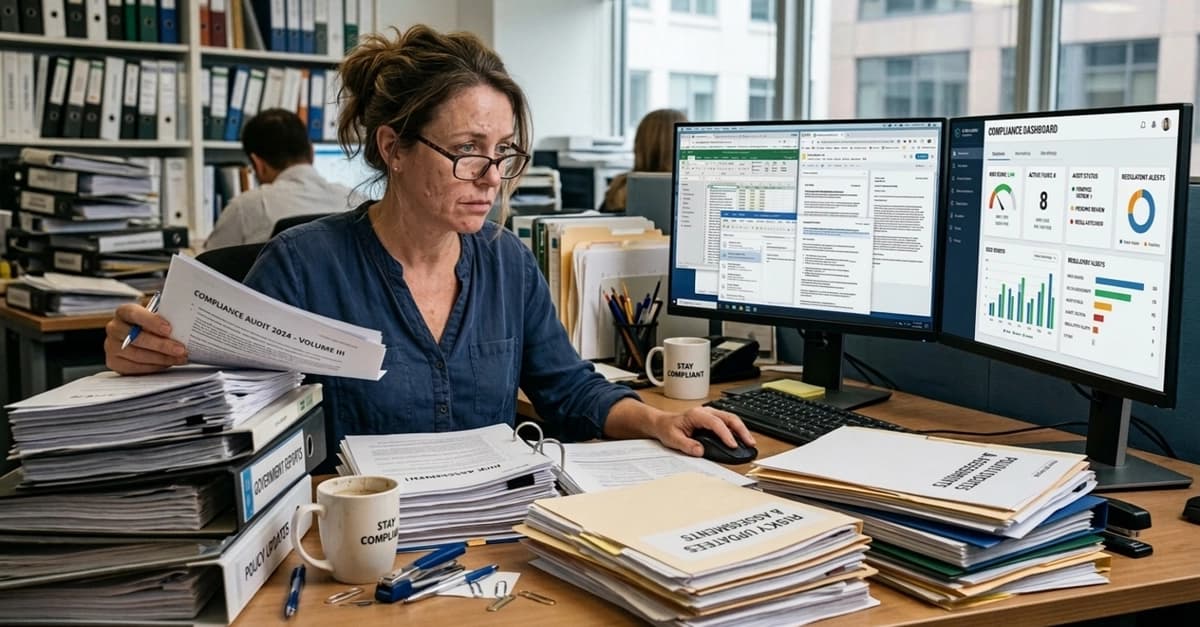

How Data Science Is Used to Predict User BeReducing Human Error in Compliance With AI Technology havior

Dev.to