SCOPE: Signal-Calibrated On-Policy Distillation Enhancement with Dual-Path Adaptive Weighting

arXiv cs.LG / 4/14/2026

Key Points

- SCOPE addresses a key limitation in On-Policy Distillation by calibrating token-level KL supervision according to the quality of on-policy signals rather than applying uniform weighting across rollouts.

- The method splits rollouts into two paths. Incorrect trajectories receive teacher-perplexity-weighted KL distillation, which emphasizes cases where the teacher can reliably correct the student. Correct trajectories use student-perplexity-weighted MLE, which focuses learning on borderline, low-confidence examples.

- SCOPE further stabilizes learning via group-level normalization that adjusts weight distributions across prompts with varying intrinsic difficulty.

- Experiments on six reasoning benchmarks report consistent gains, including an average relative improvement of 11.42% on Avg@32 and 7.30% on Pass@32 versus competitive baselines.

- Overall, the paper proposes a training-time routing and adaptive weighting strategy to improve reasoning alignment under sparse, outcome-level rewards typical of on-policy RL setups.
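
The routing and weighting described in the key points can be illustrated with a small NumPy sketch. This is not the paper's implementation: the summary does not give the exact weighting formulas, so the sketch assumes that incorrect rollouts are weighted by inverse teacher perplexity, that correct rollouts are weighted by student perplexity, and that group-level normalization rescales each path's weights to a mean of 1 within a single prompt's rollout group. The function names and the rollout dictionary keys (`correct`, `student_logprobs`, `teacher_logprobs`, `token_kl`) are hypothetical.

```python
import numpy as np

def sequence_perplexity(token_logprobs):
    """Perplexity of one rollout from its per-token log-probabilities."""
    return float(np.exp(-np.mean(token_logprobs)))

def normalize_within_group(raw_weights):
    """Rescale weights to mean 1 inside one prompt's group.

    ASSUMPTION: mean-1 rescaling stands in for the paper's group-level
    normalization, whose exact form is not given in the summary.
    """
    raw = np.asarray(raw_weights, dtype=float)
    if raw.size == 0:
        return raw
    return raw / raw.mean()

def dual_path_loss(rollouts):
    """Weighted loss for the rollouts of ONE prompt (non-empty list).

    Each rollout is a dict with hypothetical keys:
      'correct'          : bool outcome reward
      'student_logprobs' : student log-prob of each sampled token
      'teacher_logprobs' : teacher log-prob of each sampled token
      'token_kl'         : precomputed per-token KL between teacher and student
    """
    wrong = [r for r in rollouts if not r["correct"]]
    right = [r for r in rollouts if r["correct"]]

    # Path 1 (incorrect rollouts): KL distillation.
    # ASSUMPTION: lower teacher perplexity means a more reliable teacher
    # signal, so the raw weight is the inverse teacher perplexity.
    w_wrong = normalize_within_group(
        [1.0 / sequence_perplexity(r["teacher_logprobs"]) for r in wrong]
    )
    kl_terms = [w * np.mean(r["token_kl"]) for w, r in zip(w_wrong, wrong)]

    # Path 2 (correct rollouts): MLE on the student's own tokens.
    # ASSUMPTION: higher student perplexity marks a borderline,
    # low-confidence success, so it gets a larger weight.
    w_right = normalize_within_group(
        [sequence_perplexity(r["student_logprobs"]) for r in right]
    )
    mle_terms = [
        w * -np.mean(r["student_logprobs"]) for w, r in zip(w_right, right)
    ]

    loss = (sum(kl_terms) + sum(mle_terms)) / len(rollouts)
    return float(loss), w_wrong, w_right

# Toy group of four rollouts for one prompt: two incorrect, two correct.
group = [
    {"correct": False, "student_logprobs": np.array([-1.0, -1.0]),
     "teacher_logprobs": np.array([-0.1, -0.1]), "token_kl": np.array([0.5, 0.7])},
    {"correct": False, "student_logprobs": np.array([-1.0, -1.0]),
     "teacher_logprobs": np.array([-2.0, -2.0]), "token_kl": np.array([0.5, 0.7])},
    {"correct": True, "student_logprobs": np.array([-0.05, -0.05]),
     "teacher_logprobs": np.array([-0.1, -0.1]), "token_kl": np.array([0.1, 0.1])},
    {"correct": True, "student_logprobs": np.array([-1.0, -1.0]),
     "teacher_logprobs": np.array([-0.1, -0.1]), "token_kl": np.array([0.1, 0.1])},
]
loss, w_wrong, w_right = dual_path_loss(group)
```

In this toy group, the incorrect rollout that the teacher finds more predictable gets the larger KL weight, and the correct rollout on which the student was less confident gets the larger MLE weight. In an actual training loop the weights would presumably be computed without gradient flow, but the summary does not state this.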

Related Articles

Small NSFW model for chatbot

Reddit r/LocalLLaMA

ChatGPT for Nurses: Prompts That Help You Document, Communicate, and Study

Dev.to

I Added a Stopwatch to My AI in 1 LOC Using the Livingrimoire While Corporations Need a Year

Dev.to

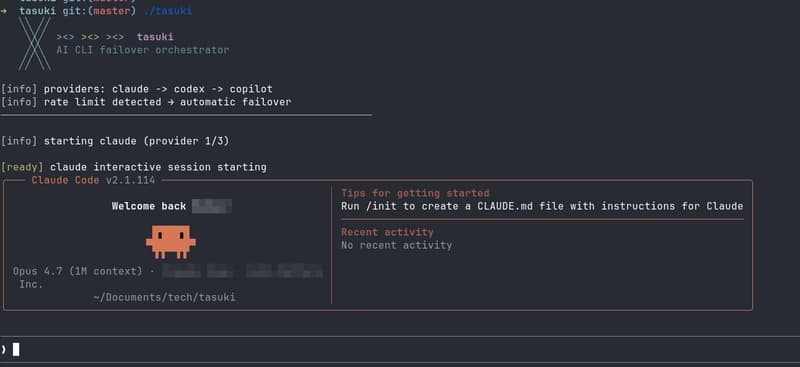

Built tasuki — an AI CLI Orchestrator that Seamlessly Hands Off Between Tools

Dev.to

I built a GNOME extension for Codex with local/remote history, live filters, Markdown export, and a read-only MCP server

Reddit r/artificial