Planning to Explore: Curiosity-Driven Planning for LLM Test Generation

arXiv cs.CL / 4/8/2026

💬 OpinionIdeas & Deep AnalysisModels & Research

Key Points

- The paper addresses how LLM-based code test generation can plateau under greedy strategies that prioritize immediate coverage gains but fail to plan for deep branches requiring preparatory setup steps.

- It proposes a curiosity-driven planning approach, CovQValue, that models the program’s branch structure as an unknown environment and uses an evolving coverage map as a proxy posterior informed by Bayesian exploration principles.

- CovQValue integrates the coverage map back into the LLM, generates multiple candidate action plans in parallel, and selects plans using LLM-estimated Q-values to balance short-term discovery with longer-term reachability.

- Experiments on TestGenEval Lite show 51–77% higher branch coverage versus greedy selection across three popular LLMs, and success on 77–84% of targets, with iterative generation evaluated via a new benchmark RepoExploreBench.

- The results suggest that sequential, curiosity-driven exploration can more effectively discover program behavior than coverage-only heuristics for LLM-guided test generation.

Related Articles

Research with ChatGPT

Dev.to

Silicon Valley is quietly running on Chinese open source models and almost nobody is talking about it

Reddit r/LocalLLaMA

Why AI Product Quality Is Now an Evaluation Pipeline Problem, Not a Model Problem

Dev.to

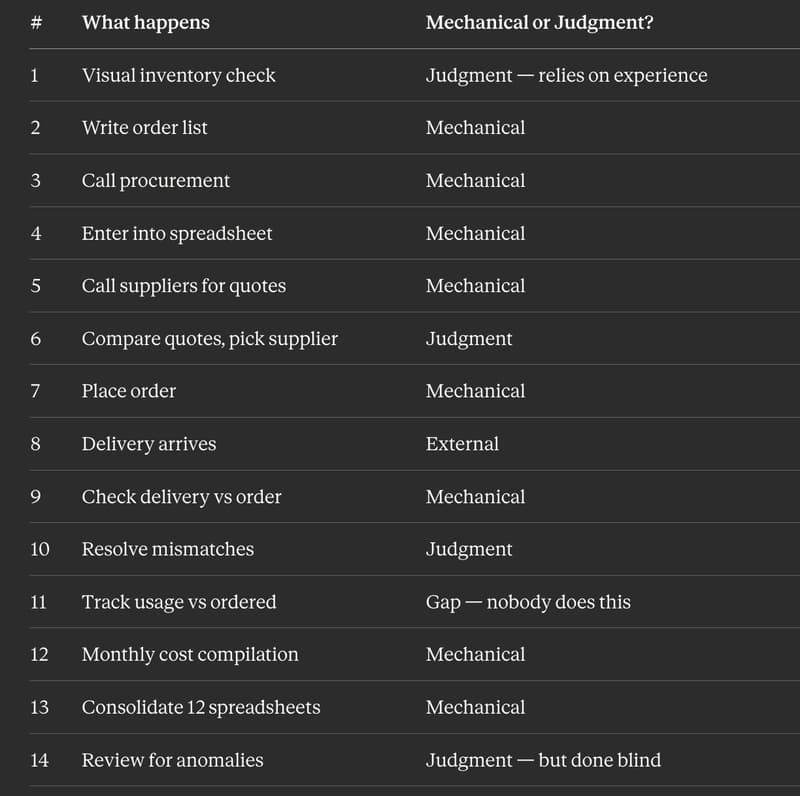

I Replaced 12 Kitchen Managers Guessing "How Much Chicken Do We Need" With 3 ML Models. Here's the Entire Architecture.

Dev.to

AI Model Router API - REST + MCP, Free Tier

Dev.to