RAD-2: Scaling Reinforcement Learning in a Generator-Discriminator Framework

arXiv cs.CV / 4/17/2026

Key Points

- The paper introduces RAD-2, a generator–discriminator reinforcement learning framework for closed-loop motion planning in high-level autonomous driving under multimodal uncertainty.

- It uses a diffusion-based generator to propose diverse trajectories, while an RL-optimized discriminator reranks candidates based on long-term driving quality to provide more effective negative feedback than imitation-only training.

- RAD-2 improves RL training stability and credit assignment with Temporally Consistent Group Relative Policy Optimization. It also adds On-policy Generator Optimization, which turns closed-loop feedback into structured optimization signals that guide the generator toward high-reward trajectories.

- For scalable training and evaluation, the authors propose BEV-Warp, a high-throughput simulation environment that performs closed-loop testing directly in bird's-eye-view (BEV) feature space via spatial warping.

- Experiments report a 56% reduction in collision rate versus strong diffusion-based planners, along with real-world gains in perceived safety and driving smoothness in complex urban traffic.
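
To make the generator-discriminator split and the group-relative update in the points above more concrete, here is a minimal Python sketch. It is not the authors' implementation: `toy_generator` and `toy_score` are hypothetical stand-ins for the diffusion generator and the learned discriminator, and the advantage computation is the plain group-relative normalization used in Group Relative Policy Optimization, without the temporal-consistency extension, which this summary does not describe.

```python
import random
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Plain group-relative normalization: (reward - group mean) / group std.

    This is the generic GRPO-style advantage, not the temporally
    consistent variant named in the paper.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


def rerank(candidates, score_fn):
    """Return candidates sorted from highest to lowest score."""
    return sorted(candidates, key=score_fn, reverse=True)


def toy_generator(n, horizon, rng):
    """Hypothetical stand-in for a trajectory generator.

    Each trajectory is a list of lateral offsets from the lane centre.
    """
    return [[rng.gauss(0.0, 1.0) for _ in range(horizon)] for _ in range(n)]


def toy_score(traj):
    """Hypothetical stand-in for a discriminator score.

    Penalizes distance from the lane centre and step-to-step changes.
    """
    offset_cost = sum(abs(x) for x in traj)
    change_cost = sum(abs(b - a) for a, b in zip(traj, traj[1:]))
    return -(offset_cost + change_cost)


rng = random.Random(0)
candidates = toy_generator(n=8, horizon=5, rng=rng)

# Reranking: the highest-scoring candidate is the one that would be executed.
best = rerank(candidates, toy_score)[0]

# Group-relative advantages: each candidate is judged against its own group.
rewards = [toy_score(t) for t in candidates]
advantages = group_relative_advantages(rewards)
```

Candidates that score above the group mean receive positive advantages and the rest receive negative ones, so a training signal exists even when every candidate in the group is poor in absolute terms.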

Related Articles

langchain-anthropic==1.4.1

LangChain Releases

Stop burning tokens on DOM noise: a Playwright MCP optimizer layer

Dev.to

Talk to Your Favorite Game Characters! Mantella Brings AI to Skyrim and Fallout 4 NPCs

Dev.to

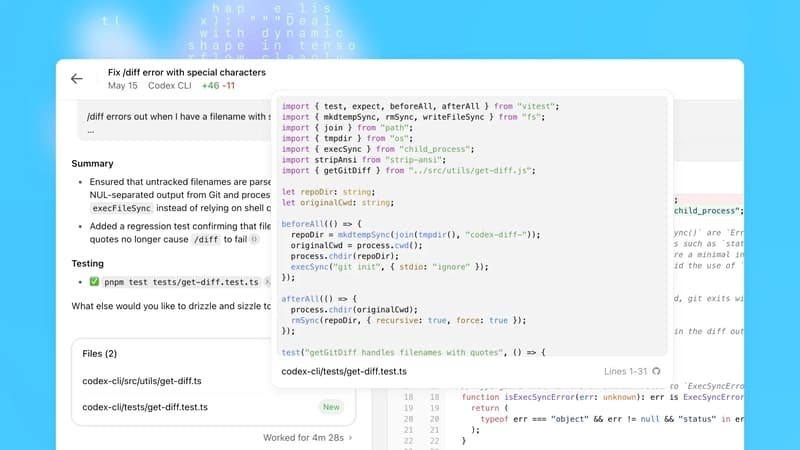

OpenAI Codex Update Adds macOS Agent, Browser, Memory; 3M Weekly Users

Dev.to

How Data Science Is Used to Predict User BeReducing Human Error in Compliance With AI Technology havior

Dev.to