SimulCost: A Cost-Aware Benchmark and Toolkit for Automating Physics Simulations with LLMs

arXiv cs.AI / 3/31/2026

Key Points

- The paper argues that evaluating LLM agents for scientific tasks should account not only for token costs but also for tool-use costs such as simulation time and experimental resources, since common metrics like pass@k fail under realistic budgets.

- It introduces SimulCost, a cost-aware benchmark and open-source toolkit for physics simulations, covering 2,916 single-round initial-guess tasks and 1,900 multi-round trial-and-error adjustment tasks across 12 simulators in fluid dynamics, solid mechanics, and plasma physics.

- The study uses analytically defined, platform-independent cost models per simulator to compare LLM-driven cost-sensitive parameter tuning against traditional scanning in both accuracy and computational expense.

- Results show frontier LLMs achieve 46–64% success in single-round mode, dropping to 35–54% under high-accuracy requirements. Multi-round mode raises success to 71–80% but is 1.5–2.5× slower than scanning, so LLM approaches can be uneconomical despite the accuracy gains.

- The authors further analyze parameter group correlations for knowledge transfer, and evaluate how in-context examples and reasoning effort affect performance, publishing code and data to enable extensions to new simulation environments.
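
To make the contrast between pass@k and a budget-constrained metric concrete, here is a minimal Python sketch. It is not taken from the SimulCost toolkit: the `Attempt` class, the cost units, and the `solved_within_budget` helper are hypothetical names introduced only for illustration. `pass_at_k` uses the commonly cited unbiased estimator, 1 - C(n-c, k)/C(n, k), which counts successes but ignores what each attempt cost to run.

```python
from dataclasses import dataclass
from math import comb


@dataclass
class Attempt:
    """One simulator run proposed by an agent (hypothetical structure)."""
    success: bool   # did the run meet the accuracy target?
    cost: float     # simulation cost in arbitrary, assumed units


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate from n samples of which c succeeded.

    Cost plays no role here: a cheap success and an expensive one count the same.
    """
    if n - c < k:
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


def solved_within_budget(attempts: list[Attempt], budget: float) -> bool:
    """Return True if a success is reached before cumulative cost exceeds budget.

    Attempts are consumed in order, as in a multi-round trial-and-error loop.
    """
    spent = 0.0
    for attempt in attempts:
        spent += attempt.cost
        if spent > budget:
            return False
        if attempt.success:
            return True
    return False


if __name__ == "__main__":
    # Two rounds: a failed run, then a successful one, each costing 4 units.
    rounds = [Attempt(success=False, cost=4.0), Attempt(success=True, cost=4.0)]
    print(pass_at_k(n=2, c=1, k=2))           # counts the task as solvable
    print(solved_within_budget(rounds, 10.0))  # enough budget for both rounds
    print(solved_within_budget(rounds, 6.0))   # budget runs out before success
```

Under these assumptions the same pair of attempts counts as solved by pass@2 yet fails once the budget is tighter than the combined cost of both runs, which is the kind of gap a cost-aware benchmark is meant to expose.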

Related Articles

Black Hat Asia

AI Business

How to Verify Information Online and Avoid Fake Content

Dev.to

I built an AI code reviewer solo while working full-time — honest post-launch breakdown

Dev.to

Mobile App MVP: Build, Launch, and Validate in Under a Week

Dev.to

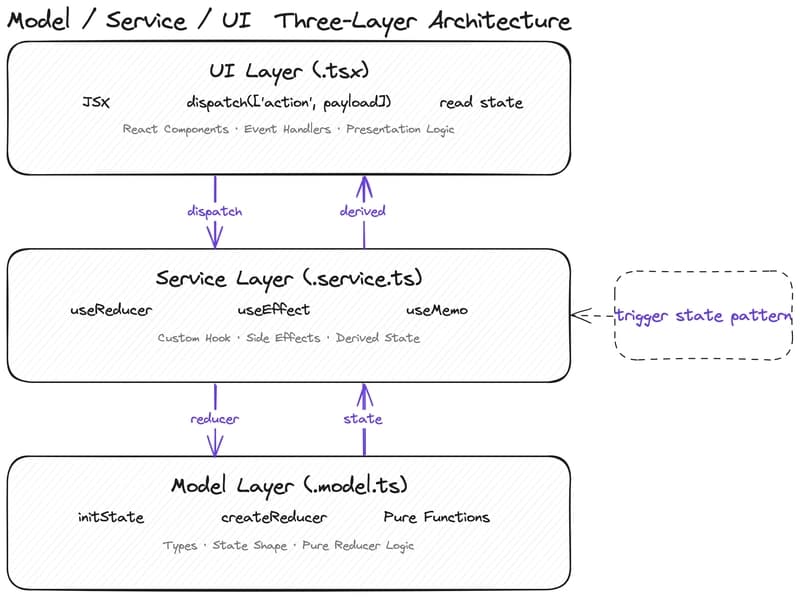

Why Your State Management Is Slowing Down AI-Assisted Development

Dev.to