Robust Parameter Learning for Uncertain MDPs

arXiv cs.LG / 5/5/2026


Key Points

- The paper targets learning and verification of unknown Markov decision processes (MDPs) under transition uncertainty, where existing methods often treat each transition probability’s uncertainty independently.

- It builds on parametric MDPs (pMDPs), in which transition probabilities are expressions over shared parameters, so that the learned uncertainty captures algebraic dependencies among transitions.

- The authors project the uncertainty derived from observed transition frequencies into the pMDP parameter space, which yields a PAC-style uncertainty model of the underlying MDP.

- The induced confidence set is algorithmically hard to work with directly, so they propose a hierarchy of sound polytopic outer approximations that make it tractable.

- Experiments show the proposed approach yields substantially tighter uncertainty estimates than classical interval-based uncertain MDP learning techniques.
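The sketch below makes the contrast between interval-based and parameter-space learning concrete. It is a minimal Python illustration, not the paper's construction. It assumes the following, all of which are hypothetical:

- a one-parameter pMDP fragment,
- made-up visit counts,
- plain Hoeffding bounds.

In this fragment, two states share a parameter p. State s1 moves to `goal` with probability p, and state s2 moves to `fail` with probability 1 - p. Interval-based learning bounds each transition separately. Learning in parameter space instead pools the observations from both states into a single bound on p.

```python
import math


def hoeffding_radius(n: int, delta: float) -> float:
    """Two-sided Hoeffding radius for the mean of n i.i.d. Bernoulli samples."""
    return math.sqrt(math.log(2.0 / delta) / (2.0 * n))


def interval(successes: int, n: int, delta: float) -> tuple[float, float]:
    """Confidence interval for a Bernoulli mean, clipped to [0, 1]."""
    p_hat = successes / n
    r = hoeffding_radius(n, delta)
    return max(0.0, p_hat - r), min(1.0, p_hat + r)


def width(iv: tuple[float, float]) -> float:
    return iv[1] - iv[0]


# Hypothetical pMDP fragment (illustrative counts, not data from the paper):
#   s1 -> goal with probability p
#   s2 -> fail with probability 1 - p
n1, goal_from_s1 = 200, 140  # 140 of 200 visits to s1 went to goal
n2, fail_from_s2 = 100, 33   # 33 of 100 visits to s2 went to fail
delta = 0.05                 # overall failure probability

# Interval-based learning: one interval per transition.
# A union bound splits delta so both intervals hold simultaneously.
iv_s1_goal = interval(goal_from_s1, n1, delta / 2)
iv_s2_fail = interval(fail_from_s2, n2, delta / 2)

# Parameter-space learning: both states are evidence about the same p.
# In s2, "not fail" occurs with probability p, so its complement counts
# toward p. This assumes samples are independent across visits.
p_successes = goal_from_s1 + (n2 - fail_from_s2)
iv_p = interval(p_successes, n1 + n2, delta)

# Map the single interval on p back to both transitions.
implied_s1_goal = iv_p
implied_s2_fail = (1.0 - iv_p[1], 1.0 - iv_p[0])

if __name__ == "__main__":
    print("independent s1->goal:", iv_s1_goal, "width", round(width(iv_s1_goal), 3))
    print("independent s2->fail:", iv_s2_fail, "width", round(width(iv_s2_fail), 3))
    print("parametric  s1->goal:", implied_s1_goal, "width", round(width(implied_s1_goal), 3))
    print("parametric  s2->fail:", implied_s2_fail, "width", round(width(implied_s2_fail), 3))
```

Two effects tighten the parametric bounds in this toy case. First, both states contribute samples to the same parameter. Second, only one interval needs to hold, so no union-bound split is needed.

With one parameter, the projected confidence set is just an interval. With several parameters and polynomial transition expressions it need not be that simple, which is why the paper resorts to outer approximations.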

Related Articles

The 55.6% problem: why frontier LLMs fail at embedded code

Dev.to

Four CVEs in a week, all the same shape: when agents execute LLM-generated code

Dev.to

Healthcare AI Is Absorbing Institutional Knowledge It Can't Actually Hold

Reddit r/artificial

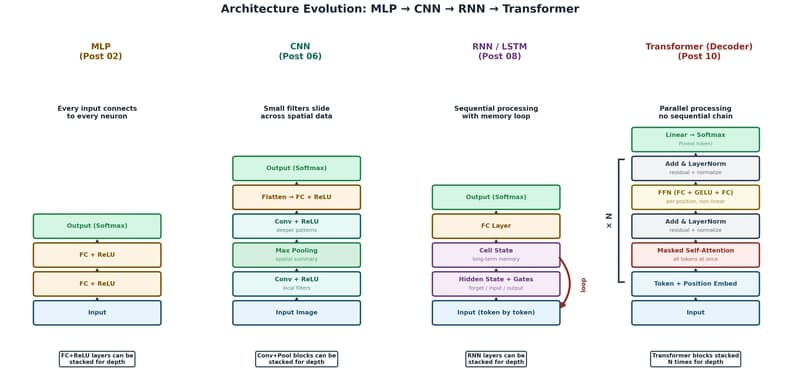

The Transformer: The Architecture Behind Modern AI

Dev.to

Foundational Models Defining a New Era in Vision: A Survey and Outlook

Dev.to