Every LLM conversation starts from zero. RAG helps, but it can't learn from what's happening right now. MDA is my attempt to fix that.

MDA encodes knowledge as associative entity networks, updates them in real time via the Oja rule (no backprop, no reindexing), and retrieves context by activating concept graphs rather than running a similarity search. It runs CPU-first, is model-agnostic, and works with Ollama/OpenAI/Anthropic out of the box. It's available as an MCP server, with GPU acceleration support for batch workloads.

One thing I find genuinely interesting: multiple agents can share the same MDA instance and reason over a common memory. Agent A learns something, and Agent B picks it up through associative traversal. B doesn't search for it; the concept network connects the two. It starts to feel less like retrieval and more like shared intuition.

On the benchmark: these numbers come from synthetic questions I wrote myself, not a community-constructed eval. Take them as directional, not definitive. MDA is not a RAG killer; the goal is to cover what RAG and LLMs leave on the table, not to replace them. If you run your own tests and find different results, I'd genuinely want to hear it.

Inference model: Qwen3 6-35B-A3B / Judge model: Claude Haiku

If you'd like to share what you think MDA does well or where it falls short, I'd love to hear it.

Source code: https://github.com/rangle2/mda
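
For readers who haven't met the Oja rule, here is a minimal sketch of the textbook single-unit form, `w <- w + lr * y * (x - y * w)` with `y = w . x`. It is not taken from the MDA source; the function names, learning rate, and toy data are assumptions chosen for illustration. It shows why no backprop is needed: each update uses only the current input and the unit's own output, and the subtracted term keeps the weights from growing without bound.

```python
import math
import random


def oja_update(w, x, lr=0.01):
    """One Oja-rule step: w <- w + lr * y * (x - y * w), where y = w . x."""
    y = sum(wi * xi for wi, xi in zip(w, x))
    return [wi + lr * y * (xi - y * wi) for wi, xi in zip(w, x)]


def norm(v):
    """Euclidean length of a vector given as a list of floats."""
    return math.sqrt(sum(vi * vi for vi in v))


# Toy stream (hypothetical data): two strongly correlated features,
# so the dominant direction of the input is roughly (1, 1) / sqrt(2).
rng = random.Random(0)
w = [0.5, 0.1]
for _ in range(5000):
    s = rng.gauss(0.0, 1.0)   # shared signal
    n = rng.gauss(0.0, 0.1)   # small independent noise
    x = [s + n, s - n]
    w = oja_update(w, x)      # purely local, online update

print("weights:", w, "norm:", norm(w))
```

On this toy stream the weight vector settles near unit length and lines up with the shared signal, which is the self-normalizing behavior that makes the rule usable for online updates.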

Persistent memory system for LLMs that actually learns mid-conversation

Reddit r/LocalLLaMA / 5/3/2026

💬 Opinion · Developer Stack & Infrastructure · Tools & Practical Usage · Models & Research

Key Points

- The article describes “MDA,” a persistent memory approach for LLMs aimed at learning during an ongoing conversation rather than starting from scratch each turn.

- MDA represents knowledge as associative entity networks that update in real time using the Oja rule, avoiding backpropagation and reindexing.

- Instead of similarity-based retrieval (like standard RAG), MDA retrieves context by activating concept graphs for more connection-based reasoning.

- The system is presented as model-agnostic and CPU-first, supports multiple LLM providers out of the box (e.g., Ollama/OpenAI/Anthropic), and is delivered as an MCP server with GPU acceleration for batch workloads.

- The author reports synthetic benchmark results where MDA outperforms RAG on overall accuracy and especially on long-turn retention, and claims multiple agents can share a common memory instance for shared reasoning.
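
The concept-graph retrieval and shared-memory points above can be illustrated with a small spreading-activation sketch. This is a toy under stated assumptions, not MDA's actual API: `ConceptMemory`, `associate`, `recall`, and the `hops`, `decay`, and `threshold` parameters are hypothetical names, and the link update is a simplified bounded Hebbian step rather than the project's real learning rule.

```python
from collections import defaultdict


class ConceptMemory:
    """Toy associative memory (hypothetical): entities are nodes,
    edge weights are association strengths in [0, 1)."""

    def __init__(self):
        self.weights = defaultdict(dict)

    def associate(self, a, b, lr=0.5):
        # Simplified bounded Hebbian step for two co-active entities:
        # w <- w + lr * (1 - w). The link strengthens but never exceeds 1.
        w = self.weights[a].get(b, 0.0)
        w += lr * (1.0 - w)
        self.weights[a][b] = w
        self.weights[b][a] = w

    def recall(self, cues, hops=2, decay=0.5, threshold=0.05):
        # Spread activation outward from the cue entities, hop by hop.
        activation = {c: 1.0 for c in cues}
        frontier = dict(activation)
        for _ in range(hops):
            next_frontier = {}
            for node, act in frontier.items():
                for neighbor, w in self.weights[node].items():
                    spread = act * w * decay
                    # Keep only the strongest activation seen for each node.
                    if spread >= threshold and spread > activation.get(neighbor, 0.0):
                        activation[neighbor] = spread
                        next_frontier[neighbor] = spread
            frontier = next_frontier
        related = [(n, a) for n, a in activation.items() if n not in cues]
        return sorted(related, key=lambda pair: -pair[1])


# One shared instance, two agents (entity names are made up).
memory = ConceptMemory()

# Agent A records two associations while working.
memory.associate("deploy", "staging-cluster")
memory.associate("staging-cluster", "vpn-required")

# Agent B cues on "deploy" and reaches "vpn-required" by traversal,
# even though nothing links the two entities directly.
results = memory.recall(["deploy"])
print(results)
```

The design choice worth noting is that recall never compares the query against stored text. It only follows weighted links, so a fact two hops away can surface if the intermediate associations are strong enough.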

Related Articles

Black Hat USA

AI Business

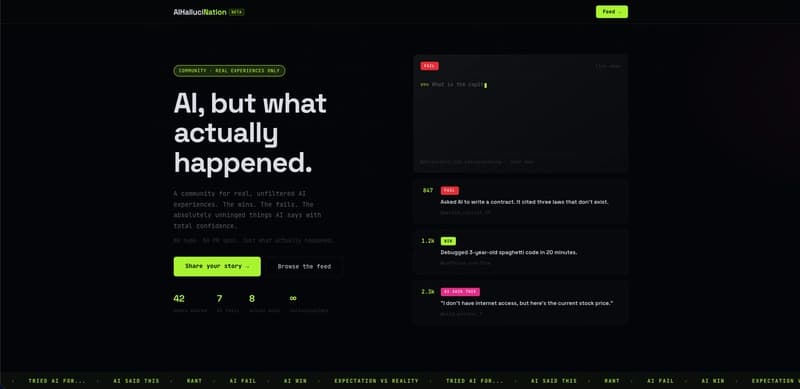

I used AI to moderate AI content — here's what I learned building AIHallucination

Dev.to

Stop Googling Prompts — Here's the Freelancer AI Toolkit That Actually Works

Dev.to

AI Powered Scheduling for Field Operations by Pablo M. Rivera

Dev.to

AI Deleted My Tests and Said 'All Tests Pass' — A Horror Story from Porting 'typia' from TypeScript to Go

Dev.to