Anthropic struggling with Chinese competition, its own safety obsession

The maker of Claude faces headwinds as it rushes to go public

Anthropic, riding a wave of goodwill after resisting demands from the US Defense Department to soften model safeguards, is reportedly planning to go public as soon as Q4 2026.

That may not be soon enough to avoid the undertow of financial pressure, competition from China, and the challenge of delivering AI models that provide some measure of safety without sacrificing too much utility.

The company's financial picture isn't pretty. In a legal filing [PDF] earlier this month, CFO Krishna Rao revealed that the company, which has raised $30 billion, has only managed to make $5 billion while spending $10 billion on inference and training alone.

Against this backdrop, recent cost-saving moves designed to reduce token demand during peak hours fail to inspire optimism.

But there's a more fundamental risk – remaining relevant in the face of increasingly capable competition from China.

On Monday, the US-China Economic and Security Review Commission issued a report assessing the competitive threat posed by Chinese AI companies. "Chinese labs have narrowed performance gaps with top Western large language models," the report says. "They have also developed key architectural and training advances that are now industry standards."

The success of Chinese AI companies can be seen in the popularity metrics of sites like LLM Rankings, which tracks the most popular models on OpenRouter, an API and marketplace for providing developers with access to multiple AI models through a single interface.

Presently, the top six models in that ranking come from Chinese AI companies. They are: MiMo-V2-Pro (Xiaomi), Step 3.5 Flash (StepFun), DeepSeek V3.2 (DeepSeek), MiniMax M2.7 (MiniMax), MiniMax M2.5 (MiniMax), and GLM 5 Turbo (Z.ai).

Anthropic's Claude Opus 4.6 and Claude Sonnet 4.6 currently occupy slots seven and eight.

Perhaps more significantly, Anthropic has seen its market share in that ranking slip from 29.1 percent on March 22, 2025, to 13.3 percent on March 21, 2026.

That's only one measurement, and Anthropic has been doing well in the enterprise market, well enough to worry rival OpenAI.

But absent US government protectionism, the US AI biz faces rivals who deliver similar results for one tenth of the price or less. When Kilo Code compared the cost of Claude Opus 4.6 to MiniMax M2.7 a few days ago, it found "MiniMax M2.7 delivered 90 percent of the quality for 7 percent of the cost ($0.27 total vs $3.67)."

Anthropic claims that MiniMax, Moonshot AI, and DeepSeek copied or "distilled" its Claude models (which were themselves built from content often copied without consent).

But given the underwhelming track record of US efforts to encourage Chinese respect for US intellectual property, it seems doubtful Anthropic's appeal for "a coordinated response across the AI industry, cloud providers, and policymakers" will be enough to sustain the pricing needed to reach positive cash flow in a reasonable time frame.

Finally, Anthropic faces the challenge of being all things to all customers. The company has built its brand around safety, and has won over many corporate customers and consumers as a result. But it has alienated the current US administration, and its effort to maintain model safety risks pushing away the security community and developers who do security work.

The Register has corresponded with a handful of security researchers who all expressed disillusionment with how the Claude model family has performed for bug hunting and exploit testing in recent months.

"It's very, very, very heavily censored now," one security researcher, who asked not to be identified, told The Register. "The CBRN (Chemical, Biological, Radiological, and Nuclear) blocker has been cranked way up. … We're all abandoning it as now it's triggering a stupid number of false positives."

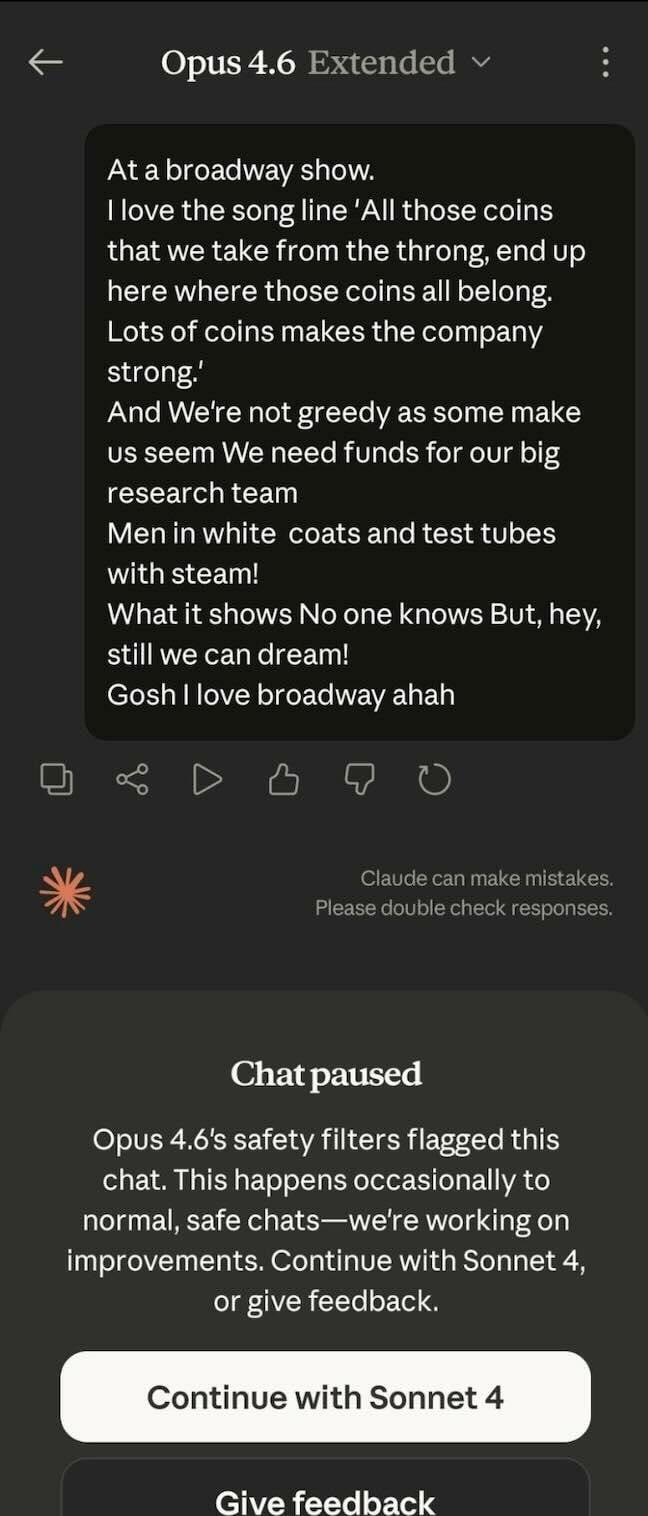

To demonstrate the model's hypersensitivity, we were provided with a screenshot in which Opus flagged a chat about the Tony Award-winning musical Urinetown as unsafe.

Anthropic confirmed to The Register that there have been security-focused changes, pointing to safeguards added with the release of Opus 4.6 in February.

"As part of our ongoing safety commitments as described in our Claude Opus 4.6 announcement, we are rolling out new cyber safeguards for Claude Opus 4.6," the company's documentation explains. "These safeguards are designed to automatically detect and block requests that may indicate prohibited cybersecurity usage under our Usage Policy."

The company concedes, "In some cases, these guardrails may also block dual-use cybersecurity activities with legitimate defensive purposes, such as vulnerability discovery."

Indeed, there are people posting on social media who claim to have run afoul of these guardrails for security-related work.

Anthropic does provide a form that security professionals can use to petition for an exemption, but from what we're told, not everyone who applies gets cleared and the process is not quick.

The researcher, who claims to have just cancelled a $200/month Max subscription, reported knowing around seven people who have ditched Claude recently over its increased rate of refusal for security and vulnerability work.

One such person we were referred to echoed this sentiment. "Yes, as of late it seems that US firms have gone a bit too far in attempting to make their services 'helpful, harmless, and honest,'" we were told. "I've noticed Claude not just refusing to answer questions but actively avoiding topics and attempting to steer the conversation away from certain topics even in a research context. Security research is especially difficult."

This individual views the lack of transparency by US commercial AI companies as a problem. "They say it's an existential threat but then demand unaccountable control of them?"

A third researcher who corresponded with The Register said, "At the moment what I'm using is this new thing called MiniMax and it's a distilled version of Claude. Doesn't matter that it's Chinese. It's cheap and as good as, if not better, than Claude's best models right now."

While Anthropic prepares to go public, at least some of the public is going elsewhere. ®
